qingyuan18

Already resolved, thanks. ---Original Message--- From: ***@***.***> Sent: Monday, June 5, 2023, 6:18 PM To: ***@***.***>; Cc: ***@***.******@***.***>; Subject: Re: [THUDM/ChatGLM-6B] [BUG/Help]

Thanks, I have already resolved it in my environment, haha. ---Original--- From: ***@***.***> Date: Sun, Apr 28, 2024 17:13 PM To: ***@***.***>; Cc: ***@***.******@***.***>; Subject: Re: [RVC-Boss/GPT-SoVITS] api.py not work due to incorrect modulepath (Issue #1011)

> OP, could you take a look at this PR? I have already merged it. Does it solve your problem?

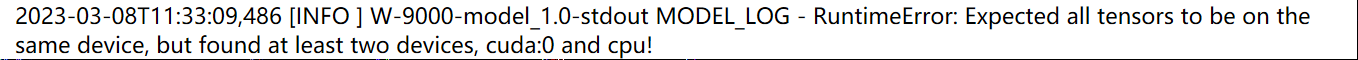

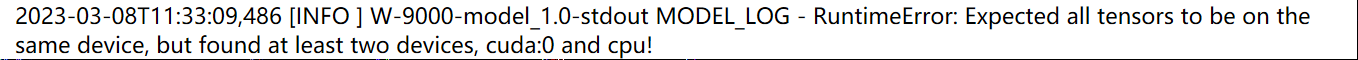

> @qingyuan18 can you try setting kernel injection to True?

Yes, I have tried that. DeepSpeed loads successfully, but inference throws an error: "  "

> > @qingyuan18 can you try setting kernel injection to True?
>
> Yes, I have tried that. DeepSpeed loads successfully, but inference throws an error: " ...

With the SageMaker LMI inference image (vLLM 0.7.0+), each streamed chunk message is returned in the following format: `line {"token": {"id": 1947, "text": " art", "log_prob": 0.0}} line {"token": {"id":...
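
To make the format above concrete, here is a minimal sketch of how such a stream could be consumed. It assumes each message is one JSON object per line, terminated by a newline, and that a byte chunk may end in the middle of a line. The function name `iter_token_texts` and the second sample token are hypothetical, made up for illustration. This is not the litellm implementation.

```python
import json
from typing import Iterable, Iterator


def iter_token_texts(chunks: Iterable[bytes]) -> Iterator[str]:
    """Yield token text from newline-delimited JSON spread across byte chunks."""
    buffer = b""
    for chunk in chunks:
        buffer += chunk
        # Parse only complete lines; a partial trailing line stays buffered.
        while b"\n" in buffer:
            line, buffer = buffer.split(b"\n", 1)
            line = line.strip()
            if not line:
                continue
            yield json.loads(line)["token"]["text"]
    # Flush a final line that was not newline-terminated.
    tail = buffer.strip()
    if tail:
        yield json.loads(tail)["token"]["text"]


# Sample input: the first line matches the format quoted above; the second
# token is invented, and the chunk boundary deliberately splits it.
sample_chunks = [
    b'{"token": {"id": 1947, "text": " art", "log_prob": 0.0}}\n{"tok',
    b'en": {"id": 1, "text": "!", "log_prob": 0.0}}\n',
]
generated_text = "".join(iter_token_texts(sample_chunks))
```

Buffering raw bytes and splitting on newlines avoids calling `json.loads` on a partial object when a chunk boundary falls inside a message.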

> [@qingyuan18](https://github.com/qingyuan18) can you share what change you made for the fix?

Code path: litellm/litellm/llms/sagemaker/common_utils.py -> AWSEventStreamDecoder -> aiter_bytes

Changed code:

```
async def aiter_bytes(
    self, iterator: AsyncIterator[bytes]
)...
```