DeepSpeed on a T4 GPU server: error when running Stable Diffusion model inference

Describe the bug: calling `deepspeed.init_inference` on a Stable Diffusion pipeline fails with an error (see below).

To Reproduce

Steps to reproduce the behavior:

    import deepspeed
    import torch

    # `pipe` is the Stable Diffusion pipeline loaded earlier (not shown).
    try:
        print("begin load deepspeed....")
        deepspeed.init_inference(
            model=getattr(pipe, "model", pipe),  # falls back to `pipe` itself when it has no `.model`
            mp_size=1,  # number of GPUs
            dtype=torch.float16,  # dtype of the weights (fp16)
            replace_method="auto",  # let DS automatically identify the layers to replace
            replace_with_kernel_inject=False,  # kernel injection disabled
        )
        print("model accelerated with deepspeed!")
    except Exception as e:
        print("deepspeed accelerate exception!")
        print(e)

Expected behavior: the SD model loads successfully.

ds_report output: instead, the following error is thrown: `MODEL_LOG - module 'diffusers.models.vae' has no attribute 'AutoencoderKL'`
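The error says that `AutoencoderKL` cannot be found in `diffusers.models.vae`. A quick way to see where the installed diffusers actually exposes that class is a small import probe like the one below. This is a minimal sketch: `module_has_attr` is a hypothetical helper, and the second module path, `diffusers.models.autoencoder_kl`, is an assumption about newer diffusers releases rather than something stated in this thread.

```python
import importlib


def module_has_attr(module_path: str, attr: str) -> bool:
    """Return True if `module_path` imports cleanly and defines `attr`."""
    try:
        module = importlib.import_module(module_path)
    except ImportError:
        # Covers ModuleNotFoundError: the module (or a parent package) is absent.
        return False
    return hasattr(module, attr)


if __name__ == "__main__":
    # The first path is the one named in the error message; the second is an
    # assumed (hypothetical) location in newer diffusers releases.
    for path in ("diffusers.models.vae", "diffusers.models.autoencoder_kl"):
        print(f"{path}.AutoencoderKL present: {module_has_attr(path, 'AutoencoderKL')}")
```

If the first line prints `False`, the installed diffusers does not provide the class at the path named in the error, which points to a version mismatch between diffusers and DeepSpeed rather than a problem with the T4 setup.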


System info:

- Hardware: NVIDIA T4 GPU (AWS g4dn instance)

- Number of GPUs: 1


- Python version: 3.8

- PyTorch version: 1.12


Thank you for using DeepSpeed, @qingyuan18. Can you share which version of diffusers you are using?

The same issue is reported here: https://github.com/microsoft/DeepSpeed/issues/2968

@qingyuan18 can you try setting kernel injection to True?


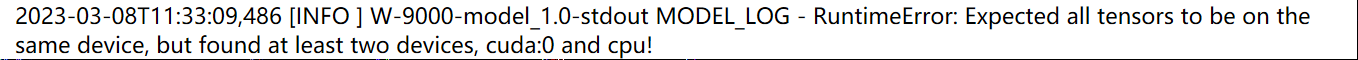

Yes, I have tried that. DeepSpeed loads successfully, but inference throws an error.


One more question: do I need to explicitly move the model modified by DeepSpeed onto the GPU, as below?

    import deepspeed
    import torch

    # `model` is the Stable Diffusion pipeline loaded earlier (not shown).
    try:
        print("begin load deepspeed....")
        deepspeed.init_inference(
            model=getattr(model, "model", model),  # falls back to `model` itself when it has no `.model`
            mp_size=1,  # number of GPUs
            dtype=torch.float16,  # dtype of the weights (fp16)
            replace_method="auto",  # let DS automatically identify the layers to replace
            replace_with_kernel_inject=False,  # kernel injection disabled
        )
        print("model accelerated with deepspeed!")
    except Exception as e:
        print("deepspeed accelerate exception!")
        print(e)

    # The explicit move to the GPU that the question is about.
    model = model.to("cuda:0")
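For comparison, a minimal sketch of a different ordering: move the pipeline to the GPU first, then wrap it, and keep the object that `deepspeed.init_inference` returns (an `InferenceEngine` wrapping the pipeline) instead of discarding it. This assumes `pipe` is an already-loaded diffusers pipeline and that the engine forwards calls to the wrapped pipeline; `accelerate_pipeline` is a hypothetical helper name, not part of DeepSpeed or diffusers.

```python
def accelerate_pipeline(pipe, kernel_inject: bool = True):
    """Move a loaded diffusers pipeline to the GPU, then wrap it with DeepSpeed.

    Assumption: `pipe` is an already-loaded diffusers pipeline (not shown here).
    """
    # Imported lazily so this helper can be defined without torch/DeepSpeed installed.
    import deepspeed
    import torch

    # Put the weights on the GPU before handing the pipeline to DeepSpeed.
    pipe = pipe.to("cuda")

    # Keep the returned engine: it is the object to call for inference.
    engine = deepspeed.init_inference(
        pipe,
        mp_size=1,  # number of GPUs
        dtype=torch.float16,  # fp16 weights
        replace_with_kernel_inject=kernel_inject,
    )
    return engine
```

Typical usage would be `engine = accelerate_pipeline(pipe)` followed by `engine(prompt)`, called the same way as `pipe(prompt)`, assuming the engine passes the call through to the pipeline unchanged.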

@qingyuan18 What version of diffusers and deepspeed are you using?
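To answer this, a short script can print the installed versions of the relevant packages. This is a hypothetical helper (`installed_versions` is not part of any library shown here); it relies only on the standard-library `importlib.metadata`, which is available from Python 3.8, the version in the report.

```python
from importlib import metadata
from typing import Dict, Iterable, Optional


def installed_versions(packages: Iterable[str]) -> Dict[str, Optional[str]]:
    """Map each distribution name to its installed version, or None if it is absent."""
    versions: Dict[str, Optional[str]] = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


if __name__ == "__main__":
    for name, version in installed_versions(["diffusers", "deepspeed", "torch"]).items():
        print(f"{name}: {version or 'not installed'}")
```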

Closing. Please reopen if the issue still exists.