diffusers

🤗 Diffusers: State-of-the-art diffusion models for image, video, and audio generation in PyTorch.

This simplifies `AttentionBlock` by always making q, k, and v 3D tensors, as we do in `CrossAttention`. This way we can also leverage sliced attention and xformers attention in this block.

Corrects the stacklevel of `deprecate` to make it easier for users to adapt their code. Thanks for the issue @keturn
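
To illustrate why the stacklevel matters, here is a minimal sketch using only the standard `warnings` module. `deprecate_sketch`, `old_api`, and `user_code` are hypothetical names made up for this example; this is not the signature or implementation of the library's `deprecate` helper.

```python
import warnings


def deprecate_sketch(name, removed_in, message, stacklevel=3):
    """Emit a FutureWarning attributed to the user's code.

    Hypothetical helper for illustration only.
    stacklevel=1 would point at the warnings.warn call below,
    stacklevel=2 at the library function calling this helper,
    stacklevel=3 at the user code calling that library function.
    """
    warnings.warn(
        f"`{name}` is deprecated and will be removed in version {removed_in}. {message}",
        FutureWarning,
        stacklevel=stacklevel,
    )


def old_api():
    # Stand-in for a library function that users call directly.
    deprecate_sketch("old_api", "1.0.0", "Use `new_api` instead.")
    return 42


def user_code():
    return old_api()  # the warning should be reported for this line


# Record the warning instead of printing it, so its origin can be inspected.
with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    user_code()

print(caught[0].lineno, caught[0].message)
```

With a stacklevel that is too low, the reported line would sit inside the library, which tells users nothing about which of their own calls needs to change.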

### Describe the bug I am trying to load up to 4 fine-tuned models using a pipeline. Here is what my code looks like: ``` pipe1 = StableDiffusionPipeline.from_pretrained(model_path1, revision="fp16", torch_dtype=torch.float16) pipe2 =...

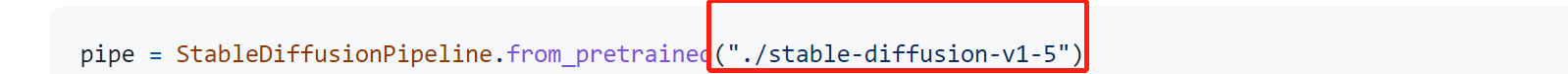

### Model/Pipeline/Scheduler description How to download the stable-diffusion-v1-5 model to the local disk ### Open source status - [X] The model implementation is available - [X] The model weights are...

Thank you for releasing a wonderful repository! Let me ask a newbie question. **What API design would you like to have changed or added to the library? Why?** `diffusers.DDPMScheduler.set_timesteps` **What...

### Describe the bug https://github.com/huggingface/diffusers/blob/v0.9.0/src/diffusers/schedulers/scheduling_ddim.py#L303 https://github.com/huggingface/diffusers/blob/v0.9.0/src/diffusers/schedulers/scheduling_ddim.py#L278 It seems that the `model_output` at L303 should be the predicted epsilon. However, it is actually the predicted original sample when `prediction_type == "sample"`....
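
The distinction raised in this report can be sketched with the standard forward-process relation x_t = sqrt(alpha_prod_t) * x_0 + sqrt(1 - alpha_prod_t) * epsilon. The function below is a hypothetical illustration on plain floats standing in for tensors, not the scheduler's actual code; `to_epsilon_and_x0` and its parameters are names chosen for this example.

```python
import math


def to_epsilon_and_x0(model_output, sample, alpha_prod_t, prediction_type):
    """Return (pred_epsilon, pred_original_sample) for a scalar value.

    Relies on x_t = sqrt(alpha_prod_t) * x_0 + sqrt(1 - alpha_prod_t) * epsilon,
    so whichever quantity the model predicts, the other one can be derived.
    """
    sqrt_alpha = math.sqrt(alpha_prod_t)
    sqrt_one_minus_alpha = math.sqrt(1.0 - alpha_prod_t)

    if prediction_type == "epsilon":
        # The model predicts the noise; solve the relation for x_0.
        pred_epsilon = model_output
        pred_x0 = (sample - sqrt_one_minus_alpha * pred_epsilon) / sqrt_alpha
    elif prediction_type == "sample":
        # The model predicts x_0; solve the relation for the noise.
        pred_x0 = model_output
        pred_epsilon = (sample - sqrt_alpha * pred_x0) / sqrt_one_minus_alpha
    else:
        raise ValueError(f"unsupported prediction_type: {prediction_type!r}")
    return pred_epsilon, pred_x0


# Round trip: build x_t from a known x_0 and epsilon, then recover both.
x0, eps, alpha_prod_t = 0.5, -1.2, 0.7
x_t = math.sqrt(alpha_prod_t) * x0 + math.sqrt(1.0 - alpha_prod_t) * eps
from_eps = to_epsilon_and_x0(eps, x_t, alpha_prod_t, "epsilon")
from_sample = to_epsilon_and_x0(x0, x_t, alpha_prod_t, "sample")
```

Any later step that expects the predicted noise should use the derived `pred_epsilon` rather than the raw model output when the model predicts the sample.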

When the original model and the diffusers model are run separately, img2img produces different results with the same seed.

Adds the checkpoint merger pipeline based on the discussion at #877. Tested the checkpoint merger for the case with two checkpoints.
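
As a rough idea of what merging two checkpoints can look like, the sketch below assumes plain linear interpolation of matching parameters. The text above does not say which interpolation the pipeline uses, so this is an assumption, and `merge_state_dicts` is a hypothetical name. Lists of floats stand in for weight tensors.

```python
def merge_state_dicts(state_a, state_b, alpha=0.5):
    """Interpolate two checkpoints key by key: (1 - alpha) * a + alpha * b.

    Assumption for illustration: both checkpoints share parameter names
    and shapes, and merging is a simple weighted sum.
    """
    if state_a.keys() != state_b.keys():
        raise ValueError("checkpoints must share the same parameter names")

    merged = {}
    for name, weights_a in state_a.items():
        weights_b = state_b[name]
        if len(weights_a) != len(weights_b):
            raise ValueError(f"shape mismatch for parameter {name!r}")
        merged[name] = [
            (1.0 - alpha) * a + alpha * b for a, b in zip(weights_a, weights_b)
        ]
    return merged


checkpoint_a = {"w": [0.0, 2.0]}
checkpoint_b = {"w": [4.0, 6.0]}
merged = merge_state_dicts(checkpoint_a, checkpoint_b, alpha=0.25)
```

With `alpha=0.0` the result equals the first checkpoint, and with `alpha=1.0` it equals the second.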

### Describe the bug I get this message when I try to upscale an image: (/tmp/pip-install-r2dsw42o/xformers_584b8336664f4e63bb32e09d7dcaeac6/third_party/flash-attention/csrc/flash_attn/src/fmha_fprop_fp16_kernel.sm80.cu:68): invalid argument. Unfortunately, I can't find the culprit. Thank you!! ### Reproduction _No response_...