Donggeun Yu

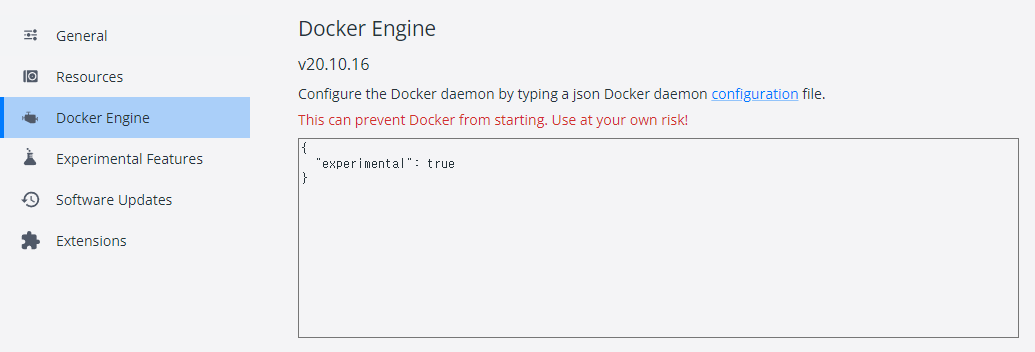

I'm working in a Windows containers environment on Docker Desktop. Is there any way to use nvidia-docker with the --platform=linux option in a Windows containers environment?

If I use your repo, can I divide the GPU as shown here and check the result with nvidia-smi? https://docs.run.ai/Researcher/Walkthroughs/walkthrough-fractions/

I used the following YAML, but I get an error saying the comment.md file cannot be found. ~~~ pluralith: runs-on: ubuntu-latest permissions: contents: read pull-requests: write env: working-directory: ./terraform steps: - name:...

### System Info - `transformers` version: 4.39.0 - Platform: Linux-5.4.0-81-generic-x86_64-with-glibc2.35 - Python version: 3.10.12 - Huggingface_hub version: 0.19.4 - Safetensors version: 0.4.2 - Accelerate version: 0.27.2 - Accelerate config: not...

# What does this PR do? Currently, the function `get_proposal_pos_embed` always returns float32. This PR modifies it to return the dtype of the proposal. ## Before submitting - [ ] This PR fixes a...

### System Info - `transformers` version: 4.39.0 - Platform: Linux-5.4.0-81-generic-x86_64-with-glibc2.35 - Python version: 3.10.12 - Huggingface_hub version: 0.23.4 - Safetensors version: 0.4.2 - Accelerate version: 0.31.0 - Accelerate config: not...

# What does this PR do? I got zero results from deformable attention during multi-GPU inference. However, this issue happens only in my environment. The DeviceGuard has been added to...

This PR is a rewrite of mmdet.models.dense_heads.GARPNHead.get_bboxes. Please let me know if there are any deficiencies.