fix: try to unlock a wait context cause program exit

请先用goimport格式化下代码,通过初步check @watermelon-brother

请先用goimport格式化下代码,通过初步check @watermelon-brother

已经格式化了

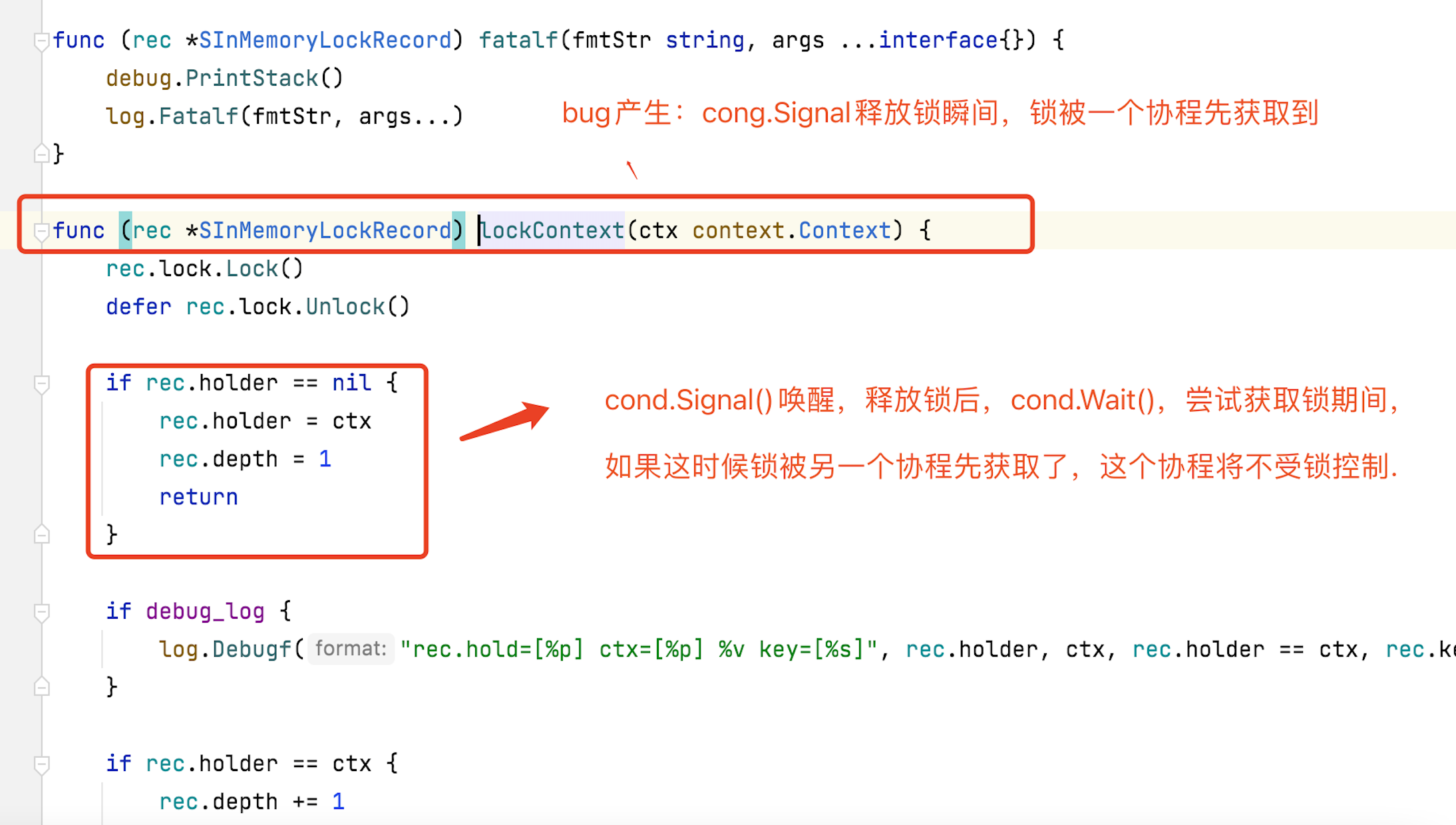

我感觉你说的这个问题似乎不存在?Signal()之后,有且只有一个waiter会被唤醒,感觉不存在多个被唤醒的情况。如果有新的goroute尝试获取锁,这个时候应该首先会被外层的 rec.lock.lock() 挡住?

看下面这段程序,你在思考下我说的问题吧 @swordqiu 模拟程序

package main

import (

"fmt"

"sync"

"time"

)

func main() {

mutex := sync.Mutex{}

// 创建唤醒锁

cond := sync.NewCond(&mutex)

goRoutine := func() {

for i := 0; i < 10; i++ {

go func(num int, cond *sync.Cond) {

cond.L.Lock()

if num < 3 {

//小于3的休眠,等待唤醒

cond.Wait()

}

fmt.Println("num", num, "time", time.Now().Second())

cond.L.Unlock()

}(i, cond)

}

}

goWaitLockRoutine := func() {

for i := 0; i < 10; i++ {

go func(num int, cond *sync.Cond) {

cond.L.Lock()

fmt.Println("我比cond.Wait()先抢到锁了")

cond.L.Unlock()

}(i, cond)

}

}

goRoutine()

time.Sleep(time.Second * 1)

cond.L.Lock()

// 唤醒一次

cond.Signal()

fmt.Println("发送信号给cond.Wait(),等会我在释放锁,cond.Wait()你要准备好获取锁了")

// 模拟锁未释放期间新协程到来被阻塞在cond.L.Lock()

goWaitLockRoutine()

cond.L.Unlock()

time.Sleep(time.Second * 1)

cond.L.Lock()

//唤醒剩下的所有

cond.Broadcast()

cond.L.Unlock()

time.Sleep(time.Second * 1)

}

输出

num 3 time 10

num 9 time 10

num 7 time 10

num 8 time 10

num 4 time 10

num 5 time 10

num 6 time 10

发送信号给cond.Wait(),等会我在释放锁,cond.Wait()你要准备好获取锁了

我比cond.Wait()先抢到锁了

我比cond.Wait()先抢到锁了

我比cond.Wait()先抢到锁了

我比cond.Wait()先抢到锁了

num 0 time 11

我比cond.Wait()先抢到锁了

我比cond.Wait()先抢到锁了

我比cond.Wait()先抢到锁了

我比cond.Wait()先抢到锁了

我比cond.Wait()先抢到锁了

我比cond.Wait()先抢到锁了

num 2 time 12

num 1 time 12

看下面这段程序,你在思考下我说的问题吧 @swordqiu 模拟程序

package main import ( "fmt" "sync" "time" ) func main() { mutex := sync.Mutex{} // 创建唤醒锁 cond := sync.NewCond(&mutex) goRoutine := func() { for i := 0; i < 10; i++ { go func(num int, cond *sync.Cond) { cond.L.Lock() if num < 3 { //小于3的休眠,等待唤醒 cond.Wait() } fmt.Println("num", num, "time", time.Now().Second()) cond.L.Unlock() }(i, cond) } } goWaitLockRoutine := func() { for i := 0; i < 10; i++ { go func(num int, cond *sync.Cond) { cond.L.Lock() fmt.Println("我比cond.Wait()先抢到锁了") cond.L.Unlock() }(i, cond) } } goRoutine() time.Sleep(time.Second * 1) cond.L.Lock() // 唤醒一次 cond.Signal() fmt.Println("发送信号给cond.Wait(),等会我在释放锁,cond.Wait()你要准备好获取锁了") // 模拟锁未释放期间新协程到来被阻塞在cond.L.Lock() goWaitLockRoutine() cond.L.Unlock() time.Sleep(time.Second * 1) cond.L.Lock() //唤醒剩下的所有 cond.Broadcast() cond.L.Unlock() time.Sleep(time.Second * 1) }输出

num 3 time 10 num 9 time 10 num 7 time 10 num 8 time 10 num 4 time 10 num 5 time 10 num 6 time 10 发送信号给cond.Wait(),等会我在释放锁,cond.Wait()你要准备好获取锁了 我比cond.Wait()先抢到锁了 我比cond.Wait()先抢到锁了 我比cond.Wait()先抢到锁了 我比cond.Wait()先抢到锁了 num 0 time 11 我比cond.Wait()先抢到锁了 我比cond.Wait()先抢到锁了 我比cond.Wait()先抢到锁了 我比cond.Wait()先抢到锁了 我比cond.Wait()先抢到锁了 我比cond.Wait()先抢到锁了 num 2 time 12 num 1 time 12

这种场景感觉在Cloudpods的框架里不存在?

lockman不是一个通用的锁机制,设计目的是防止多个worker(定义在appsrv/worker.go)同时访问同一个资源(lockObject)或者同一类资源(lockClass)。在Cloudpods框架内,如果要并发执行,需要用worker来run这个代码,不推荐直接go执行,因为这样无法控制并发度。

或者你给出Cloudpods代码里出现这个问题的代码片段,我们评估一下。

k8s事件触发的时候run函数中的lockman.LockClass @swordqiu https://github.com/yunionio/kubecomps/blob/master/pkg/kubeserver/client/cache.go

func (h eventHandler) run(f func(ctx context.Context, userCred mcclient.TokenCredential, cls manager.ICluster)) {

// cacheFactory must enable bidirectional sync

if !h.cacheFactory.bidirectionalSync {

return

}

adminCred := auth.AdminCredential()

ctx := context.Background()

now := time.Now()

ms := now.UnixMilli()

ctx = context.WithValue(ctx, "Time", ms)

lockman.LockClass(ctx, h.manager, db.GetLockClassKey(h.manager, adminCred))

defer lockman.ReleaseClass(ctx, h.manager, db.GetLockClassKey(h.manager, adminCred))

// eventWorkMan.Run(func() {

// f(ctx, adminCred, h.cluster)

// }, nil, nil)

f(ctx, adminCred, h.cluster)

}

感觉理论上你这种场景不会有问题,另外我加了一个测试用例

https://github.com/yunionio/cloudpods/pull/14570

验证不会出现panic的情况。你能把你出现panic的trace发出来看下吗?

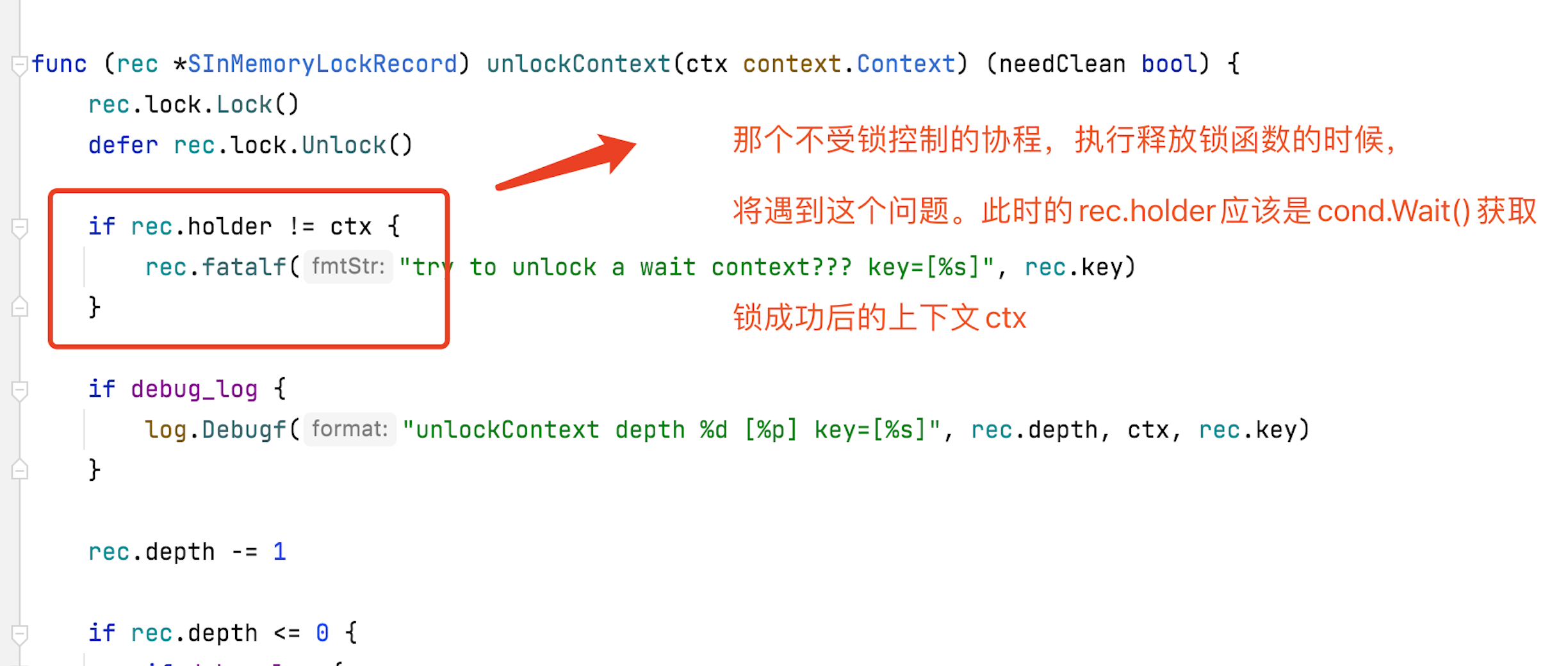

@swordqiu

June 30th 2022, 03:39:49.577 [fatal 220630 03:39:49 lockman.(*SInMemoryLockRecord).fatalf(inmemory.go:51)] try to unlock a wait context??? key=[k8s_node-]

June 30th 2022, 03:39:49.574 /usr/local/go/src/runtime/debug/stack.go:24 +0x65

June 30th 2022, 03:39:49.574 /go/opm/k8s/vendor/k8s.io/apimachinery/pkg/util/wait/wait.go:155 +0x67

June 30th 2022, 03:39:49.574 /usr/local/go/src/runtime/debug/stack.go:16 +0x19

June 30th 2022, 03:39:49.574 yunion.io/x/onecloud/pkg/cloudcommon/db/lockman.ReleaseClass({0x2f19948, 0xc02794eba0}, {0x7f85fba3b240, 0xc000146780}, {0x0, 0xc00ba67ce8})

June 30th 2022, 03:39:49.574 created by k8s.io/apimachinery/pkg/util/wait.(*Group).Start

June 30th 2022, 03:39:49.574 /go/opm/k8s/vendor/k8s.io/client-go/tools/cache/shared_informer.go:771 +0x6b

June 30th 2022, 03:39:49.574 yunion.io/x/onecloud/pkg/cloudcommon/db/lockman.(*SBaseLockManager).ReleaseClass(0xc000c96d50, {0x2f19948, 0xc02794eba0}, {0x7f85fba3b240, 0xc000146780}, {0x0, 0x2ef65b0})

June 30th 2022, 03:39:49.574 k8s.io/apimachinery/pkg/util/wait.(*Group).Start.func1()

June 30th 2022, 03:39:49.574 /go/opm/k8s/vendor/yunion.io/x/onecloud/pkg/cloudcommon/db/lockman/lockman.go:80 +0x56

June 30th 2022, 03:39:49.574 /go/opm/k8s/vendor/k8s.io/apimachinery/pkg/util/wait/wait.go:71 +0x88

June 30th 2022, 03:39:49.574 k8s.io/apimachinery/pkg/util/wait.BackoffUntil.func1(0x7f85fe591538)

June 30th 2022, 03:39:49.574 /go/opm/k8s/vendor/yunion.io/x/onecloud/pkg/cloudcommon/db/lockman/base.go:34 +0x71

June 30th 2022, 03:39:49.574 runtime/debug.Stack()

June 30th 2022, 03:39:49.574 /go/opm/k8s/vendor/k8s.io/apimachinery/pkg/util/wait/wait.go:90

June 30th 2022, 03:39:49.574 yunion.io/x/kubecomps/pkg/kubeserver/client.eventHandler.run({0xc0094cd980, {0x2f62970, 0xc000cc2300}, {0x7f85ff0fe9e8, 0xc000146780}}, 0xc00ba67ce8)

June 30th 2022, 03:39:49.574 k8s.io/client-go/tools/cache.(*processorListener).run(0xc0094b2680)

June 30th 2022, 03:39:49.574 goroutine 2234 [running]:

June 30th 2022, 03:39:49.574 /go/opm/k8s/vendor/k8s.io/apimachinery/pkg/util/wait/wait.go:156 +0xb6

June 30th 2022, 03:39:49.574 /go/opm/k8s/pkg/kubeserver/client/cache.go:264 +0x402

June 30th 2022, 03:39:49.574 k8s.io/apimachinery/pkg/util/wait.BackoffUntil(0xc0094d6f38, {0x2ec8420, 0xc009346060}, 0x1, 0xc009f63200)

June 30th 2022, 03:39:49.574 yunion.io/x/onecloud/pkg/cloudcommon/db/lockman.(*SInMemoryLockRecord).fatalf(0xc03d5e4b60, {0x2b5e869, 0x28}, {0xc00ba67a08, 0x1, 0x1})

June 30th 2022, 03:39:49.574 /go/opm/k8s/vendor/yunion.io/x/onecloud/pkg/cloudcommon/db/lockman/inmemory.go:50 +0x32

June 30th 2022, 03:39:49.574 runtime/debug.PrintStack()

June 30th 2022, 03:39:49.574 k8s.io/apimachinery/pkg/util/wait.JitterUntil(0xc000114000, 0x3b9aca00, 0x0, 0xc0, 0xc0094d6f88)

June 30th 2022, 03:39:49.574 k8s.io/apimachinery/pkg/util/wait.Until(...)

June 30th 2022, 03:39:49.574 /go/opm/k8s/vendor/k8s.io/apimachinery/pkg/util/wait/wait.go:73 +0x5a

June 30th 2022, 03:39:49.574 /go/opm/k8s/vendor/yunion.io/x/onecloud/pkg/cloudcommon/db/lockman/inmemory.go:193 +0xe7

June 30th 2022, 03:39:49.574 /go/opm/k8s/vendor/k8s.io/apimachinery/pkg/util/wait/wait.go:133 +0x89

June 30th 2022, 03:39:49.574 /go/opm/k8s/vendor/yunion.io/x/onecloud/pkg/cloudcommon/db/lockman/inmemory.go:118 +0x119

June 30th 2022, 03:39:49.574 yunion.io/x/onecloud/pkg/cloudcommon/db/lockman.(*SInMemoryLockRecord).unlockContext(0xc0279aa900, {0x2f19948, 0xc02794eba0})

June 30th 2022, 03:39:49.574 /go/opm/k8s/vendor/k8s.io/client-go/tools/cache/shared_informer.go:775 +0x127

June 30th 2022, 03:39:49.574 yunion.io/x/onecloud/pkg/cloudcommon/db/lockman.(*SInMemoryLockManager).UnlockKey(0xc000121278, {0x2f19948, 0xc02794eba0}, {0xc03f9bffd0, 0x9})

June 30th 2022, 03:39:49.574 k8s.io/client-go/tools/cache.(*processorListener).run.func1()

June 30th 2022, 03:39:49.574 yunion.io/x/kubecomps/pkg/kubeserver/client.eventHandler.OnUpdate({0xc0094cd980, {0x2f62970, 0xc000cc2300}, {0x7f85ff0fe9e8, 0xc000146780}}, {0x2a60ba0, 0xc03e137b00}, {0x2a60ba0, 0xc038b3ac00})

June 30th 2022, 03:39:49.574 /go/opm/k8s/pkg/kubeserver/client/cache.go:273 +0x10c

fixed yunionio/kubecomps#90 thanks