gcn

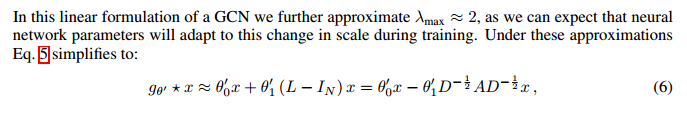

Why can lambda_max be approximated as 2?

I can't understand why λmax ≈ 2.

"neural network parameters will adapt to this change in scale during training": what does this sentence mean?

I think it is to keep a normalized scale across iterations.
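One possible way to read the "change in scale" remark, written out as a short derivation. This is a sketch, not a quote from the paper: it assumes the first-order (K = 1) Chebyshev filter with the normalized Laplacian $L = I_N - D^{-1/2} A D^{-1/2}$ and rescaled Laplacian $\tilde{L} = \frac{2}{\lambda_{max}} L - I_N$, and the names $\theta_0, \theta_1$ are just the two filter coefficients.

$$
\theta_0 x + \theta_1 \tilde{L} x
= \theta_0 x + \theta_1 \left( \tfrac{2}{\lambda_{max}} L - I_N \right) x
= (\theta_0 - \theta_1)\, x + \tfrac{2 \theta_1}{\lambda_{max}}\, L x
$$

$$
\lambda_{max} = 2 \;\Rightarrow\; \theta_0 x + \theta_1 (L - I_N)\, x = \theta_0 x - \theta_1 D^{-1/2} A D^{-1/2} x
$$

For any value of $\lambda_{max}$ the filter has the form $a\,x + b\,L x$ with free coefficients $a$ and $b$. Using 2 instead of the true $\lambda_{max}$ only rescales $b$ by a constant factor, and the learned $\theta$ can absorb that factor during training.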

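As noted in the paper, I_N + D^-1/2 A D^-1/2 has eigenvalues in the range [0, 2]. That follows because the eigenvalues of D^-1/2 A D^-1/2 lie in [-1, 1], and for the same reason the normalized Laplacian L = I_N - D^-1/2 A D^-1/2 also has eigenvalues in [0, 2]. So λmax is at most 2, and the paper uses this upper bound as the approximation.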
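The bound can be checked numerically on small graphs. The snippet below is a minimal sketch using NumPy. The helper names `normalized_laplacian` and `lambda_max` and the two toy graphs are made up for illustration and are not from the paper or its code. It assumes an undirected graph with no isolated nodes.

```python
import numpy as np


def normalized_laplacian(adj):
    """Return L = I - D^{-1/2} A D^{-1/2} for a symmetric adjacency matrix.

    Assumes every node has degree > 0 (no isolated nodes).
    """
    adj = np.asarray(adj, dtype=float)
    deg = adj.sum(axis=1)
    d_inv_sqrt = 1.0 / np.sqrt(deg)
    # Scale row i by d_i^{-1/2} and column j by d_j^{-1/2}.
    norm_adj = d_inv_sqrt[:, None] * adj * d_inv_sqrt[None, :]
    return np.eye(adj.shape[0]) - norm_adj


def lambda_max(adj):
    """Largest eigenvalue of the normalized Laplacian."""
    # eigvalsh returns eigenvalues of a symmetric matrix in ascending order.
    return float(np.linalg.eigvalsh(normalized_laplacian(adj))[-1])


# Toy graphs (illustrative only).
triangle = [[0, 1, 1],
            [1, 0, 1],
            [1, 1, 0]]
path = [[0, 1, 0],
        [1, 0, 1],
        [0, 1, 0]]

lam_triangle = lambda_max(triangle)
lam_path = lambda_max(path)
print(lam_triangle, lam_path)
```

On these two graphs λmax stays at or below 2. The 3-node path is bipartite, which is the case where the bound is reached, while the triangle stays below it.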