FEA Adding FuzzyJoin

This PR adds the new FuzzyJoin class that allows joining tables with dirty columns.

Co-authored-by: @LeoGrin

Awesome! Some additional things which could be useful:

- benchmark on datasets with typos, on abbreviations (@alexis-cvetkov was saying that we could use the is_abbrevation tag in YAGO for this), and maybe on many to many joins.

- add a parameter to control for recall / precision tradeoff. It would probably be hard to set so I'm not sure. An idea: print the worst matches so the user knows if he needs more precision.

- allow custom match dictionary

- (maybe) add a option to require 100% for certain columns (when we have an id column but it's missing in some cases)

• allow custom match dictionary • (maybe) add a option to require 100% for certain columns (when we have an id column but it's missing in some cases)

Let's do these two later, in a second PR.

Thanks for the active back and forth, this is great!

Thanks for this exciting PR. Two TODOs:

- Fix the tests

- Add an example. I have a hard time reviewing a PR without an example, because I cannot get a feeling on the user experience.

Hi @jovan-stojanovic I'm curious why you went for Countvectorizer (e.g. with character n-grams) instead of, for example, something like the SimilarityEncoder.

I guess the "fuzzy" part comes from matching (for example) char n-grams which might still give close distance if there are some misspellings in fields/columns - but dirty_cat also covers this use case with the SimilarityEncoder, no?

(background is that we're thinking about how to do deduplication and how to use fuzzyjoin for that, but for this using string distances would be cool)

Thanks!

Hi @jovan-stojanovic I'm curious why you went for Countvectorizer (e.g. with character n-grams) instead of, for example, something like the

SimilarityEncoder.I guess the "fuzzy" part comes from matching (for example) char n-grams which might still give close distance if there are some misspellings in fields/columns - but dirty_cat also covers this use case with the SimilarityEncoder, no?

(background is that we're thinking about how to do deduplication and how to use fuzzyjoin for that, but for this using string distances would be cool)

Thanks!

Hi @mjboos , thanks for your comment. Both the SimilarityEncoder and FuzzyJoin are based on CountVectorizer, so they are similar in that way. However, we did not use the SimilarityEncoder directly because it can be very slow, because you need to compute for every category distances between them. From the user perspective, this is important. This is why we used Nearest Neighbors as it is much faster.

Here, there is an option return_distance=True that may help for deduplication.

However, you are right that the next challenge would be to add a better precision metric so as to distinguish between good and bad matches. It links to this discussion.

Maybe applying the SimilarityEncoder to n neairest neighbors would be an option then? Certainly better than applying it directly to all categories. This would be added as an option with precision='sim_enc' and a threshold.

In any case, thanks, you can create an issue, to be discussed as an additional feature in future PR's!

Ok, thanks, working on fixing the tests and will add those changes in a few minutes.

Code coverage is a bit low

Can you analyze the report (button "details") and see what input configuration must be tested.

Can you analyze the report (button "details") and see what input configuration must be tested.

Think I implemented most of your remarks! Please take a look when you have time, we can put it for release 0.3.

Please take a look when you have time, we can put it for release 0.3.

I'm sorry, I don't want to rush this, or delay 0.3: we will not include this for 0.3.

Also, having it merged in the dev will enable to get feedback and modify things before releasing it to many users.

Hi, Some feedbacks in terms of api:

- The fact that when

return_distanceisTrue, the distances are returned in a separate variable bugs me a bit. In most of the use cases that I envision, I'll have to add that list into my joined table to link the matched record and their distance. - The values of the

match_typeparameter (nearest,radius) are not super clear to me. In both case we return the nearest neighbour so the wording might be a bit misleading. I also did not understand thatmatch_thresholdshould only be used withradiusmode. - The docstring description of

radiusmethod is not clear (when you talk aboutestimated precisionandprecision_threshold, this latter variable does not exist in the code btw).

Usage comments:

- It was not clear to me that it was a 1-1 join search.

- As you said,

match_thresholdseems to be quite hard to tune without trial and error. As the method is long to run, the process will be very long. - A too big value of

match_thresholdwill lead to an error that is not very clear:

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

<timed exec> in <module>

/data.design/analytics/dsshome/config/projects/PLAYGROUNDDU/lib/python/fuzzy_join.py in fuzzy_join(left_table, right_table, on, return_distance, analyzer, ngram_range, match_type, match_threshold, suffixes, keep)

234 ]

235 ),

--> 236 axis=1,

237 )

238

<__array_function__ internals> in append(*args, **kwargs)

/data.design/analytics/dsshome/code-envs/python/data-disco/lib64/python3.6/site-packages/numpy/lib/function_base.py in append(arr, values, axis)

4669 values = ravel(values)

4670 axis = arr.ndim-1

-> 4671 return concatenate((arr, values), axis=axis)

4672

4673

<__array_function__ internals> in concatenate(*args, **kwargs)

ValueError: all the input arrays must have same number of dimensions, but the array at index 0 has 2 dimension(s) and the array at index 1 has 1 dimension(s)

Code comments:

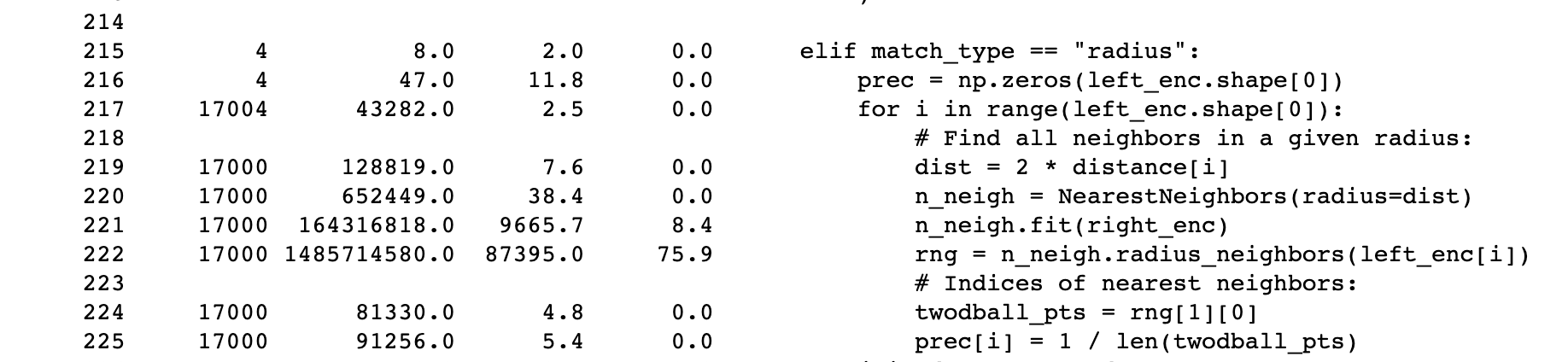

- To be verified, but my impression is that the

radiusargument is not used during the fit phase ofNearestNeighbor. If it's the case we can move those lines out of the for loop. - Anw according to my profiling, the main bottleneck is in the query phase with

n_neigh.radius_neighbors()that represents 75% of the running time.

One general comment on the API design here: we need to make sure that the behavior feels very similar to that of pandas.join https://pandas.pydata.org/docs/reference/api/pandas.DataFrame.join.html, so we should be using the same terminology, argument name, and return structure as much as possible.

I wouldn't bother with a full experimental mechanism: "from dirty_cat.experimental import enable_fuzzy_join": we are not a very mature and stable package, and people know that. I would just write in the docs that the feature is experimental and I would raise a warning when the function is call

I don't think that I would do a print_worse_match function for now. Rather do it in the example (and make sure that the return structure of fuzzy join makes this easy)

we need to make sure that the behavior feels very similar to that of pandas.join

With this in mind, maybe I would:

- rename "keep" to "how" (as in pandas)

- rename "match_type" to "how_fuzzy", taking as values "closest_match", and "within_radius"

- rename "match_threshold" to "fuzzy_radius"

These are of course very open suggestions and it would be good to gather the view of a variety of people on these choices/

You might new a "plt.tight_layout()" before each plt.show() in the example, as the xlabel and ylabel do not appear

We're having failing tests that seem unrelated to this PR: https://github.com/dirty-cat/dirty_cat/actions/runs/3175021096/jobs/5176907256

I don't like this: if we have a fragile test suite, we will be chasing more and more failing tests as we add features.

It seems that the test failures that we are witnessing are independent from the PR. @jovan-stojanovic : can you confirm?

Yes, this is something that happened for the first time yesterday. With #371, I will try and resolve the min_hash_encoder (and gap_encoder) test so that we don't fetch data online. It is definitely linked to the fact that we need to download data from the internet for some tests.. ( See #376 and #379 )

I tried using it, but the problem is that it will not work with our current minimal requirements. It requires the statsmodels package along with seaborn to work.

Let's add statsmodels to our doc dependencies

You didn't add the dependency to statsmodels in the right place, I fear: https://app.circleci.com/pipelines/github/dirty-cat/dirty_cat/1032/workflows/83c23fe0-dfaf-4b27-aab6-f6a9f3f87cae/jobs/2300

You need to add the dependency here: https://github.com/dirty-cat/dirty_cat/blob/master/build_tools/circle/build_doc.sh#L126

You should remove it to were you have added it previously.

Merging! This is a great addition.

Congrats!!