Yeo-Johnson Power Transformer gives Numpy warning

Describe the bug

When I use a power transformer with yeo-johnson method I get this warning in numpy:

../lib/python3.10/site-packages/numpy/core/_methods.py:235: RuntimeWarning: overflow encountered in multiply

Strangely I can't surpress this warning with filter.warnings()

Tl;dr: The eroor seems to appear when multiple instances of the transformer are called, in this example because n_jobs=2 is set in the preprocessor. But the bug also appears with n_jobs=1 when you call this preprocessor in a grid search for example.

Steps/Code to Reproduce

The following code snippet will raise a warning:

import numpy as np

from sklearn.preprocessing import PowerTransformer

rng = np.random.default_rng(0)

X = rng.exponential(size=(50,))

X -= X.max()

X = -X

X += 100

X = X.reshape(-1, 1)

method = "yeo-johnson"

transformer = PowerTransformer(method=method, standardize=False)

transformer.fit_transform(X)

Expected Results

No Warnings

Actual Results

/Users/glemaitre/mambaforge/envs/dev/lib/python3.8/site-packages/numpy/core/_methods.py:233: RuntimeWarning: overflow encountered in multiply

x = um.multiply(x, x, out=x)

Versions

System:

python: 3.8.12 | packaged by conda-forge | (default, Sep 16 2021, 01:38:21) [Clang 11.1.0 ]

executable: /Users/glemaitre/mambaforge/envs/dev/bin/python

machine: macOS-12.3.1-arm64-arm-64bit

Python dependencies:

sklearn: 1.2.dev0

pip: 21.3

setuptools: 58.2.0

numpy: 1.21.6

scipy: 1.8.0

Cython: 0.29.24

pandas: 1.4.2

matplotlib: 3.4.3

joblib: 1.0.1

threadpoolctl: 2.2.0

Built with OpenMP: True

threadpoolctl info:

user_api: blas

internal_api: openblas

prefix: libopenblas

filepath: /Users/glemaitre/mambaforge/envs/dev/lib/libopenblas_vortexp-r0.3.18.dylib

version: 0.3.18

threading_layer: openmp

architecture: VORTEX

num_threads: 8

user_api: openmp

internal_api: openmp

prefix: libomp

filepath: /Users/glemaitre/mambaforge/envs/dev/lib/libomp.dylib

version: None

num_threads: 8

Could you provide the data such that we can reproduce the use case and check what involve trigger this overflow

This is not a future version warning. If you can provide the dummy data so that we can look in it because the function is working as it was supposed.

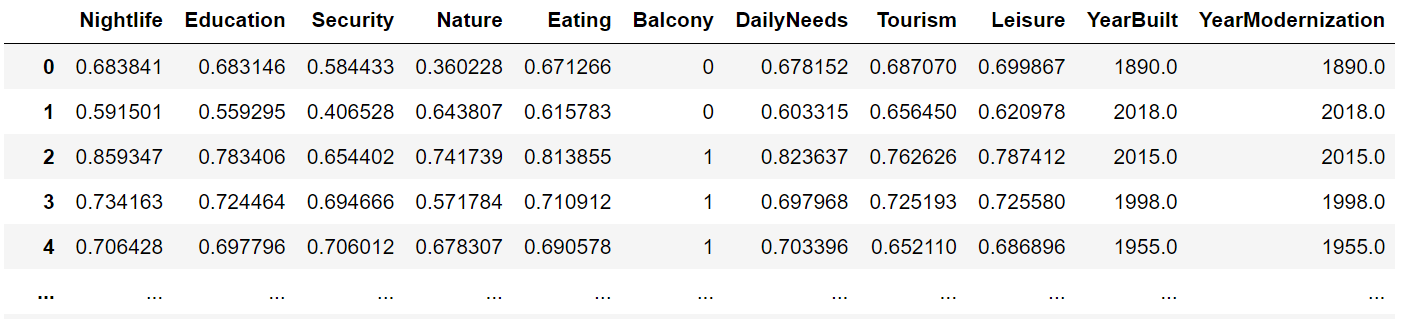

I can provide this screenshot if this is good?

Also for the dtypes:

Nightlife float64

Education float64

Security float64

Nature float64

Eating float64

Balcony int64

DailyNeeds float64

Tourism float64

Leisure float64

YearBuilt float64

YearModernization float64

dtype: object

I could also provide a small sample dataset if it is possible to upload it here?

You can upload it here. You can provide the smallest sample until it triggers the warning.

dataset.zip This is the minimum size where I could reproduce the error. Strangely enough it does not happen with smaller datasets, maybe this is linked to samplesize.

The problem seems to be within column "YearModernization".

The warning does not appear when I use the power transformer outside of the pipeline:

So this works:

pt = PowerTransformer(method="yeo-johnson")

pt.fit(pd.DataFrame(X_train["YearModernization"]))

But there is no parameter n_jobs in PowerTransformer, isn't it?

Sorry the parameter is in the preprocessor pipeline.

Also if you call this preprocessor with a gridsearch and even when preprocessor stays at n_jobs=1, the same error appears. So it my guess is that when multiple fits are called on this Transformer with cv=5 for example something breaks.

Could you provide the version of pandas used as well to be able to unpickle the dataset.

Basically the following:

In [1]: import sklearn

In [2]: sklearn.show_versions()

System: python: 3.10.4 | packaged by conda-forge | (main, Mar 24 2022, 17:38:57) [GCC 10.3.0] executable: /home/xxxxx/anaconda3/envs/MASTER/bin/python machine: Linux-4.15.0-154-generic-x86_64-with-glibc2.27

Python dependencies: sklearn: 1.2.dev0 pip: 22.0.4 setuptools: 62.1.0 numpy: 1.22.3 scipy: 1.8.0 Cython: 0.29.28 pandas: 1.4.2 matplotlib: 3.5.2 joblib: 1.1.0 threadpoolctl: 3.1.0

Built with OpenMP: True

threadpoolctl info: user_api: openmp internal_api: openmp prefix: libgomp filepath: /home/xxx/anaconda3/envs/MASTER/lib/python3.10/site-packages/scikit_learn.libs/libgomp-a34b3233.so.1.0.0 version: None num_threads: 56

user_api: blas

internal_api: openblas prefix: libopenblas filepath: /home/xxx/anaconda3/envs/MASTER/lib/libopenblasp-r0.3.20.so version: 0.3.20 threading_layer: pthreads architecture: Haswell num_threads: 56

user_api: openmp

internal_api: openmp prefix: libgomp filepath: /home/xxx/anaconda3/envs/MASTER/lib/libgomp.so.1.0.0 version: None num_threads: 56

This maybe explains why the warnings can not be turned off, with filter.warnings when the setting only applies to the main thread/process, but the problem is caused by some processes/threads which are created in parallel.

So the warning is raised by the following line:

https://github.com/scikit-learn/scikit-learn/blob/b0b8a39d8bb80611398e4c57895420d5cb1dfe09/sklearn/preprocessing/_data.py#L3244-L3246

At some point x_trans is equal to the following:

[1.50645674e+206 1.82014521e+206 2.06412322e+206 ... 3.00589049e+206

2.41473577e+206 7.49229704e+205]

and computing the variance will overflow.

We should probably prevent to compute the variance and directly return np.inf.

The problem is indeed trigger by YearModernisation. The distribution is the following:

Somehow, I feel that it could be linked to the following issue: https://github.com/scikit-learn/scikit-learn/issues/14959 The PR https://github.com/scikit-learn/scikit-learn/pull/20653 was maybe not enough to solve the problem.

ping @thomasjpfan @ogrisel @jeremiedbb that have a look at the original issue.

~~maybe we could make a "safe variance" function by using the fact that V(x) = xmax**2 V(x / xmax) ?~~

EDIT: nvm it will probably not be more stable...

The square will overflow :)

Can someone craft a minimal reproducer with a single column dataset, possibly syntactically generated?

But then this code would trigger the warning as well:

pt = PowerTransformer(method="yeo-johnson")

pt.fit(pd.DataFrame(X_train["YearModernization"]))

which it does not. Or am I wrong?

Can someone craft a minimal reproducer with a single column dataset, possibly syntactically generated?

Here is a minimal reproducer, but you have to use this dataset:

from joblib import load

import pandas as pd

from sklearn.preprocessing import PowerTransformer

data = load("X_train.joblib")

data = pd.DataFrame(data["YearModernization"])

pow_features = ["YearModernization"]

#Method 1 - Works fine

pt = PowerTransformer(method="yeo-johnson")

pt.fit(data)

print("This has worked without error")

#Method 2 - Error warning because n_jobs>1

power_transformer = Pipeline(steps=[('pow', PowerTransformer(method="yeo-johnson"))])

# Preprocessing the dataframe

preprocessor = ColumnTransformer(

transformers=

[

("pow", power_transformer, pow_features)

], n_jobs=-1

)

# Pipeline for fitting

preprocessor.fit(data)

print("This produces an error if n_jobs is set >= 1")

I could make a smaller reproducer with synthetic data that I added in the original discussion.

In addition, if we increase the shift toward positive values, the optimization will always return a log-likelihood of np.inf and thus return infinite values. Here the fact of using standardize=True will hide extremely large values after the transform.

I think that we should detect this problem and maybe return the original data when we cannot transform properly the dataset. Indeed, it seems that the exponential distribution is not well suited for this transformer.

I ran into this as well. Here is a minimal example that reproduces the problem:

import numpy as np

from sklearn.preprocessing import PowerTransformer

x = np.array([2003.0, 1950.0, 1997.0, 2000.0, 2009.0, 2009.0, 1980.0, 1999.0, 2007.0, 1991.0])

# [Issue #1]

# RuntimeWarning: overflow encountered in multiply from `x_trans_var = x_trans.var()`

PowerTransformer(method="yeo-johnson", standardize=True).fit_transform(x[:, np.newaxis])

# [Issue #2]

# RuntimeWarning: overflow encountered in power from `out[pos] = (np.power(x[pos] + 1, lmbda) - 1) / lmbda`

PowerTransformer(method="yeo-johnson", standardize=True).fit_transform(x[:5, np.newaxis])

I think there are two distinct problems to handle here:

- Make the expressions that use

np.powermore robust against overflow. - Avoid overflow in

x_trans.var()by regularizing the output's exponent. Without regularization, the output's exponent can blow up for certain inputs for very little gain in the loss function.

Here is a patch that addresses both of the above issues:

import numpy as np

from scipy import optimize

from sklearn.preprocessing import PowerTransformer as OriginalPowerTransformer

class PowerTransformer(OriginalPowerTransformer):

"""Apply a power transform featurewise to make data more Gaussian-like."""

def _yeo_johnson_inverse_transform(self, x, lmbda):

"""Return inverse-transformed input x following Yeo-Johnson inverse transform."""

x_inv = np.zeros_like(x)

pos = x >= 0

# when x >= 0

if abs(lmbda) < np.spacing(1.0):

x_inv[pos] = np.exp(x[pos]) - 1

else: # lmbda != 0

# x_inv[pos] = np.power(x[pos] * lmbda + 1, 1 / lmbda) - 1 # Original line

x_inv[pos] = np.exp(np.log1p(x[pos] * lmbda) / lmbda) - 1

# when x < 0

if abs(lmbda - 2) > np.spacing(1.0):

# x_inv[~pos] = 1 - np.power(-(2 - lmbda) * x[~pos] + 1, 1 / (2 - lmbda)) # Original line

x_inv[~pos] = 1 - np.exp(np.log1p(-(2 - lmbda) * x[~pos]) / (2 - lmbda))

else: # lmbda == 2

x_inv[~pos] = 1 - np.exp(-x[~pos])

return x_inv

def _yeo_johnson_transform(self, x, lmbda):

"""Return transformed input x following Yeo-Johnson transform."""

out = np.zeros_like(x)

pos = x >= 0 # binary mask

# when x >= 0

if abs(lmbda) < np.spacing(1.0):

out[pos] = np.log1p(x[pos])

else: # lmbda != 0

# out[pos] = (np.power(x[pos] + 1, lmbda) - 1) / lmbda # Original line

out[pos] = (np.exp(np.log1p(x[pos]) * lmbda) - 1) / lmbda

# when x < 0

if abs(lmbda - 2) > np.spacing(1.0):

# out[~pos] = -(np.power(-x[~pos] + 1, 2 - lmbda) - 1) / (2 - lmbda) # Original line

out[~pos] = -(np.exp(np.log1p(-x[~pos]) * (2 - lmbda)) - 1) / (2 - lmbda)

else: # lmbda == 2

out[~pos] = -np.log1p(-x[~pos])

return out

def _yeo_johnson_optimize(self, x):

"""Find and return optimal lambda parameter of the Yeo-Johnson transform."""

x_tiny = np.finfo(np.float64).tiny

def _neg_log_likelihood(lmbda):

"""Compute the negative log likelihood of the observed data x."""

x_trans = self._yeo_johnson_transform(x, lmbda)

x_trans_var = x_trans.var()

# Reject transformed data that would raise a RuntimeWarning in np.log

if x_trans_var < x_tiny:

return np.inf

log_var = np.log(x_trans_var)

# loglike = -n_samples / 2 * log_var # Original line

# loglike += (lmbda - 1) * (np.sign(x) * np.log1p(np.abs(x))).sum() # Original line

loglike = -0.5 * log_var

loglike += (lmbda - 1) * (np.sign(x) * np.log1p(np.abs(x))).mean()

loglike -= 0.01 * np.mean(np.abs(np.log(np.abs(x_trans_var[x_trans_var != 0])))) # Regularize the exponent

return -loglike

# the computation of lambda is influenced by NaNs so we need to

# get rid of them

x = x[~np.isnan(x)]

# choosing bracket -2, 2 like for boxcox

lmbda = cast(float, optimize.brent(_neg_log_likelihood, brack=(-2, 2)))

return lmbda

Thanks @lsorber. Would you be interested in opening a PR with non regression tests based on https://github.com/scikit-learn/scikit-learn/issues/23319#issuecomment-1464933635?

@ogrisel Here's a PR that addresses both types of overflow and includes a non-regression test: https://github.com/scikit-learn/scikit-learn/pull/26188

Is there an ETA for the fix?

I'm hitting this problem on a completely different dataset. What's worse, I cannot seem to find any way to turn off the warnings in my Jupyter notebook. I've tried warnings, logging, the %%capture directive - this message seems to bypass everything. :( And with cross-validation, the message gets spammed over many screens of text.

This bypass of warning filtering might be a separate bug on its own, I'm not sure yet.

EDIT: X dataframe that triggers the bug (one column needs to be imputed with the mean value):

@FlorinAndrei Current status: I created a PR that fixed the issue upstream in SciPy (https://github.com/scipy/scipy/issues/18389), which is nearing completion. After that, I'll create a PR for sklearn that uses SciPy's improved implementation.

#26308 together with the upcoming scipy 1.12 will resolve this issue.