ENH: add MarginalGP model and fixes in covariance functions API

- [x] Add `MarginalGP` model.
- [x] Add tests
- [x] Add documentation suite
- [x] Add notebook

I have completed my work on the model; tests and a notebook are still left. Could one of you take a look at this, please? @fonnesbeck @aloctavodia

All the doctests are passing but I cannot get NUTS to run on this, unfortunately.

What's the issue with NUTS?

Codecov Report

Merging #309 into master will decrease coverage by 0.50%. The diff coverage is 83.09%.

Coverage diff:

| | master | #309 | +/- |
|---|---|---|---|
| Coverage | 90.89% | 90.38% | -0.51% |
| Files | 36 | 36 | |
| Lines | 2822 | 2903 | +81 |
| Hits | 2565 | 2624 | +59 |
| Misses | 257 | 279 | +22 |

| Impacted Files | Coverage Δ | |
|---|---|---|
| pymc4/distributions/multivariate.py | 100.00% <ø> (ø) | |
| pymc4/gp/_kernel.py | 79.82% <ø> (ø) | |
| pymc4/gp/gp.py | 79.14% <76.53%> (-6.37%) | :arrow_down: |
| pymc4/gp/cov.py | 90.33% <97.36%> (+0.54%) | :arrow_up: |
| pymc4/gp/util.py | 100.00% <100.00%> (ø) | |


@fonnesbeck It is giving me this error no matter what the input is:

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "C:\Users\tirth\Desktop\INTERESTS\PyMC4\pymc4\inference\sampling.py", line 172, in sample

results, sample_stats = run_chains(init_state, step_size)

File "C:\Users\tirth\Desktop\INTERESTS\PyMC4\env\lib\site-packages\tensorflow\python\eager\def_function.py", line 780, in __call__

result = self._call(*args, **kwds)

File "C:\Users\tirth\Desktop\INTERESTS\PyMC4\env\lib\site-packages\tensorflow\python\eager\def_function.py", line 846, in _call

return self._concrete_stateful_fn._filtered_call(canon_args, canon_kwds) # pylint: disable=protected-access

File "C:\Users\tirth\Desktop\INTERESTS\PyMC4\env\lib\site-packages\tensorflow\python\eager\function.py", line 1842, in _filtered_call

return self._call_flat(

File "C:\Users\tirth\Desktop\INTERESTS\PyMC4\env\lib\site-packages\tensorflow\python\eager\function.py", line 1922, in _call_flat

return self._build_call_outputs(self._inference_function.call(

File "C:\Users\tirth\Desktop\INTERESTS\PyMC4\env\lib\site-packages\tensorflow\python\eager\function.py", line 545, in call

outputs = execute.execute(

File "C:\Users\tirth\Desktop\INTERESTS\PyMC4\env\lib\site-packages\tensorflow\python\eager\execute.py", line 59, in quick_execute

tensors = pywrap_tfe.TFE_Py_Execute(ctx._handle, device_name, op_name,

tensorflow.python.framework.errors_impl.InvalidArgumentError: Input matrix is not invertible.

[[{{node mcmc_sample_chain/trace_scan/while/body/_171/mcmc_sample_chain/trace_scan/while/smart_for_loop/while/body/_565/mcmc_sample_chain/trace_scan/while/smart_for_loop/while/dual_averaging_step_size_adaptation___init__/_one_step/NoUTurnSampler/.one_step/while/body/_885/mcmc_sample_chain/trace_scan/while/smart_for_loop/while/dual_averaging_step_size_adaptation___init__/_one_step/NoUTurnSampler/.one_step/while/loop_tree_doubling/build_sub_tree/while/body/_1167/mcmc_sample_chain/trace_scan/while/smart_for_loop/while/dual_averaging_step_size_adaptation___init__/_one_step/NoUTurnSampler/.one_step/while/loop_tree_doubling/build_sub_tree/while/loop_build_sub_tree/leapfrog_integrate/while/body/_1449/mcmc_sample_chain/trace_scan/while/smart_for_loop/while/dual_averaging_step_size_adaptation___init__/_one_step/NoUTurnSampler/.one_step/while/loop_tree_doubling/build_sub_tree/while/loop_build_sub_tree/leapfrog_integrate/while/leapfrog_integrate_one_step/maybe_call_fn_and_grads/value_and_gradients/value_and_gradient/gradients/mcmc_sample_chain/trace_scan/while/smart_for_loop/while/dual_averaging_step_size_adaptation___init__/_one_step/NoUTurnSampler/.one_step/while/loop_tree_doubling/build_sub_tree/while/loop_build_sub_tree/leapfrog_integrate/while/leapfrog_integrate_one_step/maybe_call_fn_and_grads/value_and_gradients/value_and_gradient/loop_body/PartitionedCall/pfor/PartitionedCall_grad/PartitionedCall/gradients/Cholesky/pfor/Cholesky_grad/triangular_solve/MatrixTriangularSolve}}]] [Op:__inference_run_chains_27917]

Function call stack:

run_chains

Never seen this before. But I will try to debug it

Turns out this happened because of a poor choice of priors and kernels. I solved it by adding a white noise kernel, so NUTS now works (although I have to add a lot of noise for the float32 data type, which is not ideal).

Marking this ready for reviews. Will add tests and notebook asap

Yeah, the TFP Gaussian processes have default jitter, which can be customized as an argument to the constructor. I wonder if we should do similarly.

I have already added the jitter argument that defaults to 1e-4 for float32 and 1e-6 for float64 datatype and works in a similar way as TFP.
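As a rough illustration of what such a jitter argument does, here is a minimal NumPy sketch. It is not the PyMC4 or TFP implementation: the helper names `default_jitter` and `add_jitter` are hypothetical, and only the two default values (1e-4 for float32, 1e-6 for float64) come from the comment above.

```python
import numpy as np


def default_jitter(dtype):
    """Hypothetical helper returning the dtype-dependent defaults quoted above."""
    return 1e-4 if np.dtype(dtype) == np.float32 else 1e-6


def add_jitter(cov, jitter=None):
    """Return cov + jitter * I, keeping the dtype of cov."""
    if jitter is None:
        jitter = default_jitter(cov.dtype)
    n = cov.shape[-1]
    return cov + jitter * np.eye(n, dtype=cov.dtype)


# A rank-one covariance matrix: positive semi-definite but singular,
# so a Cholesky factorization needs a small diagonal term to go through.
cov32 = np.ones((3, 3), dtype=np.float32)
cov64 = np.ones((3, 3), dtype=np.float64)

chol32 = np.linalg.cholesky(add_jitter(cov32))
chol64 = np.linalg.cholesky(add_jitter(cov64))
```

The larger float32 default reflects the coarser precision of that dtype: a diagonal term that is comfortably above rounding error in float64 can be too small to matter in float32.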

On Sat, Aug 1, 2020, 1:41 AM Chris Fonnesbeck wrote:

> Yeah, the TFP Gaussian processes have default jitter, which can be customized as an argument to the constructor. I wonder if we should do similarly.


I have added the notebook, but inference is really difficult. I tried both full-rank ADVI and NUTS but still cannot get good results. I will play around with it more. Meanwhile, reviews on the notebook are welcome @AlexAndorra @bwengals (there may be loads of typos since I did this in a hurry 😅).

I never liked the name MarginalGP as it's not descriptive from the user's perspective. LatentGP is better in this way because it tells me to use this for latent GPs. But what the hell is a marginal GP? How about ObservedGP?

It's because it is based on the marginal likelihood. I'd hesitate to use Observed because it is so closely associated with data in PyMC. You could make an argument for just GP, since it is the canonical form of the Gaussian process.

@fonnesbeck Well, I understand that, but I don't think it makes sense from the perspective of a user who doesn't know the underlying math of GPs. I'm not married to Observed, but I'd prefer anything that is more intuitive and doesn't require knowing what a marginal likelihood is and why it's being used here.

@twiecki I see your general point about hiding unnecessary technical jargon from the user, but here it's pretty important that the user recognizes that the marginal likelihood is required, because in the next line they will be calling `marginal_likelihood`. GPs are slightly more advanced than parametric PyMC3 models, and part of that is knowing when the marginal likelihood is appropriate and when it is not. We just need to make sure our docs and examples provide this baseline knowledge (I think they mostly do, but they could use a review by a non-expert user).
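For readers unfamiliar with the term, the quantity in question is the Gaussian log density of the observations once the latent function has been integrated out: log N(y | 0, K + σ²I) for a zero-mean GP with Gaussian noise. The following is a minimal NumPy sketch of that computation, not the code in this PR; the kernel choice and the function names `sq_exp_kernel` and `gp_log_marginal_likelihood` are assumptions made for illustration.

```python
import numpy as np


def sq_exp_kernel(x1, x2, lengthscale=1.0, amplitude=1.0):
    """Squared-exponential kernel on 1-D inputs (illustrative choice)."""
    d = x1[:, None] - x2[None, :]
    return amplitude**2 * np.exp(-0.5 * (d / lengthscale) ** 2)


def gp_log_marginal_likelihood(x, y, noise_sigma, lengthscale=1.0, amplitude=1.0):
    """log N(y | 0, K + sigma^2 I), computed through a Cholesky factor."""
    n = x.shape[0]
    cov = sq_exp_kernel(x, x, lengthscale, amplitude) + noise_sigma**2 * np.eye(n)
    chol = np.linalg.cholesky(cov)
    # alpha = cov^{-1} y, obtained from two solves against the Cholesky factor.
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y))
    # 0.5 * log|cov| equals the sum of the log-diagonal of the Cholesky factor.
    half_logdet = np.sum(np.log(np.diag(chol)))
    return -0.5 * y @ alpha - half_logdet - 0.5 * n * np.log(2.0 * np.pi)


x = np.linspace(0.0, 1.0, 5)
y = np.sin(2.0 * np.pi * x)
lml = gp_log_marginal_likelihood(x, y, noise_sigma=0.1)
```

Because the latent function never appears as a sampled variable, only the kernel hyperparameters and the noise scale remain to be inferred, which is what distinguishes this form from the latent GP.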

Running fit fails for me locally:

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

in

----> 1 advifit = pm.fit(model, method="fullrank_advi")

~/repos/pymc4/pymc4/variational/approximations.py in fit(model, method, num_steps, sample_size, random_seed, optimizer, **kwargs)

207 # Here we assume that `model` parameter is provided by the user.

208 try:

--> 209 inference = _select[method.lower()](model, random_seed)

210 except KeyError:

211 raise KeyError(

~/repos/pymc4/pymc4/variational/approximations.py in __init__(self, model, random_seed)

34 self.model = model

35 self._seed = random_seed

---> 36 self.state, self.deterministic_names = initialize_sampling_state(model)

37 if not self.state.all_unobserved_values:

38 raise ValueError(

~/repos/pymc4/pymc4/inference/utils.py in initialize_sampling_state(model, observed, state)

25 The list of names of the model's deterministics

26 """

---> 27 _, state = flow.evaluate_meta_model(model, observed=observed, state=state)

28 deterministic_names = list(state.deterministics)

29

~/repos/pymc4/pymc4/flow/executor.py in evaluate_model(self, model, state, _validate_state, values, observed, sample_shape)

459 try:

460 with model_info["scope"]:

--> 461 dist = control_flow.send(return_value)

462 if not isinstance(dist, MODEL_POTENTIAL_AND_DETERMINISTIC_TYPES):

463 # prohibit any unknown type

~/repos/pymc4/pymc4/coroutine_model.py in control_flow(self)

215 def control_flow(self):

216 """Iterate over the random variables in the model."""

--> 217 return (yield from self.genfn())

in MarginalGPModel(X, y)

4 η = yield pm.HalfCauchy("η", np.array(5.))

5

----> 6 cov = η**2 * Matern52(ℓ)

7 gp = MarginalGP(cov_fn=cov)

8

~/anaconda3/envs/pymc4/lib/python3.7/site-packages/tensorflow/python/ops/math_ops.py in binary_op_wrapper(x, y)

986 try:

987 y = ops.convert_to_tensor_v2(

--> 988 y, dtype_hint=x.dtype.base_dtype, name="y")

989 except TypeError:

990 # If the RHS is not a tensor, it might be a tensor aware object

~/anaconda3/envs/pymc4/lib/python3.7/site-packages/tensorflow/python/framework/ops.py in convert_to_tensor_v2(value, dtype, dtype_hint, name)

1281 name=name,

1282 preferred_dtype=dtype_hint,

-> 1283 as_ref=False)

1284

1285

~/anaconda3/envs/pymc4/lib/python3.7/site-packages/tensorflow/python/framework/ops.py in convert_to_tensor(value, dtype, name, as_ref, preferred_dtype, dtype_hint, ctx, accepted_result_types)

1339

1340 if ret is None:

-> 1341 ret = conversion_func(value, dtype=dtype, name=name, as_ref=as_ref)

1342

1343 if ret is NotImplemented:

~/anaconda3/envs/pymc4/lib/python3.7/site-packages/tensorflow/python/framework/constant_op.py in _constant_tensor_conversion_function(v, dtype, name, as_ref)

315 as_ref=False):

316 _ = as_ref

--> 317 return constant(v, dtype=dtype, name=name)

318

319

~/anaconda3/envs/pymc4/lib/python3.7/site-packages/tensorflow/python/framework/constant_op.py in constant(value, dtype, shape, name)

256 """

257 return _constant_impl(value, dtype, shape, name, verify_shape=False,

--> 258 allow_broadcast=True)

259

260

~/anaconda3/envs/pymc4/lib/python3.7/site-packages/tensorflow/python/framework/constant_op.py in _constant_impl(value, dtype, shape, name, verify_shape, allow_broadcast)

264 ctx = context.context()

265 if ctx.executing_eagerly():

--> 266 t = convert_to_eager_tensor(value, ctx, dtype)

267 if shape is None:

268 return t

~/anaconda3/envs/pymc4/lib/python3.7/site-packages/tensorflow/python/framework/constant_op.py in convert_to_eager_tensor(value, ctx, dtype)

94 dtype = dtypes.as_dtype(dtype).as_datatype_enum

95 ctx.ensure_initialized()

---> 96 return ops.EagerTensor(value, ctx.device_name, dtype)

97

98

ValueError: Attempt to convert a value () with an unsupported type () to a Tensor.

Does changing the line η**2 * Matern52(ℓ) to Matern52(ℓ, η) help? I am not able to reproduce this, though. It may be because of a different TensorFlow version: I developed this on tensorflow==2.4.0-dev20200705 and tensorflow_probability==0.11.0-dev20200705. It seems like () is sent to the η variable by the executor, but I don't know why it would do that...

Maybe related to tensorflow/tensorflow#30120 and tensorflow/tensorflow#33154. Mostly seems like a compatibility issue

Got it running by adding the amplitude as an argument, as you suggested.
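One plausible reading of the traceback above, offered as an assumption rather than a confirmed diagnosis: the frames from `binary_op_wrapper` show an `except TypeError` guard, while the error actually raised is a `ValueError`. If the left operand's multiplication raises instead of returning `NotImplemented`, Python never gets to try the right operand's `__rmul__`. The pure-Python sketch below reproduces that mechanism with hypothetical stand-in classes (`Kernel`, `StrictTensor`, `LenientTensor`); none of them are TensorFlow or PyMC4 types.

```python
class Kernel:
    """Hypothetical stand-in for a covariance function object."""

    def __init__(self, scale=1.0):
        self.scale = scale

    def __mul__(self, other):
        return Kernel(self.scale * float(other))

    # Scalar * Kernel is handled the same way as Kernel * scalar.
    __rmul__ = __mul__


class StrictTensor:
    """Hypothetical left operand whose __mul__ raises on unknown types."""

    def __init__(self, value):
        self.value = value

    def __float__(self):
        return float(self.value)

    def __mul__(self, other):
        if not isinstance(other, (int, float, StrictTensor)):
            # Raising here (instead of returning NotImplemented) means
            # Python never falls back to other.__rmul__.
            raise ValueError("unsupported type")
        return StrictTensor(self.value * float(other))


class LenientTensor(StrictTensor):
    """Same as StrictTensor, but defers to the right operand."""

    def __mul__(self, other):
        if not isinstance(other, (int, float, StrictTensor)):
            return NotImplemented
        return LenientTensor(self.value * float(other))


eta = StrictTensor(2.0)
try:
    eta * Kernel()
    strict_failed = False
except ValueError:
    strict_failed = True

scaled = LenientTensor(2.0) * Kernel()  # falls back to Kernel.__rmul__
right = Kernel() * eta                  # kernel on the left always works
```

Under this reading, passing the amplitude as a constructor argument sidesteps the problem because no tensor-side operator is involved at all.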

I cannot get a good fit for this model, either using mean-field or full-rank ADVI. The fit wants to make the noise parameter very large, which results in the poor fit. Even constraining sigma to be pretty small does not work; it eventually breaks when you make it too small, as it is no longer able to invert the matrix.

@fonnesbeck That's the problem I am facing too!! Is there a way to create variables inside the function decorated with pm.model though? I think tensorflow probability doesn't train any variable as they are not instances of tf.Variable leading to low quality inference. I may be wrong as I don't know the specifics. Any idea @lucianopaz @Sayam753 @ferrine?

When I put variables in the model, pm.sample and pm.fit give me the following error:

ValueError: tf.function-decorated function tried to create variables on non-first call.

Full traceback

ValueError Traceback (most recent call last)

<ipython-input-8-1b50fd0e27e1> in <module>()

24 trace_fn=trace_fn,

25 optimizer=opt,

---> 26 sample_size=1

27 )

28

/content/pymc4/pymc4/variational/approximations.py in fit(model, method, num_steps, sample_size, random_seed, optimizer, **kwargs)

243 return losses

244

--> 245 return ADVIFit(inference, run_approximation())

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/def_function.py in __call__(self, *args, **kwds)

794 else:

795 compiler = "nonXla"

--> 796 result = self._call(*args, **kwds)

797

798 new_tracing_count = self._get_tracing_count()

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/def_function.py in _call(self, *args, **kwds)

837 # This is the first call of __call__, so we have to initialize.

838 initializers = []

--> 839 self._initialize(args, kwds, add_initializers_to=initializers)

840 finally:

841 # At this point we know that the initialization is complete (or less

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/def_function.py in _initialize(self, args, kwds, add_initializers_to)

710 self._concrete_stateful_fn = (

711 self._stateful_fn._get_concrete_function_internal_garbage_collected( # pylint: disable=protected-access

--> 712 *args, **kwds))

713

714 def invalid_creator_scope(*unused_args, **unused_kwds):

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/function.py in _get_concrete_function_internal_garbage_collected(self, *args, **kwargs)

2949 args, kwargs = None, None

2950 with self._lock:

-> 2951 graph_function, _, _ = self._maybe_define_function(args, kwargs)

2952 return graph_function

2953

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/function.py in _maybe_define_function(self, args, kwargs)

3320

3321 self._function_cache.missed.add(call_context_key)

-> 3322 graph_function = self._create_graph_function(args, kwargs)

3323 self._function_cache.primary[cache_key] = graph_function

3324

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/function.py in _create_graph_function(self, args, kwargs, override_flat_arg_shapes)

3182 arg_names=arg_names,

3183 override_flat_arg_shapes=override_flat_arg_shapes,

-> 3184 capture_by_value=self._capture_by_value),

3185 self._function_attributes,

3186 function_spec=self.function_spec,

/usr/local/lib/python3.6/dist-packages/tensorflow/python/framework/func_graph.py in func_graph_from_py_func(name, python_func, args, kwargs, signature, func_graph, autograph, autograph_options, add_control_dependencies, arg_names, op_return_value, collections, capture_by_value, override_flat_arg_shapes)

984 _, original_func = tf_decorator.unwrap(python_func)

985

--> 986 func_outputs = python_func(*func_args, **func_kwargs)

987

988 # invariant: `func_outputs` contains only Tensors, CompositeTensors,

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/def_function.py in wrapped_fn(*args, **kwds)

612 # __wrapped__ allows AutoGraph to swap in a converted function. We give

613 # the function a weak reference to itself to avoid a reference cycle.

--> 614 return weak_wrapped_fn().__wrapped__(*args, **kwds)

615 weak_wrapped_fn = weakref.ref(wrapped_fn)

616

/content/pymc4/pymc4/variational/approximations.py in run_approximation()

239 seed=random_seed,

240 optimizer=opt,

--> 241 **kwargs,

242 )

243 return losses

/usr/local/lib/python3.6/dist-packages/tensorflow_probability/python/vi/optimization.py in fit_surrogate_posterior(target_log_prob_fn, surrogate_posterior, optimizer, num_steps, convergence_criterion, trace_fn, variational_loss_fn, sample_size, trainable_variables, seed, name)

299 trace_fn=trace_fn,

300 trainable_variables=trainable_variables,

--> 301 name=name)

/usr/local/lib/python3.6/dist-packages/tensorflow_probability/python/math/minimize.py in minimize(loss_fn, num_steps, optimizer, convergence_criterion, batch_convergence_reduce_fn, trainable_variables, trace_fn, return_full_length_trace, name)

335 loss_fn=loss_fn, optimizer=optimizer,

336 trainable_variables=trainable_variables)

--> 337 initial_loss, initial_grads, initial_parameters = optimizer_step_fn()

338 has_converged = None

339 initial_convergence_criterion_state = None

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/def_function.py in __call__(self, *args, **kwds)

794 else:

795 compiler = "nonXla"

--> 796 result = self._call(*args, **kwds)

797

798 new_tracing_count = self._get_tracing_count()

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/def_function.py in _call(self, *args, **kwds)

837 # This is the first call of __call__, so we have to initialize.

838 initializers = []

--> 839 self._initialize(args, kwds, add_initializers_to=initializers)

840 finally:

841 # At this point we know that the initialization is complete (or less

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/def_function.py in _initialize(self, args, kwds, add_initializers_to)

710 self._concrete_stateful_fn = (

711 self._stateful_fn._get_concrete_function_internal_garbage_collected( # pylint: disable=protected-access

--> 712 *args, **kwds))

713

714 def invalid_creator_scope(*unused_args, **unused_kwds):

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/function.py in _get_concrete_function_internal_garbage_collected(self, *args, **kwargs)

2949 args, kwargs = None, None

2950 with self._lock:

-> 2951 graph_function, _, _ = self._maybe_define_function(args, kwargs)

2952 return graph_function

2953

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/function.py in _maybe_define_function(self, args, kwargs)

3320

3321 self._function_cache.missed.add(call_context_key)

-> 3322 graph_function = self._create_graph_function(args, kwargs)

3323 self._function_cache.primary[cache_key] = graph_function

3324

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/function.py in _create_graph_function(self, args, kwargs, override_flat_arg_shapes)

3182 arg_names=arg_names,

3183 override_flat_arg_shapes=override_flat_arg_shapes,

-> 3184 capture_by_value=self._capture_by_value),

3185 self._function_attributes,

3186 function_spec=self.function_spec,

/usr/local/lib/python3.6/dist-packages/tensorflow/python/framework/func_graph.py in func_graph_from_py_func(name, python_func, args, kwargs, signature, func_graph, autograph, autograph_options, add_control_dependencies, arg_names, op_return_value, collections, capture_by_value, override_flat_arg_shapes)

984 _, original_func = tf_decorator.unwrap(python_func)

985

--> 986 func_outputs = python_func(*func_args, **func_kwargs)

987

988 # invariant: `func_outputs` contains only Tensors, CompositeTensors,

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/def_function.py in wrapped_fn(*args, **kwds)

612 # __wrapped__ allows AutoGraph to swap in a converted function. We give

613 # the function a weak reference to itself to avoid a reference cycle.

--> 614 return weak_wrapped_fn().__wrapped__(*args, **kwds)

615 weak_wrapped_fn = weakref.ref(wrapped_fn)

616

/usr/local/lib/python3.6/dist-packages/tensorflow_probability/python/math/minimize.py in optimizer_step()

100 for v in trainable_variables or []:

101 tape.watch(v)

--> 102 loss = loss_fn()

103 watched_variables = tape.watched_variables()

104 grads = tape.gradient(loss, watched_variables)

/usr/local/lib/python3.6/dist-packages/tensorflow_probability/python/vi/optimization.py in complete_variational_loss_fn()

291 surrogate_posterior,

292 sample_size=sample_size,

--> 293 seed=seed)

294

295 return tfp_math.minimize(complete_variational_loss_fn,

/usr/local/lib/python3.6/dist-packages/tensorflow_probability/python/vi/csiszar_divergence.py in monte_carlo_variational_loss(target_log_prob_fn, surrogate_posterior, sample_size, discrepancy_fn, use_reparameterization, seed, name)

965 # Log-prob is only used if use_reparameterization=False.

966 log_prob=surrogate_posterior.log_prob,

--> 967 use_reparameterization=use_reparameterization)

968

969

/usr/local/lib/python3.6/dist-packages/tensorflow/python/util/deprecation.py in new_func(*args, **kwargs)

505 'in a future version' if date is None else ('after %s' % date),

506 instructions)

--> 507 return func(*args, **kwargs)

508

509 doc = _add_deprecated_arg_notice_to_docstring(

/usr/local/lib/python3.6/dist-packages/tensorflow_probability/python/monte_carlo/expectation.py in expectation(***failed resolving arguments***)

184 raise ValueError('`f` must be a callable function.')

185 if use_reparameterization:

--> 186 return tf.reduce_mean(f(samples), axis=axis, keepdims=keepdims)

187 else:

188 if not callable(log_prob):

/usr/local/lib/python3.6/dist-packages/tensorflow_probability/python/vi/csiszar_divergence.py in divergence_fn(q_samples)

932

933 def divergence_fn(q_samples):

--> 934 target_log_prob = nest_util.call_fn(target_log_prob_fn, q_samples)

935 return discrepancy_fn(

936 target_log_prob - surrogate_posterior.log_prob(

/usr/local/lib/python3.6/dist-packages/tensorflow_probability/python/internal/nest_util.py in call_fn(fn, args)

209 return fn(**args)

210 else:

--> 211 return fn(args)

212

213

/content/pymc4/pymc4/variational/approximations.py in vectorizedfn(*q_samples)

69 def vectorize_function(function):

70 def vectorizedfn(*q_samples):

---> 71 return tf.vectorized_map(lambda samples: function(*samples), q_samples)

72

73 return vectorizedfn

/usr/local/lib/python3.6/dist-packages/tensorflow/python/ops/parallel_for/control_flow_ops.py in vectorized_map(fn, elems, fallback_to_while_loop)

448 batch_size = array_ops.shape(first_elem)[0]

449 return pfor(loop_fn, batch_size,

--> 450 fallback_to_while_loop=fallback_to_while_loop)

/usr/local/lib/python3.6/dist-packages/tensorflow/python/ops/parallel_for/control_flow_ops.py in pfor(loop_fn, iters, fallback_to_while_loop, parallel_iterations)

202 def_function.run_functions_eagerly(False)

203 f = def_function.function(f)

--> 204 outputs = f()

205 if functions_run_eagerly is not None:

206 def_function.run_functions_eagerly(functions_run_eagerly)

/usr/local/lib/python3.6/dist-packages/tensorflow/python/ops/parallel_for/control_flow_ops.py in f()

187 iters,

188 fallback_to_while_loop=fallback_to_while_loop,

--> 189 parallel_iterations=parallel_iterations)

190 # Note that we wrap into a tf.function if in eager execution mode or under

191 # XLA compilation. The latter is so that we don't compile operations like

/usr/local/lib/python3.6/dist-packages/tensorflow/python/ops/parallel_for/control_flow_ops.py in _pfor_impl(loop_fn, iters, fallback_to_while_loop, parallel_iterations, pfor_config)

245 else:

246 assert pfor_config is None

--> 247 loop_fn_outputs = loop_fn(loop_var)

248

249 # Convert outputs to Tensor if needed.

/usr/local/lib/python3.6/dist-packages/tensorflow/python/ops/parallel_for/control_flow_ops.py in loop_fn(i)

440 def loop_fn(i):

441 gathered_elems = nest.map_structure(lambda x: array_ops.gather(x, i), elems)

--> 442 return fn(gathered_elems)

443 batch_size = None

444 first_elem = ops.convert_to_tensor(nest.flatten(elems)[0])

/content/pymc4/pymc4/variational/approximations.py in <lambda>(samples)

69 def vectorize_function(function):

70 def vectorizedfn(*q_samples):

---> 71 return tf.vectorized_map(lambda samples: function(*samples), q_samples)

72

73 return vectorizedfn

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/def_function.py in __call__(self, *args, **kwds)

794 else:

795 compiler = "nonXla"

--> 796 result = self._call(*args, **kwds)

797

798 new_tracing_count = self._get_tracing_count()

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/def_function.py in _call(self, *args, **kwds)

854 # Lifting succeeded, so variables are initialized and we can run the

855 # stateless function.

--> 856 return self._stateless_fn(*args, **kwds)

857 else:

858 canon_args, canon_kwds = \

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/function.py in __call__(self, *args, **kwargs)

2922 """Calls a graph function specialized to the inputs."""

2923 with self._lock:

-> 2924 graph_function, args, kwargs = self._maybe_define_function(args, kwargs)

2925 return graph_function._filtered_call(args, kwargs) # pylint: disable=protected-access

2926

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/function.py in _maybe_define_function(self, args, kwargs)

3320

3321 self._function_cache.missed.add(call_context_key)

-> 3322 graph_function = self._create_graph_function(args, kwargs)

3323 self._function_cache.primary[cache_key] = graph_function

3324

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/function.py in _create_graph_function(self, args, kwargs, override_flat_arg_shapes)

3182 arg_names=arg_names,

3183 override_flat_arg_shapes=override_flat_arg_shapes,

-> 3184 capture_by_value=self._capture_by_value),

3185 self._function_attributes,

3186 function_spec=self.function_spec,

/usr/local/lib/python3.6/dist-packages/tensorflow/python/framework/func_graph.py in func_graph_from_py_func(name, python_func, args, kwargs, signature, func_graph, autograph, autograph_options, add_control_dependencies, arg_names, op_return_value, collections, capture_by_value, override_flat_arg_shapes)

984 _, original_func = tf_decorator.unwrap(python_func)

985

--> 986 func_outputs = python_func(*func_args, **func_kwargs)

987

988 # invariant: `func_outputs` contains only Tensors, CompositeTensors,

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/def_function.py in wrapped_fn(*args, **kwds)

612 # __wrapped__ allows AutoGraph to swap in a converted function. We give

613 # the function a weak reference to itself to avoid a reference cycle.

--> 614 return weak_wrapped_fn().__wrapped__(*args, **kwds)

615 weak_wrapped_fn = weakref.ref(wrapped_fn)

616

/content/pymc4/pymc4/variational/approximations.py in logpfn(*values)

51 def logpfn(*values):

52 split_view = self.order.split(values[0])

---> 53 _, st = flow.evaluate_meta_model(self.model, values=split_view)

54 return st.collect_log_prob()

55

/content/pymc4/pymc4/flow/executor.py in evaluate_model(self, model, state, _validate_state, values, observed, sample_shape)

459 try:

460 with model_info["scope"]:

--> 461 dist = control_flow.send(return_value)

462 if not isinstance(dist, MODEL_POTENTIAL_AND_DETERMINISTIC_TYPES):

463 # prohibit any unknown type

/content/pymc4/pymc4/coroutine_model.py in control_flow(self)

215 def control_flow(self):

216 """Iterate over the random variables in the model."""

--> 217 return (yield from self.genfn())

<ipython-input-8-1b50fd0e27e1> in GPLVM()

1 @pm.model

2 def GPLVM():

----> 3 x = yield pm.MvNormalCholesky('x', loc=tf.Variable(tf.zeros(2, dtype=tf.float64)), scale_tril=np.eye(2), batch_stack=N)

4

5 # cov_fn = pm.gp.cov.Linear(np.array(0.), np.array(0.), np.array(0.))

/usr/local/lib/python3.6/dist-packages/tensorflow/python/ops/variables.py in __call__(cls, *args, **kwargs)

260 return cls._variable_v1_call(*args, **kwargs)

261 elif cls is Variable:

--> 262 return cls._variable_v2_call(*args, **kwargs)

263 else:

264 return super(VariableMetaclass, cls).__call__(*args, **kwargs)

/usr/local/lib/python3.6/dist-packages/tensorflow/python/ops/variables.py in _variable_v2_call(cls, initial_value, trainable, validate_shape, caching_device, name, variable_def, dtype, import_scope, constraint, synchronization, aggregation, shape)

254 synchronization=synchronization,

255 aggregation=aggregation,

--> 256 shape=shape)

257

258 def __call__(cls, *args, **kwargs):

/usr/local/lib/python3.6/dist-packages/tensorflow/python/ops/variables.py in getter(**kwargs)

65

66 def getter(**kwargs):

---> 67 return captured_getter(captured_previous, **kwargs)

68

69 return getter

/usr/local/lib/python3.6/dist-packages/tensorflow/python/eager/def_function.py in invalid_creator_scope(*unused_args, **unused_kwds)

715 """Disables variable creation."""

716 raise ValueError(

--> 717 "tf.function-decorated function tried to create "

718 "variables on non-first call.")

719

ValueError: tf.function-decorated function tried to create variables on non-first call.
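The last frames of this traceback show a `tf.Variable` being constructed inside the model body, which is re-executed each time the log-probability function is traced. The sketch below reproduces the same class of error in isolation and shows the usual workaround of creating the variable once, outside the traced function. It assumes TensorFlow 2.x is installed, the function names are made up for the example, and the exact error message differs between TensorFlow versions.

```python
import tensorflow as tf


@tf.function
def creates_variable(x):
    # A new tf.Variable is requested every time this function is traced,
    # which tf.function does not allow.
    v = tf.Variable(2.0)
    return v * x


try:
    creates_variable(tf.constant(3.0))
    creation_failed = False
except ValueError:
    creation_failed = True

# Workaround: create the variable once, outside the traced function,
# and let the function capture it.
scale = tf.Variable(2.0)


@tf.function
def scaled(x):
    return scale * x


result = scaled(tf.constant(3.0))
```

This only explains the error message; whether trainable variables are needed for good inference in this model is a separate question.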

> @fonnesbeck That's the problem I am facing too!! Is there a way to create variables inside the function decorated with pm.model though? I think tensorflow probability doesn't train any variable as they are not instances of tf.Variable leading to low quality inference. I may be wrong as I don't know the specifics. Any idea @lucianopaz @Sayam753 @ferrine?

I have not investigated this :( For now I think it is quite problematic and should be separately researched in TensorFlow documentation.

@ferrine I don't know why the currently implemented model doesn't work. But I experimented with GP-LVM with the help of @Sayam753 and got very good results. So I think the tf.Variable stuff isn't the cause.

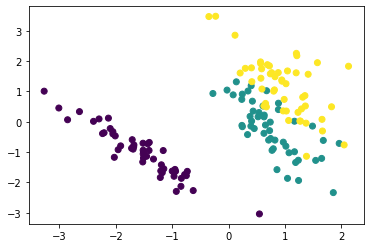

Here are the results of a GP-LVM model:

@fonnesbeck Should we temporarily change the example in the notebook to a GP-LVM model?

Anyways, I have added the GP-LVM example in the notebook.

Thanks @AlexAndorra for the reviews. Have made the changes accordingly. Feel free to inform me if I missed something...

This all looks good to me, unless @bwengals has any concerns before we merge. I will test it on a couple simple models I am currently working with.

I don't understand the test failures. Is that a temporary glitch, or has something changed?