TF2.8 support

This is same as andrey-churkin 's #1152 with some resolving merge error.

Changes

-

The Keras code was moved to the separate GitHub repository keras-team/keras since TF 2.6.0. The API endpoints for

tf.kerasstay unchanged. So, all imports from thetensorflow.python.kerasare deprecated for TF >= 2.6.0. These imports were replaced by imports fromtensorflow.kerasfor Public Keras API and by imports fromkerasfor Private API. -

For backward compatibility with TF versions, which are older than 2.6.0, the

tf_internalsmodule was created. It contains imports from the TF/Keras private API. For TF < 2.6 thetensorflow.python.kerasendpoint should be used for imports from the Keras private API. For TF >= 2.6 thekerasendpoint should be used. The endpoint for imports from private TF API was not changed. -

The attribute

map_vectorizationwas removed from thetf.data.experimental.OptimizationOptionssince TF 2.7. For backward compatibility with TF version, which are older than 2.7.0, the additional condition was added to theexamples.tensorflow.classification.datasets.builder.DatasetBuilderclass. -

The TF 2.8.0 depends on numpy >= 1.20. For this reason, numpy version was updated.

-

The code of the

tf.keras.applications.mobilenet.MobileNetmodel was updated since TF 2.6. For this reason, additional condition was added to thetests.tensorflow.test_models.mobilenetmodule. -

The reference graphs for TF 2.5 and TF 2.8.0 are different. The new

NoOpnode was added to the graphs for TF 2.8. This node is used as a placeholder for control edges. Additional step for TF 2.8 was added to the tests which remove this node. Reference graphs for TF 2.5 and TF 2.8.0 are the same now. -

The

tf.ones()method was changed in the TF 2.8.0 (additional TF operations appear in the TF graph). For this reason, reference graph for Mask R-CNN was updated for TF 2.8.0.

Reason for changes

These changes are required to support TensorFlow 2.8.0 version.

Related tickets

Ref: 79015 Ref: 79017

Tests

- The symbolic links to

reference_graphs/2.5were added to thereference_graphs/2.8. Only graphs for MobileNet and Mask R-CNN were updated. The reasons were highlighted above.

@negvet, Would you please give us advice to confirm which tests should be validated to merge this PR?

My thought is

- precommit tests

-

tests/tensorflow/test_sanity_sample.py -

tests/tensorflow/test_weekly.py -

tests/tensorflow/test_sota_checkpoints.py

Do we need more?

All the comments applied @vinnamkim

@negvet, Would you please give us advice to confirm which tests should be validated to merge this PR?

My thought is

- precommit tests

tests/tensorflow/test_sanity_sample.pytests/tensorflow/test_weekly.pytests/tensorflow/test_sota_checkpoints.pyDo we need more?

@dongkwan-kim01 Our pre-commit tests are all green. For the next step, I would like you to report the results of the above tests we discussed. Documentation for the roles of these tests should also be included.

@negvet, Would you please give us advice to confirm which tests should be validated to merge this PR? My thought is

- precommit tests

tests/tensorflow/test_sanity_sample.pytests/tensorflow/test_weekly.pytests/tensorflow/test_sota_checkpoints.pyDo we need more?

@dongkwan-kim01 Our pre-commit tests are all green. For the next step, I would like you to report the results of the above tests we discussed. Documentation for the roles of these tests should also be included.

Yes I'll attach the test results, and the documentation also be included when it's ready.

Thanks for your contribution! Could you provide in the ticket a summary of the nightly and weekly tests for this PR?

Yes, I'll attach the weekly and nightly test to the ticket when it's done, but currently I'm troubleshooting the version problems that occur during weekly and nightly tests. I'll provide the results when it's done. Thank you!

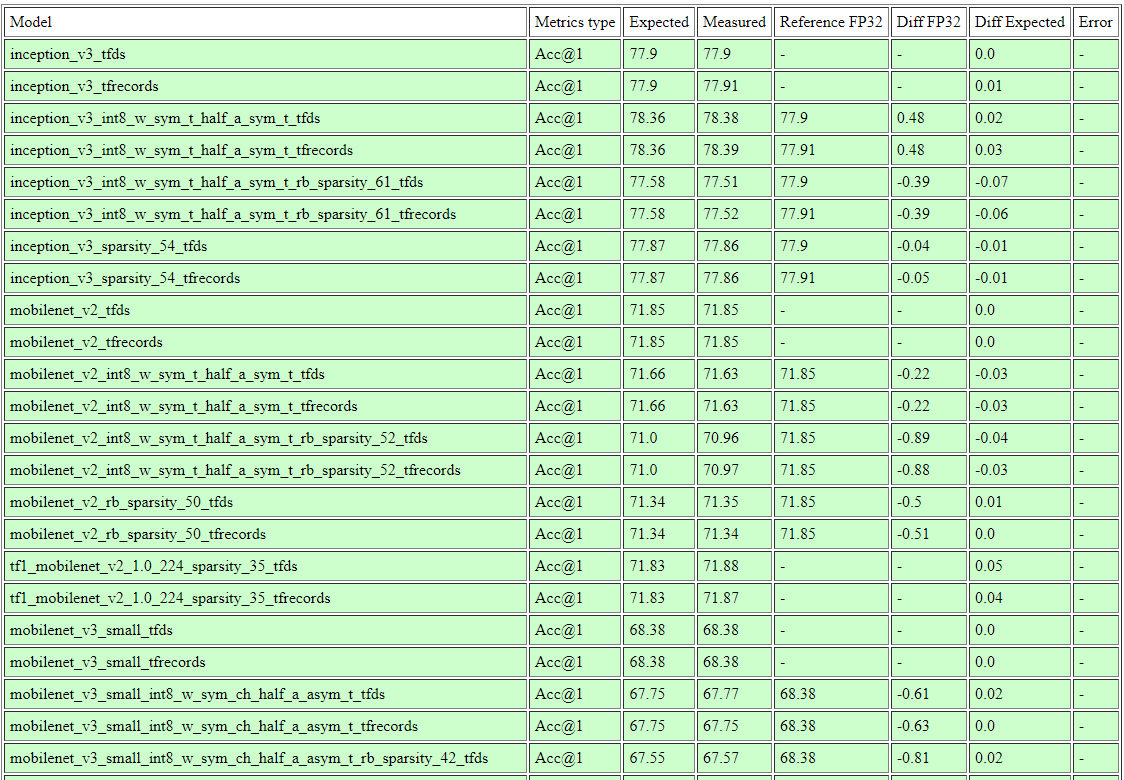

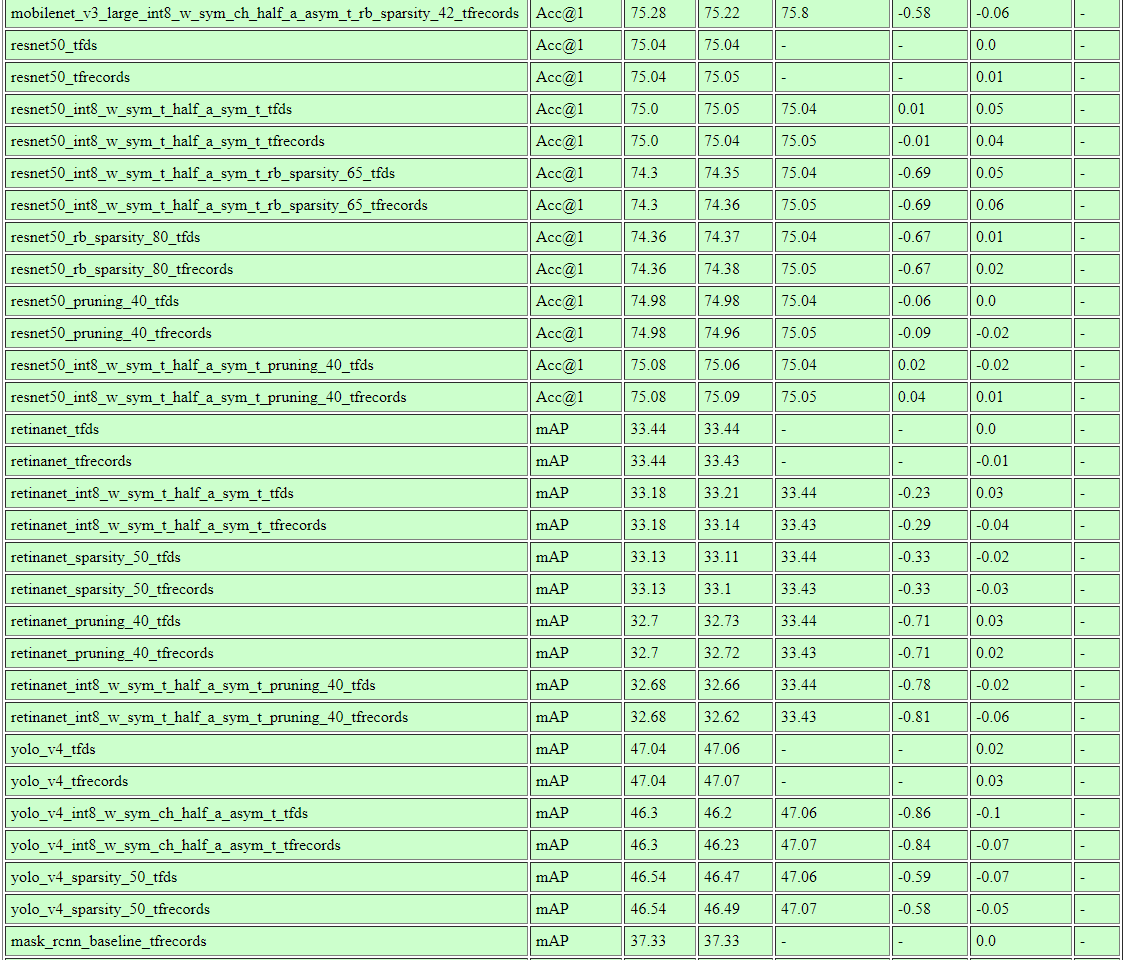

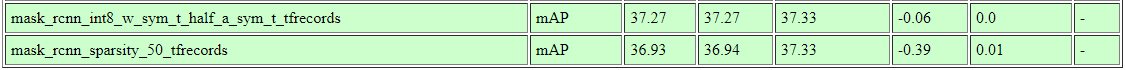

Results for the evaluation:

Result for the openvino IR model evaluation(Mask model AC not supported):

| Model | AC Measured | Reference FP32(OV) | Diff FP32 | within Threshold |

|---|---|---|---|---|

| inception_v3_imagenet_rb_sparsity_int8 | 77.24% | 78.03% | -0.79% | O |

| retinanet_coco_int8 | 33.16% | 33.33% | -0.17% | O |

| mask_rcnn_coco_int8 | - | - | - | - |

-> meets within threshold(-1.0%)

merge upstream develop because of pylint error in pytorch

Results for the evaluation:

Result for the openvino IR model evaluation(Mask model AC not supported): Model AC Measured Reference FP32(OV) Diff FP32 within Threshold inception_v3_imagenet_rb_sparsity_int8 77.24% 78.03% -0.79% O retinanet_coco_int8 33.16% 33.33% -0.17% O mask_rcnn_coco_int8 - - - -

-> meets within threshold(-1.0%)

@dongkwan-kim01, It looks like very strange. What precision did you use in the test? As you know, TF 2.8 uses TensorFloat-32 by default.

Results for the evaluation:

Result for the openvino IR model evaluation(Mask model AC not supported): Model AC Measured Reference FP32(OV) Diff FP32 within Threshold inception_v3_imagenet_rb_sparsity_int8 77.24% 78.03% -0.79% O retinanet_coco_int8 33.16% 33.33% -0.17% O mask_rcnn_coco_int8 - - - - -> meets within threshold(-1.0%)

@dongkwan-kim01, It looks like very strange. What precision did you use in the test? As you know, TF 2.8 uses TensorFloat-32 by default.

I used same configuration(examples/tensorflow/{task}/configs) and unit test code in tests/tensorflow/test_sota_checkpoints.py.

And for the TF32 precision, AFAIK tensorflow adopting the TF32 precision as default before version 2.5, and when I checked in the TF2.5.3(current develop version) and TF2.8.2 both of them using TF32 as default. Can it be a problem in the new TF version?

Results for the evaluation:

Result for the openvino IR model evaluation(Mask model AC not supported): Model AC Measured Reference FP32(OV) Diff FP32 within Threshold inception_v3_imagenet_rb_sparsity_int8 77.24% 78.03% -0.79% O retinanet_coco_int8 33.16% 33.33% -0.17% O mask_rcnn_coco_int8 - - - - -> meets within threshold(-1.0%)

@dongkwan-kim01, It looks like very strange. What precision did you use in the test? As you know, TF 2.8 uses TensorFloat-32 by default.

I used same configuration(

examples/tensorflow/{task}/configs) and unit test code intests/tensorflow/test_sota_checkpoints.py. And for the TF32 precision, AFAIK tensorflow adopting the TF32 precision as default before version 2.5, and when I checked in the TF2.5.3(current develop version) and TF2.8.2 both of them using TF32 as default. Can it be a problem in the new TF version?

@andrey-churkin Please take a look at accuracy drop issue.

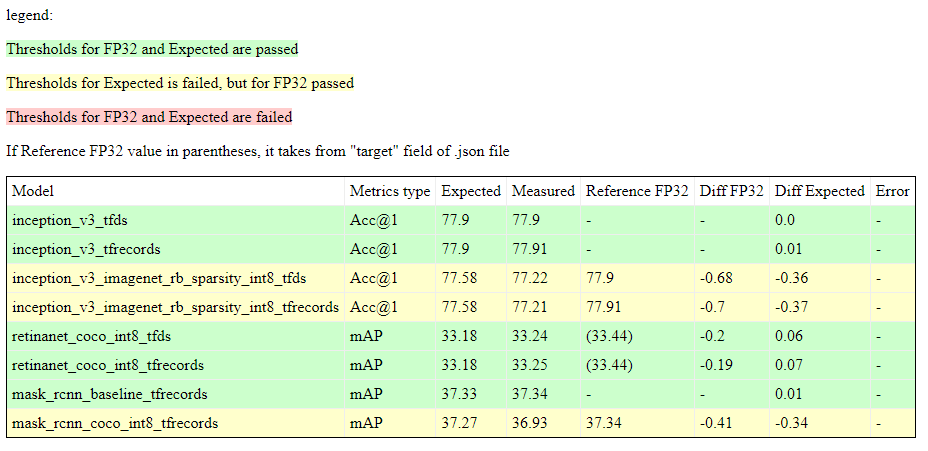

The test in CI server has failed in one model's Diff Expected(mobilenet_v3 int8 & sparsity 42) painted yellow in the figure, while the diff threshold is -0.1%p. Should we adjust the threshold for this model?

@andrey-churkin @alexsu52

@dongkwan-kim01 as you can see, the model's expected accuracy value is 67.55, which means that this very same model was specifically measured to reach 67.55 accuracy in the past. If you did not retrain the models in question, and if this is a degradation specific to this PR, then something's wrong with the code in the PR that needs to be investigated.

@dongkwan-kim01 as you can see, the model's expected accuracy value is 67.55, which means that this very same model was specifically measured to reach 67.55 accuracy in the past. If you did not retrain the models in question, and if this is a degradation specific to this PR, then something's wrong with the code in the PR that needs to be investigated.

Yes, the reason why the accuracy is different from the previous tf version should be investigated, though the main logical difference is changing import path, from deprecated tf.python.keras to tf.keras.

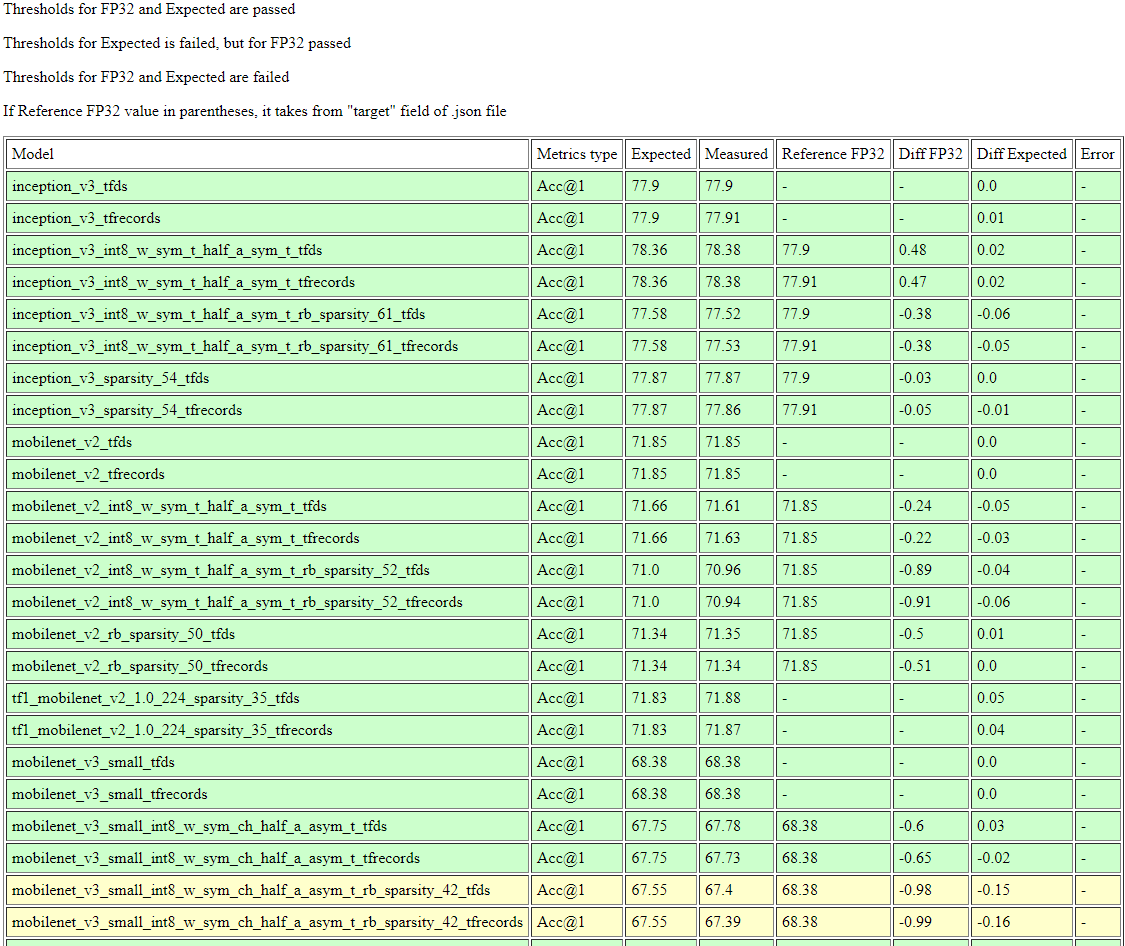

Below is the result from TF2.5 test with importing the tf.keras, rather tf.python.keras in our develop code. The performance is same as previously tested accuracy. I think the reason that performance has changed would come from the framework change, not from changing tf.python.keras to tf.keras. I'm still investigating the reason.

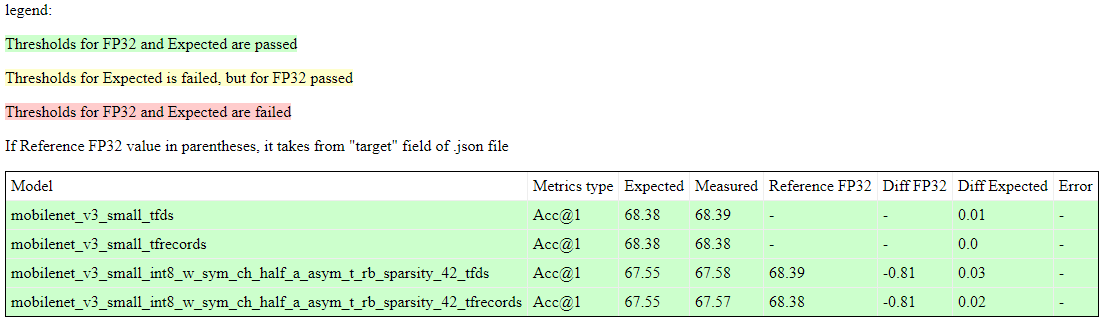

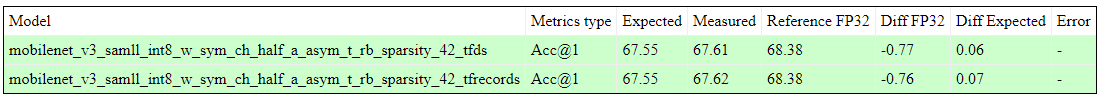

@vshampor @andrey-churkin While running the TF2.8 weekly training, mobilenet_v3 int8 sparsity 42 test shows 67.61% and 67.62% accuracy which is better than expected result 67.55%, so that we can pass the nightly test.

And the reference fp32 full precision Accuracy in TF2.8: tfrecords 68.39%, tfds 68.38%

@vshampor @andrey-churkin While running the TF2.8 weekly training, mobilenet_v3 sparsity quantization test shows 67.61% and 67.62% accuracy which is better than expected result 67.55%, so that we can pass the nightly test.

And the reference fp32 full precision Accuracy in TF2.8: tfrecords 68.39%, tfds 68.38%

Hi @andrey-churkin, could you give an opinion about this result?

The improvement looks great, but now, just to be on the safe side, we would like to understand what changed exactly between the situation where you posted the screenshots with accuracy degradation and the current state. Was there a bug fixed somewhere in the code?

I didn't fix the bug in the code, rather retrained the model in tf2.8. Code level changes were from keras layer importing path, so no specific reason why the accuracy has slightly changed.. Is there any suggestion about the accuracy change?

@dongkwan-kim01 Which test did you launch exactly in order to get the results above? A pytest launch command would be fine.

@vshampor

pytest --junitxml nncf-tests.xml tests/tensorflow/test_sota_checkpoints.py \

-s \

--sota-checkpoints-dir=/REDACTED/develop/tensorflow \

--sota-data-dir=/REDACTED/tensorflow \

--metrics-dump-path=./metrics_dump \

If you need data for test, please tell me.

So in an offline discussion it became clear that the accuracy in 2.8 for the two models could only be retained after retraining in 2.8, which, unfortunately, makes the resulting checkpoints incompatible with 2.5. We will have to keep both versions of the checkpoints in this case if we want to claim support to both 2.5 and 2.8.

CI nightly test passed(test 85) with replacing mobilenet_v3_small int8 sparsity 42 to TF2.8 trained ckpt.

Training for det, seg models are still running. I'll upload it to CI server in the separate path from our develop branch is using.

@dongkwan-kim01 Thanks for sharing the results! Good job!

I would like to summarize:

-

Our current TF2.5 checkpoints were checked on this PR (sota_eval_tensorflow, CI job # 65) The

mobilenet_v3_small_int8_w_sym_ch_half_a_asym_t_rb_sparsity_42was not passed the test. This model was retrained using TF2.8 and the current branch. -

Our current TF2.5 checkpoints, where the

mobilenet_v3_small_int8_w_sym_ch_half_a_asym_t_rb_sparsity_42model was changed, were checked again on this PR (sota_eval_tensorflow, CI job # 85). All models passed the test. -

We are waiting for the results of the weekly test now.

So, I think if this test is successful we can merge this PR. @dongkwan-kim01 Could you please check this message and comment if something is wrong? Thank you!

@dongkwan-kim01 Thanks for sharing the results! Good job!

I would like to summarize:

- Our current TF2.5 checkpoints were checked on this PR (sota_eval_tensorflow, CI job # 65) The

mobilenet_v3_small_int8_w_sym_ch_half_a_asym_t_rb_sparsity_42was not passed the test. This model was retrained using TF2.8 and the current branch.- Our current TF2.5 checkpoints, where the

mobilenet_v3_small_int8_w_sym_ch_half_a_asym_t_rb_sparsity_42model was changed, were checked again on this PR (sota_eval_tensorflow, CI job # 85). All models passed the test.- We are waiting for the results of the weekly test now.

So, I think if this test is successful we can merge this PR. @dongkwan-kim01 Could you please check this message and comment if something is wrong? Thank you!

Thank you for your summarization. All answer for 1, 2, 3 are yes, but since the weekly training was done for classification models, I've tested them and found test failed for all mobilenet related algorithms with accuracy drop, though they trained in TF2.8 environment. I'll make ticket about it, and at this time we can merge this PR, with loosening some accuracy for nightly test.

jenkins retest this please