outward_normal

In the function `outward_normal` in render.py, when `method == 'finite_differences'`, the gradient is estimated with the tetrahedron technique.
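For reference, here is a minimal NumPy sketch of a tetrahedron-style finite-difference gradient. It assumes the common four-sample stencil placed at the vertices of a regular tetrahedron. The names `tetrahedron_gradient`, `tetrahedron_normal` and `TETRA_OFFSETS` are hypothetical, and this is not the code in render.py.

```python
import numpy as np

# Offsets to the vertices of a regular tetrahedron (a common four-sample stencil).
TETRA_OFFSETS = np.array([
    [ 1.0, -1.0, -1.0],
    [-1.0, -1.0,  1.0],
    [-1.0,  1.0, -1.0],
    [ 1.0,  1.0,  1.0],
])

def tetrahedron_gradient(f, p, eps=1e-3):
    """Estimate grad f(p) from four samples at p + eps * k_i.

    The offsets sum to zero and sum_i k_i k_i^T = 4 I, so for a linear f the
    weighted sum of samples divided by 4 * eps is exactly the gradient.
    """
    p = np.asarray(p, dtype=float)
    samples = np.array([f(p + eps * k) for k in TETRA_OFFSETS])
    return TETRA_OFFSETS.T @ samples / (4.0 * eps)

def tetrahedron_normal(f, p, eps=1e-3):
    """Unit-length direction of the estimated gradient."""
    g = tetrahedron_gradient(f, p, eps)
    return g / np.linalg.norm(g)

# Usage: sphere SDF (negative inside), queried at a point outside the sphere.
sphere_sdf = lambda q: np.linalg.norm(q) - 1.0
print(tetrahedron_normal(sphere_sdf, [2.0, 0.0, 0.0]))  # close to [1, 0, 0]
```

Because the estimate is exact for linear functions, any error comes from the curvature of `f` inside the stencil. For an SDF that is negative inside, the gradient points outward; for an occupancy that is 1 inside, it points inward, so the sign would need to be flipped to get an outward normal.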

This is suitable for an SDF, but what about an occupancy function?

Is there any theoretical background showing that it is also suitable for an occupancy function under some conditions (for example, if the tetrahedron is small enough and the occupancy function is implemented as an MLP)?

Or is `outward_normal` not recommended for occupancy functions?

Hi! Thanks for the interest in the library.

If I understand the question correctly, there should be no problem using this method for an occupancy function. I did so to generate some of the examples in the paper!

The only assumption we really need is that the underlying function is smooth. If the occupancy function were a true 0-1 function, you'd be right: this outward normal computation would output nonsense. However, neural occupancy functions are slightly smoothed at the boundary.
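To see the difference between a true 0-1 function and a smoothed one, here is a small NumPy experiment on a unit sphere. The sigmoid `soft_occupancy` is an assumed stand-in for a neural occupancy network, and the four-point stencil is the common tetrahedron one, not necessarily exactly what render.py does; all names are hypothetical.

```python
import numpy as np

# Four-sample tetrahedron stencil (assumed, see above).
OFFSETS = np.array([[1.0, -1.0, -1.0], [-1.0, -1.0, 1.0], [-1.0, 1.0, -1.0], [1.0, 1.0, 1.0]])

def tetrahedron_gradient(f, p, eps):
    samples = np.array([f(p + eps * k) for k in OFFSETS])
    return OFFSETS.T @ samples / (4.0 * eps)

def hard_occupancy(q):
    """True 0-1 occupancy of the unit sphere: 1 inside, 0 outside."""
    return 1.0 if np.linalg.norm(q) < 1.0 else 0.0

def soft_occupancy(q, sharpness=10.0):
    """Sigmoid-smoothed occupancy of the unit sphere (stand-in for a neural occupancy)."""
    return 1.0 / (1.0 + np.exp(-sharpness * (1.0 - np.linalg.norm(q))))

def angle_deg(u, v):
    """Angle between two nonzero vectors, in degrees."""
    cos = np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v))
    return np.degrees(np.arccos(np.clip(cos, -1.0, 1.0)))

n = np.array([1.0, 0.5, 0.2]) / np.linalg.norm([1.0, 0.5, 0.2])  # outward normal
on_surface = n    # point on the unit sphere
inside = 0.9 * n  # point well inside the sphere
eps = 1e-3

# Occupancy is 1 inside, so its gradient should point inward, i.e. along -n.
g_soft = tetrahedron_gradient(soft_occupancy, on_surface, eps)
g_hard = tetrahedron_gradient(hard_occupancy, on_surface, eps)
g_hard_inside = tetrahedron_gradient(hard_occupancy, inside, eps)

print("smoothed, on surface:", angle_deg(g_soft, -n), "degrees off")
print("0-1, on surface:", angle_deg(g_hard, -n), "degrees off")
print("0-1, inside:", g_hard_inside)  # all four samples equal -> zero vector
```

With the 0-1 function, the estimate is exactly zero whenever all four samples land on the same side of the surface, and near the surface it can only take a handful of quantized directions (sums of subsets of the offsets).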

The only danger is that the neural occupancy function is 'too sharp' relative to the scale of the finite-difference estimate, making it behave like a 0-1 function. In that case, the finite-difference width `eps` could be reduced. I don't know of any theoretical characterization of this, although you could probably derive one from a smoothness/Lipschitz bound on an MLP.
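One way to make the 'too sharp relative to eps' condition concrete is a Taylor expansion of the estimator. This is a sketch assuming `outward_normal` uses the common four-point tetrahedron stencil `k_1, ..., k_4` below and that `f` is three times continuously differentiable near `p`:

```math
\begin{aligned}
k_1 &= (1,-1,-1),\quad k_2 = (-1,-1,1),\quad k_3 = (-1,1,-1),\quad k_4 = (1,1,1),\\
g_\varepsilon(p) &= \frac{1}{4\varepsilon}\sum_{i=1}^{4} k_i\, f(p+\varepsilon k_i),\\
\textstyle\sum_i k_i &= 0,\qquad \textstyle\sum_i k_i k_i^{\top} = 4I,\qquad k_{i,x}\,k_{i,y}\,k_{i,z} = 1 \ \text{for every } i,\\
g_\varepsilon(p) &= \nabla f(p) + \varepsilon \begin{pmatrix} f_{yz}(p)\\ f_{xz}(p)\\ f_{xy}(p) \end{pmatrix} + O(\varepsilon^2).
\end{aligned}
```

In the expansion, the constant term cancels because the offsets sum to zero, the linear term gives exactly `∇f(p)`, and of the quadratic terms only the mixed second derivatives survive, because `k_x k_y = k_z` (and similarly for the other pairs) at every vertex. So the bias is first order in eps and bounded by eps times the largest mixed second derivative. For an occupancy of the form `f = σ(s·d)`, second derivatives can grow like `s²` while `|∇f|` grows like `s`, so the relative error is governed roughly by the product `eps·s`.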

Hopefully that helps, just let me know if I misunderstood the question!

Hi @nmwsharp, thank you very much for the reply! Regarding the assumption that the underlying function is smooth: yes, that is necessary, but here is what worries me.

If the steepness of the smooth transition is not the same everywhere, the tetrahedron algorithm might return an inaccurate gradient.

(For example, consider a case where the function looks like a steep sigmoid at one point and a gentler sigmoid at another.)

Therefore, in my opinion, the tetrahedron algorithm works if the steepness of the smooth transition is guaranteed to be the same everywhere.

If that is true, what we need is an eps small enough that the steepness can be regarded as constant locally, over the tetrahedron.
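This concern can be checked numerically. The sketch below keeps eps fixed and compares a gentle and a steep transition. It assumes a sigmoid occupancy of a unit sphere with a chosen `sharpness` and the common four-point tetrahedron stencil; all names are hypothetical.

```python
import numpy as np

# Four-sample tetrahedron stencil (assumed).
OFFSETS = np.array([[1.0, -1.0, -1.0], [-1.0, -1.0, 1.0], [-1.0, 1.0, -1.0], [1.0, 1.0, 1.0]])

def tetrahedron_gradient(f, p, eps):
    samples = np.array([f(p + eps * k) for k in OFFSETS])
    return OFFSETS.T @ samples / (4.0 * eps)

def sphere_occupancy(q, sharpness):
    """Sigmoid occupancy of the unit sphere; `sharpness` sets how steep the 0-to-1 transition is."""
    return 1.0 / (1.0 + np.exp(-sharpness * (1.0 - np.linalg.norm(q))))

def angle_deg(u, v):
    cos = np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v))
    return np.degrees(np.arccos(np.clip(cos, -1.0, 1.0)))

n = np.array([1.0, 0.5, 0.2]) / np.linalg.norm([1.0, 0.5, 0.2])  # surface point and outward normal
eps = 1e-3  # one fixed stencil size for both cases

errors = {}
for sharpness in (10.0, 10_000.0):  # gentle vs. steep transition
    g = tetrahedron_gradient(lambda q: sphere_occupancy(q, sharpness), n, eps)
    errors[sharpness] = angle_deg(g, -n)  # occupancy gradient should point along -n
    print(f"sharpness={sharpness:g}, eps*sharpness={eps * sharpness:g}: "
          f"{errors[sharpness]:.2f} degrees off")
```

In this toy setup, the product `eps * sharpness` rather than eps alone seems to decide whether the estimate is usable.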

But how can we know whether eps is small enough?

(Otherwise, the resulting gradient cannot be guaranteed to be accurate.)

Any comment would be appreciated. Thank you in advance!

Yeah, I think that sounds right to me.

In terms of detecting when something has gone wrong and automatically tuning eps, I'm not sure; I've never really explored that. You could try a local linearity test: evaluate the function at 3 points on a line, spaced eps apart and centered on the point of interest. If the value at the midpoint is close to the average of the values at the two endpoints, then the function is locally linear. If not, try a smaller eps. (I have never implemented this, though; I'm just imagining.)
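The midpoint test described above could be sketched as follows. This is a hypothetical implementation: `is_locally_linear`, `choose_eps`, testing along the three coordinate axes, and the tolerance `rtol` are all assumptions, not part of the library.

```python
import numpy as np

def is_locally_linear(f, p, eps, rtol=0.1, atol=1e-12):
    """Midpoint test along each coordinate axis.

    For each axis, the deviation of f(p) from the average of f(p - eps*e) and
    f(p + eps*e) is compared against the largest change across any of the chords.
    """
    p = np.asarray(p, dtype=float)
    center = f(p)
    deviations, spans = [], []
    for axis in range(3):
        step = np.zeros(3)
        step[axis] = eps
        lo, hi = f(p - step), f(p + step)
        deviations.append(abs(center - 0.5 * (lo + hi)))  # midpoint vs. chord average
        spans.append(abs(hi - lo))                        # change across the chord
    return max(deviations) <= rtol * max(spans) + atol

def choose_eps(f, p, eps=1e-2, min_eps=1e-6, rtol=0.1):
    """Halve eps until the midpoint test passes or eps drops to min_eps or below."""
    while eps > min_eps and not is_locally_linear(f, p, eps, rtol):
        eps *= 0.5
    return eps

# Usage: a steep sigmoid occupancy of the unit sphere, queried just inside the surface.
n = np.array([1.0, 0.5, 0.2]) / np.linalg.norm([1.0, 0.5, 0.2])
steep = lambda q: 1.0 / (1.0 + np.exp(-1000.0 * (1.0 - np.linalg.norm(q))))
print(choose_eps(steep, 0.998 * n))  # smaller than the starting eps of 1e-2
```

Scaling the tolerance by the largest chord change (rather than per axis) avoids rejecting an axis along which the function is nearly flat.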

Hi @nmwsharp, thank you very much for your comment; I really appreciate it. Unfortunately, though, the local linearity test does not resolve my worry.

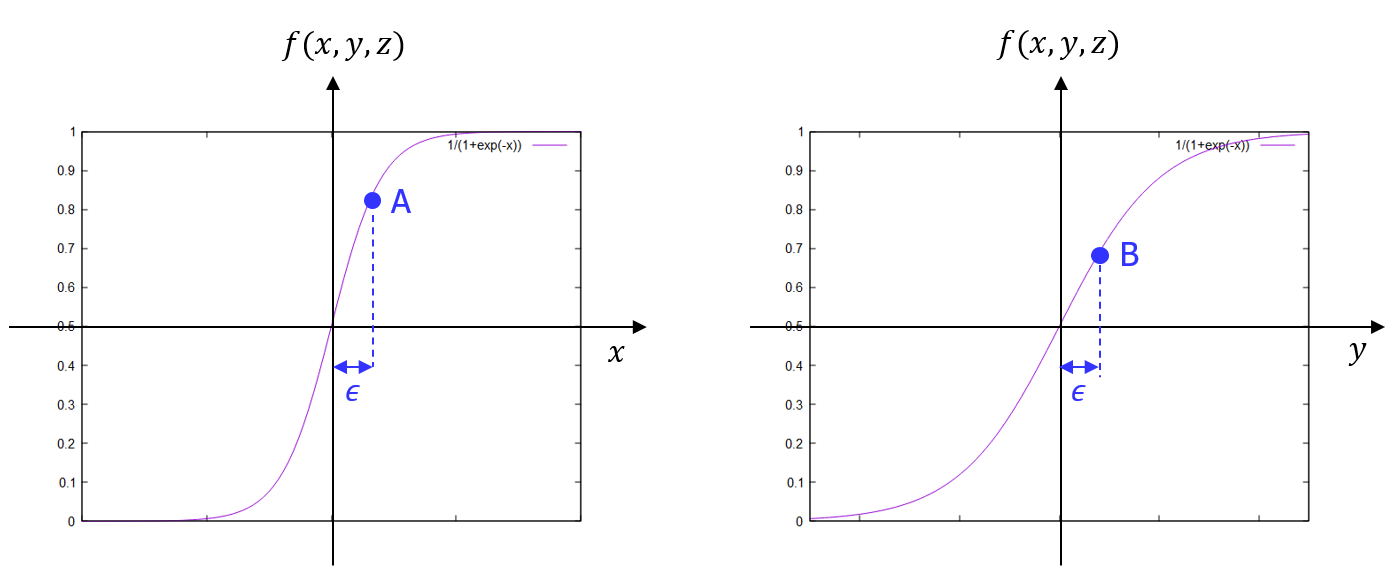

I made the illustration above to show the issue.

Suppose we have a surface, and the normal vector at our reference point is (1, 1, 1).

In that case, what we expect from our continuous occupancy function is that its gradient has the same component in the x, y, and z directions.

However, if nothing constrains how the occupancy function is constructed, its continuous transition curves could differ along the x, y, and z directions, so it could return different values (shown as A and B in the illustration).
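One way to experiment with this scenario: the sketch below builds a hypothetical occupancy for the plane through the origin with normal (1, 1, 1), whose sigmoid steepness varies along x, and compares narrow and wide central differences at the origin, a point on the 0.5 level set. Since the gradient of `σ(s(p)·d(p))` is `σ'(s·d)·(s·∇d + d·∇s)`, the steepness-variation term is multiplied by `d` and vanishes on the surface itself, while a wide stencil does sample the varying steepness. The function names and the steepness profile are assumptions chosen for illustration.

```python
import numpy as np

N = np.ones(3) / np.sqrt(3.0)  # unit normal of the plane, direction (1, 1, 1)

def occupancy(q):
    """Hypothetical occupancy of the half-space N.q < 0, with steepness varying along x."""
    steepness = 50.0 + 400.0 * q[0]  # stays positive for |x| < 0.125
    signed_depth = -(N @ q)          # positive on the occupied side
    return 1.0 / (1.0 + np.exp(-steepness * signed_depth))

def central_partials(f, p, h):
    """Central-difference partial derivatives along x, y, z with step h."""
    p = np.asarray(p, dtype=float)
    partials = []
    for axis in range(3):
        step = np.zeros(3)
        step[axis] = h
        partials.append((f(p + step) - f(p - step)) / (2.0 * h))
    return np.array(partials)

origin = np.zeros(3)  # lies on the 0.5 level set
print(central_partials(occupancy, origin, 1e-6))  # three nearly equal partials
print(central_partials(occupancy, origin, 5e-2))  # x partial differs from the y and z partials
```

In this example the narrow-stencil partials agree, matching the (1, 1, 1) normal, while the wide-stencil partials differ along x, which is the kind of A/B discrepancy sketched in the illustration.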

The problem is that we cannot tell whether the values we get are accurate, because the local linearity test does not tell us whether this behavior (steepness) of our occupancy function really represents the gradient of the surface.

Does my worry make sense?