DeepSpeed

DeepSpeed copied to clipboard

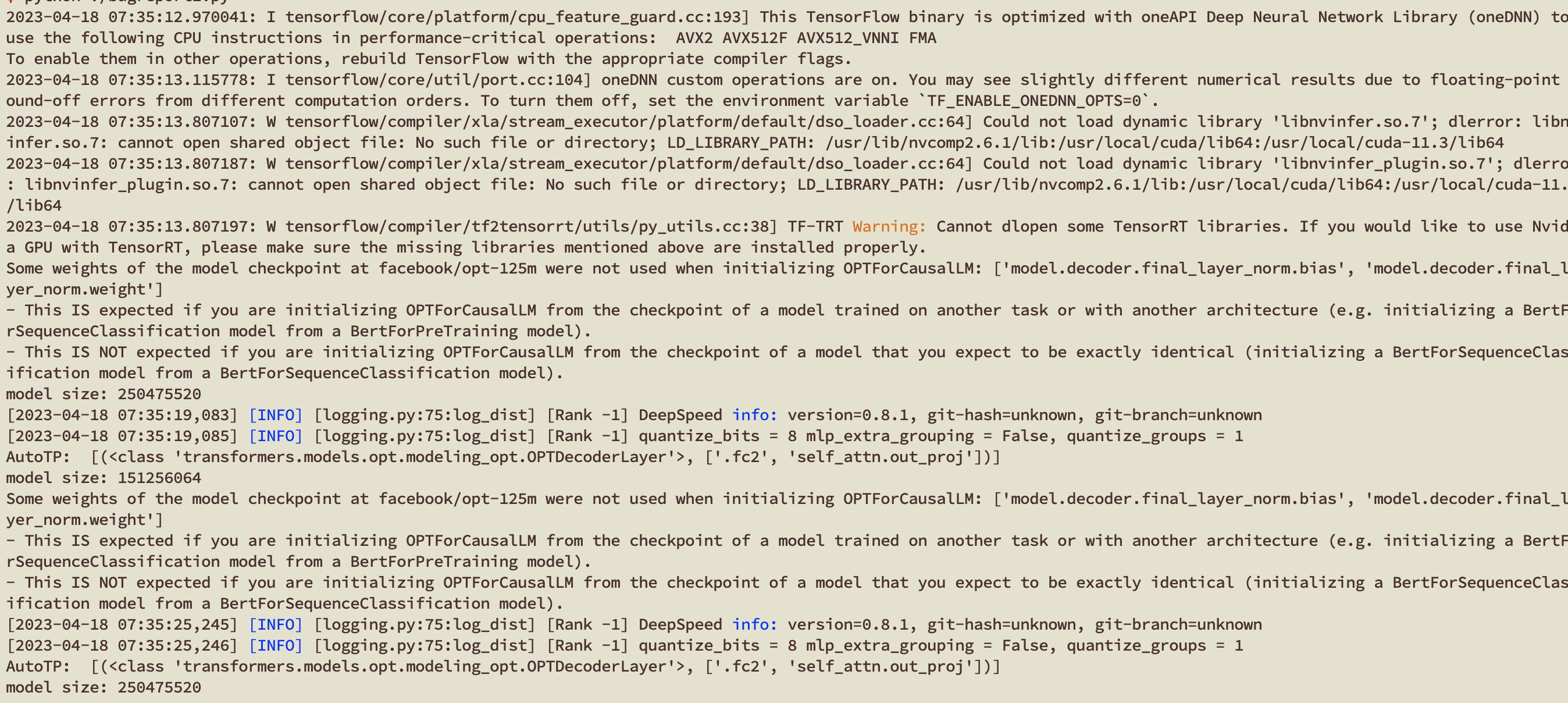

[BUG] InferenceEngine cannot parse a CPU model successfully in PyTorch, losing some parameters

Describe the bug

The deepspeed.init_inference function cannot correctly initialize a model that resides on the CPU: the total size of the model parameters changes (250475520 bytes before the call, 151256064 bytes after it).

To Reproduce

import torch
import deepspeed
from transformers import AutoModelForCausalLM

"""
cpu model -> init_inference
"""
opt125m = AutoModelForCausalLM.from_pretrained(
    "facebook/opt-125m", torch_dtype=torch.float16
)
print("model size:", sum(p.numel() * p.element_size() for p in opt125m.parameters()))
# model size: 250475520

model = deepspeed.init_inference(
    model=opt125m,
    tensor_parallel={"tp_size": 1},
    dtype=torch.float16,
    zero={"stage": 0},
    quant={"enabled": False},
    replace_with_kernel_inject=False,
    enable_cuda_graph=False,
).cuda()
print("model size:", sum(p.numel() * p.element_size() for p in model.parameters()))
# model size: 151256064

"""
gpu model -> init_inference
"""
opt125m = AutoModelForCausalLM.from_pretrained(
    "facebook/opt-125m", torch_dtype=torch.float16
)
model = deepspeed.init_inference(
    model=opt125m.cuda(),
    tensor_parallel={"tp_size": 1},
    dtype=torch.float16,
    zero={"stage": 0},
    quant={"enabled": False},
    replace_with_kernel_inject=False,
    enable_cuda_graph=False,
).cuda()
print("model size:", sum(p.numel() * p.element_size() for p in model.parameters()))
# model size: 250475520

Expected behavior

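The total size of the model parameters should stay the same when no related optimizations are applied, regardless of whether the model is on the CPU or the GPU when it is passed to init_inference.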
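To narrow down which parameters go missing, it may help to compare a per-parameter snapshot taken before and after the init_inference call instead of only the totals. The sketch below is not part of DeepSpeed: param_sizes and diff_param_sizes are hypothetical helper names introduced here for illustration, and they only assume an object that exposes named_parameters(), as a torch.nn.Module does. The byte count uses the same numel() * element_size() formula as the reproduction script.

```python
def param_sizes(module, strip_prefix=""):
    """Map each parameter name to its size in bytes.

    Works on any object exposing named_parameters(), e.g. a torch.nn.Module.
    strip_prefix removes a leading wrapper prefix from names so that
    snapshots of a wrapped and an unwrapped model can be compared.
    """
    sizes = {}
    for name, p in module.named_parameters():
        if strip_prefix and name.startswith(strip_prefix):
            name = name[len(strip_prefix):]
        sizes[name] = p.numel() * p.element_size()
    return sizes


def diff_param_sizes(before, after):
    """Compare two name -> bytes snapshots (hypothetical diagnostic helper)."""
    common = set(before) & set(after)
    return {
        # Names present before the transformation but gone afterwards.
        "missing": sorted(set(before) - set(after)),
        # Names that only exist afterwards.
        "added": sorted(set(after) - set(before)),
        # Names present in both snapshots whose byte size changed.
        "resized": sorted(n for n in common if before[n] != after[n]),
        "bytes_before": sum(before.values()),
        "bytes_after": sum(after.values()),
    }
```

In the reproduction above, one would call param_sizes(opt125m) before init_inference and param_sizes(model, strip_prefix="module.") afterwards, then pass both snapshots to diff_param_sizes. The "module." prefix is an assumption about how the returned engine names the wrapped model's parameters; adjust it if the names differ.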

ds_report output

--------------------------------------------------
DeepSpeed C++/CUDA extension op report
--------------------------------------------------
NOTE: Ops not installed will be just-in-time (JIT) compiled at
runtime if needed. Op compatibility means that your system
meet the required dependencies to JIT install the op.
--------------------------------------------------
JIT compiled ops requires ninja
ninja .................. [OKAY]
--------------------------------------------------
op name ................ installed .. compatible
--------------------------------------------------
[WARNING] async_io requires the dev libaio .so object and headers but these were not found.
[WARNING] async_io: please install the libaio-dev package with apt
[WARNING] If libaio is already installed (perhaps from source), try setting the CFLAGS and LDFLAGS environment variables to where it can be found.
async_io ............... [NO] ....... [NO]
cpu_adagrad ............ [NO] ....... [OKAY]
cpu_adam ............... [NO] ....... [OKAY]
fused_adam ............. [NO] ....... [OKAY]
fused_lamb ............. [NO] ....... [OKAY]
quantizer .............. [NO] ....... [OKAY]
random_ltd ............. [NO] ....... [OKAY]
[WARNING] sparse_attn requires a torch version >= 1.5 but detected 2.0
[WARNING] using untested triton version (2.0.0), only 1.0.0 is known to be compatible
sparse_attn ............ [NO] ....... [NO]
spatial_inference ...... [NO] ....... [OKAY]
transformer ............ [NO] ....... [OKAY]
stochastic_transformer . [NO] ....... [OKAY]
transformer_inference .. [NO] ....... [OKAY]
utils .................. [NO] ....... [OKAY]
--------------------------------------------------
DeepSpeed general environment info:
torch install path ............... [....../python3.8/site-packages/torch']
torch version .................... 2.0.0+cu117
deepspeed install path ........... [.......python3.8/site-packages/deepspeed']
deepspeed info ................... 0.8.1, unknown, unknown
torch cuda version ............... 11.7
torch hip version ................ None
nvcc version ..................... 11.6
deepspeed wheel compiled w. ...... torch 1.12, cuda 11.6

Screenshots

System info (please complete the following information):

- OS: Ubuntu 20.04

- GPU: A100, RTX 3090