Lots of "Load Error" and various other errors

I've upgraded to the newest version, and I get a lot of "Load Error" and

We're sorry, but something went wrong.

If you are the application owner check the logs for more information.

messages. There is nothing in the docker-compose logs, or anywhere else I can find, to help narrow down the error.

Here are the logs

db_1 |

db_1 | PostgreSQL Database directory appears to contain a database; Skipping initialization

db_1 |

db_1 | 2022-04-25 13:42:10.723 UTC [1] LOG: starting PostgreSQL 13.6 (Debian 13.6-1.pgdg110+1) on x86_64-pc-linux-gnu, compiled by gcc (Debian 10.2.1-6) 10.2.1 20210110, 64-bit

db_1 | 2022-04-25 13:42:10.723 UTC [1] LOG: listening on IPv4 address "0.0.0.0", port 5432

db_1 | 2022-04-25 13:42:10.723 UTC [1] LOG: listening on IPv6 address "::", port 5432

db_1 | 2022-04-25 13:42:10.725 UTC [1] LOG: listening on Unix socket "/var/run/postgresql/.s.PGSQL.5432"

db_1 | 2022-04-25 13:42:10.730 UTC [25] LOG: database system was shut down at 2022-04-25 13:42:09 UTC

db_1 | 2022-04-25 13:42:10.736 UTC [1] LOG: database system is ready to accept connections

db_1 | 2022-04-26 18:35:34.520 UTC [29] LOG: using stale statistics instead of current ones because stats collector is not responding

db_1 | 2022-04-29 21:25:57.655 UTC [29] LOG: using stale statistics instead of current ones because stats collector is not responding

db_1 | 2022-04-30 04:51:07.800 UTC [29] LOG: using stale statistics instead of current ones because stats collector is not responding

app_1 | Preparing database...

app_1 | Launching application...

app_1 | 18:26:07 rails.1 | started with pid 10

app_1 | 18:26:07 worker.1 | started with pid 11

app_1 | 18:26:08 worker.1 | [Worker(host:2486345d61a3 pid:11)] Starting job worker

app_1 | 18:26:08 worker.1 | [Worker(host:2486345d61a3 pid:11)] Error while reserving job: connection to server at "172.18.0.9", port 5432 failed: Connection refused

app_1 | 18:26:08 worker.1 | Is the server running on that host and accepting TCP/IP connections?

app_1 | 18:26:08 rails.1 | => Booting Puma

app_1 | 18:26:08 rails.1 | => Rails 6.1.5 application starting in production

app_1 | 18:26:08 rails.1 | => Run `bin/rails server --help` for more startup options

app_1 | 18:26:09 rails.1 | [10] Puma starting in cluster mode...

app_1 | 18:26:09 rails.1 | [10] * Puma version: 5.6.4 (ruby 3.0.4-p208) ("Birdie's Version")

app_1 | 18:26:09 rails.1 | [10] * Min threads: 4

app_1 | 18:26:09 rails.1 | [10] * Max threads: 16

app_1 | 18:26:09 rails.1 | [10] * Environment: production

app_1 | 18:26:09 rails.1 | [10] * Master PID: 10

app_1 | 18:26:09 rails.1 | [10] * Workers: 4

app_1 | 18:26:09 rails.1 | [10] * Restarts: (✔) hot (✖) phased

app_1 | 18:26:09 rails.1 | [10] * Preloading application

app_1 | 18:26:09 rails.1 | [10] * Listening on http://0.0.0.0:3214

app_1 | 18:26:09 rails.1 | [10] Use Ctrl-C to stop

app_1 | 18:26:09 rails.1 | [10] - Worker 0 (PID: 16) booted in 0.01s, phase: 0

app_1 | 18:26:09 rails.1 | [10] - Worker 1 (PID: 18) booted in 0.01s, phase: 0

app_1 | 18:26:09 rails.1 | [10] - Worker 3 (PID: 28) booted in 0.0s, phase: 0

app_1 | 18:26:09 rails.1 | [10] - Worker 2 (PID: 24) booted in 0.01s, phase: 0

app_1 | 18:26:13 worker.1 | [Worker(host:2486345d61a3 pid:11)] Error while reserving job: connection to server at "172.18.0.9", port 5432 failed: Connection refused

app_1 | 18:26:13 worker.1 | Is the server running on that host and accepting TCP/IP connections?

app_1 | 20:30:09 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job LibraryScanJob [2c62997d-555f-47f3-9184-8164c3a20bde] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Library/1"}] (id=29) (queue=van_dam_production_default) RUNNING

app_1 | 20:30:09 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job LibraryScanJob [2c62997d-555f-47f3-9184-8164c3a20bde] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Library/1"}] (id=29) (queue=van_dam_production_default) COMPLETED after 0.1044

app_1 | 20:30:09 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [f2934e01-a587-4db1-a99f-8683cb96e5cb] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/6"}] (id=30) (queue=van_dam_production_default) RUNNING

app_1 | 20:30:09 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [f2934e01-a587-4db1-a99f-8683cb96e5cb] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/6"}] (id=30) (queue=van_dam_production_default) COMPLETED after 0.0902

app_1 | 20:30:09 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [01057241-8dd9-491b-985e-f1020a9ba158] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/3"}] (id=31) (queue=van_dam_production_default) RUNNING

app_1 | 20:30:09 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [01057241-8dd9-491b-985e-f1020a9ba158] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/3"}] (id=31) (queue=van_dam_production_default) COMPLETED after 0.0279

app_1 | 20:30:09 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [9e2c3e3b-c0b1-4de6-be64-4f04122c1956] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/1"}] (id=32) (queue=van_dam_production_default) RUNNING

app_1 | 20:30:09 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [9e2c3e3b-c0b1-4de6-be64-4f04122c1956] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/1"}] (id=32) (queue=van_dam_production_default) COMPLETED after 0.0387

app_1 | 20:30:09 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [146411d4-0b78-48fd-935f-286a6566f2ae] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/4"}] (id=33) (queue=van_dam_production_default) RUNNING

app_1 | 20:30:09 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [146411d4-0b78-48fd-935f-286a6566f2ae] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/4"}] (id=33) (queue=van_dam_production_default) COMPLETED after 0.0614

app_1 | 20:30:09 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [df25ae40-9b93-41af-a9fa-dbfe42b245b9] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/5"}] (id=34) (queue=van_dam_production_default) RUNNING

app_1 | 20:30:09 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [df25ae40-9b93-41af-a9fa-dbfe42b245b9] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/5"}] (id=34) (queue=van_dam_production_default) COMPLETED after 0.0651

app_1 | 20:30:09 worker.1 | [Worker(host:2486345d61a3 pid:11)] 6 jobs processed at 13.8592 j/s, 0 failed

app_1 | 20:30:34 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job LibraryScanJob [ca798e24-cb81-4097-9b61-90b3a1a1e49e] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Library/1"}] (id=35) (queue=van_dam_production_default) RUNNING

app_1 | 20:30:34 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job LibraryScanJob [ca798e24-cb81-4097-9b61-90b3a1a1e49e] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Library/1"}] (id=35) (queue=van_dam_production_default) COMPLETED after 0.0453

app_1 | 20:30:34 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [02851c3b-3ad3-448e-8078-2290361d88b8] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/6"}] (id=36) (queue=van_dam_production_default) RUNNING

app_1 | 20:30:34 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [02851c3b-3ad3-448e-8078-2290361d88b8] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/6"}] (id=36) (queue=van_dam_production_default) COMPLETED after 0.0185

app_1 | 20:30:34 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [ed8b5aa4-ead3-4d27-beb3-0b55baf722f0] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/3"}] (id=37) (queue=van_dam_production_default) RUNNING

app_1 | 20:30:34 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [ed8b5aa4-ead3-4d27-beb3-0b55baf722f0] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/3"}] (id=37) (queue=van_dam_production_default) COMPLETED after 0.0176

app_1 | 20:30:34 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [3b966e35-f7c7-4f56-8acb-62050810404d] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/1"}] (id=38) (queue=van_dam_production_default) RUNNING

app_1 | 20:30:34 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [3b966e35-f7c7-4f56-8acb-62050810404d] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/1"}] (id=38) (queue=van_dam_production_default) COMPLETED after 0.0224

app_1 | 20:30:34 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [67bdccb6-a0a2-4022-ada9-19e802e7cb68] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/4"}] (id=39) (queue=van_dam_production_default) RUNNING

app_1 | 20:30:34 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [67bdccb6-a0a2-4022-ada9-19e802e7cb68] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/4"}] (id=39) (queue=van_dam_production_default) COMPLETED after 0.0166

app_1 | 20:30:34 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [1f06a200-d561-48e6-8dc6-ba6fcb5bfbc3] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/5"}] (id=40) (queue=van_dam_production_default) RUNNING

app_1 | 20:30:34 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [1f06a200-d561-48e6-8dc6-ba6fcb5bfbc3] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/5"}] (id=40) (queue=van_dam_production_default) COMPLETED after 0.0161

app_1 | 20:30:34 worker.1 | [Worker(host:2486345d61a3 pid:11)] 6 jobs processed at 37.3449 j/s, 0 failed

app_1 | 20:46:11 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job LibraryScanJob [41c84a44-8313-4b05-a3fe-b960cc289b9c] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Library/1"}] (id=41) (queue=van_dam_production_default) RUNNING

app_1 | 20:46:11 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job LibraryScanJob [41c84a44-8313-4b05-a3fe-b960cc289b9c] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Library/1"}] (id=41) (queue=van_dam_production_default) COMPLETED after 0.0681

app_1 | 20:46:11 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [f0aa74b7-1c68-46c9-ab4a-044c450de0e2] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/6"}] (id=42) (queue=van_dam_production_default) RUNNING

app_1 | 20:46:11 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [f0aa74b7-1c68-46c9-ab4a-044c450de0e2] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/6"}] (id=42) (queue=van_dam_production_default) COMPLETED after 0.0212

app_1 | 20:46:11 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [60b954d6-d24a-4d9b-84a0-1e79e62fb014] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/3"}] (id=43) (queue=van_dam_production_default) RUNNING

app_1 | 20:46:11 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [60b954d6-d24a-4d9b-84a0-1e79e62fb014] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/3"}] (id=43) (queue=van_dam_production_default) COMPLETED after 0.0168

app_1 | 20:46:11 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [7d0e4169-dbff-49ea-be39-daf1929b1f6f] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/1"}] (id=44) (queue=van_dam_production_default) RUNNING

app_1 | 20:46:11 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [7d0e4169-dbff-49ea-be39-daf1929b1f6f] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/1"}] (id=44) (queue=van_dam_production_default) COMPLETED after 0.0204

app_1 | 20:46:11 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [f4ac1f1f-d338-4e8b-ae90-87604fbf9465] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/7"}] (id=45) (queue=van_dam_production_default) RUNNING

app_1 | 20:46:11 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [f4ac1f1f-d338-4e8b-ae90-87604fbf9465] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/7"}] (id=45) (queue=van_dam_production_default) COMPLETED after 0.0644

app_1 | 20:46:11 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [3b0e2492-e1ef-4b55-aae0-7e77600ed54f] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/4"}] (id=46) (queue=van_dam_production_default) RUNNING

app_1 | 20:46:11 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [3b0e2492-e1ef-4b55-aae0-7e77600ed54f] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/4"}] (id=46) (queue=van_dam_production_default) COMPLETED after 0.0171

app_1 | 20:46:11 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [d31e7948-eab1-41f2-8877-9a78bf8c4a6e] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/5"}] (id=47) (queue=van_dam_production_default) RUNNING

app_1 | 20:46:11 worker.1 | [Worker(host:2486345d61a3 pid:11)] Job ModelScanJob [d31e7948-eab1-41f2-8877-9a78bf8c4a6e] from DelayedJob(van_dam_production_default) with arguments: [{"_aj_globalid"=>"gid://van-dam/Model/5"}] (id=47) (queue=van_dam_production_default) COMPLETED after 0.0203

app_1 | 20:46:11 worker.1 | [Worker(host:2486345d61a3 pid:11)] 7 jobs processed at 27.4656 j/s, 0 failed

redis_1 | 1:C 27 Apr 2022 22:57:08.647 # oO0OoO0OoO0Oo Redis is starting oO0OoO0OoO0Oo

redis_1 | 1:C 27 Apr 2022 22:57:08.647 # Redis version=6.2.7, bits=64, commit=00000000, modified=0, pid=1, just started

redis_1 | 1:C 27 Apr 2022 22:57:08.647 # Warning: no config file specified, using the default config. In order to specify a config file use redis-server /path/to/redis.conf

redis_1 | 1:M 27 Apr 2022 22:57:08.648 * monotonic clock: POSIX clock_gettime

redis_1 | 1:M 27 Apr 2022 22:57:08.648 # A key '__redis__compare_helper' was added to Lua globals which is not on the globals allow list nor listed on the deny list.

redis_1 | 1:M 27 Apr 2022 22:57:08.648 * Running mode=standalone, port=6379.

redis_1 | 1:M 27 Apr 2022 22:57:08.648 # Server initialized

redis_1 | 1:M 27 Apr 2022 22:57:08.648 # WARNING overcommit_memory is set to 0! Background save may fail under low memory condition. To fix this issue add 'vm.overcommit_memory = 1' to /etc/sysctl.conf and then reboot or run the command 'sysctl vm.overcommit_memory=1' for this to take effect.

redis_1 | 1:M 27 Apr 2022 22:57:08.649 * Ready to accept connections

That's very odd. Do any pages load, or do they all error? The most obvious explanation is that the Postgres server isn't running correctly, but the worker seems to be accessing it OK. What do you see in `docker ps`?

root@server:~/docker-compose/vandam# docker ps | grep vandam

d18d60e42122 ghcr.io/floppy/van_dam:latest "bin/docker-entrypoi…" 15 hours ago Up 15 hours 3214/tcp vandam_app_1

b82202338283 redis:6 "docker-entrypoint.s…" 5 days ago Up 5 days 6379/tcp vandam_redis_1

92713db59974 postgres:13 "docker-entrypoint.s…" 7 days ago Up 7 days 5432/tcp vandam_db_1

Strange. What happens if you run `docker-compose down` and then `docker-compose up` again? I wonder if something has somehow forgotten how to find Postgres.

root@tigger:~/docker-compose/vandam# docker-compose down; docker-compose up -d

Stopping vandam_app_1 ... done

Stopping vandam_redis_1 ... done

Stopping vandam_db_1 ... done

Removing vandam_app_1 ... done

Removing vandam_redis_1 ... done

Removing vandam_db_1 ... done

Network myNetwork is external, skipping

Creating vandam_redis_1 ... done

Creating vandam_db_1 ... done

Creating vandam_app_1 ... done

root@tigger:~/docker-compose/vandam# docker-compose logs

Attaching to vandam_app_1, vandam_db_1, vandam_redis_1

db_1 |

db_1 | PostgreSQL Database directory appears to contain a database; Skipping initialization

db_1 |

db_1 | 2022-05-03 11:23:45.698 UTC [1] LOG: starting PostgreSQL 13.6 (Debian 13.6-1.pgdg110+1) on x86_64-pc-linux-gnu, compiled by gcc (Debian 10.2.1-6) 10.2.1 20210110, 64-bit

db_1 | 2022-05-03 11:23:45.699 UTC [1] LOG: listening on IPv4 address "0.0.0.0", port 5432

db_1 | 2022-05-03 11:23:45.699 UTC [1] LOG: listening on IPv6 address "::", port 5432

db_1 | 2022-05-03 11:23:45.700 UTC [1] LOG: listening on Unix socket "/var/run/postgresql/.s.PGSQL.5432"

db_1 | 2022-05-03 11:23:45.705 UTC [26] LOG: database system was shut down at 2022-05-03 11:23:44 UTC

db_1 | 2022-05-03 11:23:45.712 UTC [1] LOG: database system is ready to accept connections

app_1 | Preparing database...

app_1 | rails aborted!

app_1 | ActiveRecord::ConnectionNotEstablished: connection to server at "172.18.0.9", port 5432 failed: Connection refused

app_1 | Is the server running on that host and accepting TCP/IP connections?

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/postgresql_adapter.rb:83:in `rescue in new_client'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/postgresql_adapter.rb:77:in `new_client'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/postgresql_adapter.rb:37:in `postgresql_connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/abstract/connection_pool.rb:882:in `public_send'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/abstract/connection_pool.rb:882:in `new_connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/abstract/connection_pool.rb:926:in `checkout_new_connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/abstract/connection_pool.rb:905:in `try_to_checkout_new_connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/abstract/connection_pool.rb:866:in `acquire_connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/abstract/connection_pool.rb:588:in `checkout'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/abstract/connection_pool.rb:428:in `connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/abstract/connection_pool.rb:1128:in `retrieve_connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_handling.rb:327:in `retrieve_connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_handling.rb:283:in `connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/tasks/database_tasks.rb:237:in `migrate'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/railties/databases.rake:363:in `block (3 levels) in <main>'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/railties/databases.rake:359:in `each'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/railties/databases.rake:359:in `block (2 levels) in <main>'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/task.rb:281:in `block in execute'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/task.rb:281:in `each'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/task.rb:281:in `execute'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/task.rb:219:in `block in invoke_with_call_chain'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/task.rb:199:in `synchronize'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/task.rb:199:in `invoke_with_call_chain'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/task.rb:188:in `invoke'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/application.rb:160:in `invoke_task'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/application.rb:116:in `block (2 levels) in top_level'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/application.rb:116:in `each'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/application.rb:116:in `block in top_level'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/application.rb:125:in `run_with_threads'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/application.rb:110:in `top_level'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/railties-6.1.5/lib/rails/commands/rake/rake_command.rb:24:in `block (2 levels) in perform'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/application.rb:186:in `standard_exception_handling'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/railties-6.1.5/lib/rails/commands/rake/rake_command.rb:24:in `block in perform'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/rake_module.rb:59:in `with_application'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/railties-6.1.5/lib/rails/commands/rake/rake_command.rb:18:in `perform'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/railties-6.1.5/lib/rails/command.rb:50:in `invoke'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/railties-6.1.5/lib/rails/commands.rb:18:in `<main>'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/bootsnap-1.11.1/lib/bootsnap/load_path_cache/core_ext/kernel_require.rb:30:in `require'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/bootsnap-1.11.1/lib/bootsnap/load_path_cache/core_ext/kernel_require.rb:30:in `require'

app_1 |

app_1 | Caused by:

app_1 | PG::ConnectionBad: connection to server at "172.18.0.9", port 5432 failed: Connection refused

app_1 | Is the server running on that host and accepting TCP/IP connections?

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/pg-1.3.5/lib/pg/connection.rb:637:in `async_connect_or_reset'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/pg-1.3.5/lib/pg/connection.rb:707:in `new'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/pg-1.3.5/lib/pg.rb:69:in `connect'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/postgresql_adapter.rb:78:in `new_client'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/postgresql_adapter.rb:37:in `postgresql_connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/abstract/connection_pool.rb:882:in `public_send'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/abstract/connection_pool.rb:882:in `new_connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/abstract/connection_pool.rb:926:in `checkout_new_connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/abstract/connection_pool.rb:905:in `try_to_checkout_new_connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/abstract/connection_pool.rb:866:in `acquire_connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/abstract/connection_pool.rb:588:in `checkout'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/abstract/connection_pool.rb:428:in `connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_adapters/abstract/connection_pool.rb:1128:in `retrieve_connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_handling.rb:327:in `retrieve_connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/connection_handling.rb:283:in `connection'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/tasks/database_tasks.rb:237:in `migrate'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/railties/databases.rake:363:in `block (3 levels) in <main>'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/railties/databases.rake:359:in `each'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/activerecord-6.1.5/lib/active_record/railties/databases.rake:359:in `block (2 levels) in <main>'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/task.rb:281:in `block in execute'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/task.rb:281:in `each'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/task.rb:281:in `execute'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/task.rb:219:in `block in invoke_with_call_chain'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/task.rb:199:in `synchronize'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/task.rb:199:in `invoke_with_call_chain'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/task.rb:188:in `invoke'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/application.rb:160:in `invoke_task'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/application.rb:116:in `block (2 levels) in top_level'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/application.rb:116:in `each'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/application.rb:116:in `block in top_level'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/application.rb:125:in `run_with_threads'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/application.rb:110:in `top_level'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/railties-6.1.5/lib/rails/commands/rake/rake_command.rb:24:in `block (2 levels) in perform'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/application.rb:186:in `standard_exception_handling'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/railties-6.1.5/lib/rails/commands/rake/rake_command.rb:24:in `block in perform'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/rake-13.0.6/lib/rake/rake_module.rb:59:in `with_application'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/railties-6.1.5/lib/rails/commands/rake/rake_command.rb:18:in `perform'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/railties-6.1.5/lib/rails/command.rb:50:in `invoke'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/railties-6.1.5/lib/rails/commands.rb:18:in `<main>'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/bootsnap-1.11.1/lib/bootsnap/load_path_cache/core_ext/kernel_require.rb:30:in `require'

app_1 | /usr/src/app/vendor/bundle/ruby/3.0.0/gems/bootsnap-1.11.1/lib/bootsnap/load_path_cache/core_ext/kernel_require.rb:30:in `require'

app_1 | Tasks: TOP => db:prepare

app_1 | (See full trace by running task with --trace)

redis_1 | 1:C 03 May 2022 11:23:45.593 # oO0OoO0OoO0Oo Redis is starting oO0OoO0OoO0Oo

redis_1 | 1:C 03 May 2022 11:23:45.593 # Redis version=6.2.7, bits=64, commit=00000000, modified=0, pid=1, just started

redis_1 | 1:C 03 May 2022 11:23:45.593 # Warning: no config file specified, using the default config. In order to specify a config file use redis-server /path/to/redis.conf

redis_1 | 1:M 03 May 2022 11:23:45.594 * monotonic clock: POSIX clock_gettime

redis_1 | 1:M 03 May 2022 11:23:45.595 # A key '__redis__compare_helper' was added to Lua globals which is not on the globals allow list nor listed on the deny list.

redis_1 | 1:M 03 May 2022 11:23:45.595 * Running mode=standalone, port=6379.

redis_1 | 1:M 03 May 2022 11:23:45.595 # Server initialized

redis_1 | 1:M 03 May 2022 11:23:45.595 # WARNING overcommit_memory is set to 0! Background save may fail under low memory condition. To fix this issue add 'vm.overcommit_memory = 1' to /etc/sysctl.conf and then reboot or run the command 'sysctl vm.overcommit_memory=1' for this to take effect.

redis_1 | 1:M 03 May 2022 11:23:45.595 * Ready to accept connections

It doesn't seem to come back up. At least, the web interface isn't responding.

Interesting, it's definitely failing to connect to Postgres. What does your docker-compose.yml look like? And is the database connection string correct? I've no idea why this would have changed with an update to the app container, though.
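One possibility, not confirmed anywhere in this thread, is a start-up race: the trace above ends with `db:prepare` failing on `Connection refused`, and Compose's `depends_on` only controls the order in which containers are started, not whether Postgres is already accepting connections. Below is a minimal Ruby sketch of a "wait until the port is listening" check using only the standard `socket` library; `wait_for_port` and its `deadline`/`interval` parameters are hypothetical names, not part of the van_dam image or Rails.

```ruby
require "socket"

# Hypothetical helper (not part of the van_dam image or Rails): poll until
# host:port accepts a TCP connection. Returns true as soon as a connection
# succeeds, or false once `deadline` seconds have passed without one.
def wait_for_port(host, port, deadline: 30, interval: 1)
  give_up_at = Process.clock_gettime(Process::CLOCK_MONOTONIC) + deadline
  loop do
    begin
      # connect_timeout bounds each attempt; the socket is closed right away
      # because only the successful handshake matters here.
      Socket.tcp(host, port, connect_timeout: interval).close
      return true
    rescue SystemCallError, SocketError
      # Refused, timed out, unreachable, or the name doesn't resolve (yet).
    end
    return false if Process.clock_gettime(Process::CLOCK_MONOTONIC) >= give_up_at
    sleep interval
  end
end
```

To try it while the app container is up, append a line such as `exit(wait_for_port("db", 5432))` to the file and pipe it in with `docker exec -i vandam_app_1 ruby < wait_for_port.rb` (Ruby reads the script from standard input when no file is given). It only reports whether `db:5432` becomes reachable; it doesn't change how the app starts.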

Here is my YAML file; I'm using Traefik as a reverse proxy:

version: "3"
services:
  app:
    image: ghcr.io/floppy/van_dam:latest
    volumes:
      - /srv/vandam/libraries:/libraries
    environment:
      DATABASE_URL: postgresql://van_dam:password@db/van_dam?pool=5
      SECRET_KEY_BASE: a_nice_long_random_string
      GRID_SIZE: 260
    depends_on:
      - db
      - redis
    labels:
      - "traefik.enable=true"
      - "traefik.http.routers.vandam.entrypoints=http"
      - "traefik.http.routers.vandam.rule=Host(`vandam.example.com`)"
      - "traefik.http.middlewares.vandam-https-redirect.redirectscheme.scheme=https"
      - "traefik.http.routers.vandam.middlewares=vandam-https-redirect"
      - "traefik.http.routers.vandam-secure.entrypoints=https"
      - "traefik.http.routers.vandam-secure.rule=Host(`vandam.example.com`)"
      - "traefik.http.routers.vandam-secure.tls=true"
      - "traefik.http.routers.vandam-secure.tls.certresolver=http"
      - "traefik.http.routers.vandam-secure.service=vandam"
      - "traefik.http.services.vandam.loadbalancer.server.port=3214"
      - "traefik.docker.network=myNetwork"
  db:
    image: postgres:13
    volumes:
      - /srv/vandam/postgress:/var/lib/postgresql/data
    environment:
      POSTGRES_USER: van_dam
      POSTGRES_PASSWORD: password
    restart: on-failure
  redis:
    image: redis:6
    restart: on-failure
networks:
  default:
    external:
      name: myNetwork
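To check the "is the connection string correct?" question mechanically, the `DATABASE_URL` from the compose file can be split into its parts. This is a minimal Ruby sketch using the standard `uri` library; `describe_database_url` is a hypothetical helper that only approximates how a Postgres client reads the URL (it assumes the default port 5432 when none is given), and it leaves the password out so the result is safe to paste into an issue.

```ruby
require "uri"

# Hypothetical helper: break a DATABASE_URL into the pieces a Postgres client
# would connect with. The password is deliberately not included.
def describe_database_url(url)
  uri = URI.parse(url)
  # Query parameters such as ?pool=5 are connection options, not part of the host.
  params = uri.query ? URI.decode_www_form(uri.query).to_h : {}
  {
    scheme: uri.scheme,
    host: uri.host,
    port: uri.port || 5432, # URI has no default port for "postgresql"
    user: uri.user,
    database: uri.path.delete_prefix("/"),
    pool: params["pool"]&.to_i
  }
end
```

For the URL in this compose file it reports host `db`, port 5432, user `van_dam`, database `van_dam` and pool 5. That lines up with the `db` service: the host is the Compose service name, and the official postgres image creates a database named after `POSTGRES_USER` when `POSTGRES_DB` isn't set.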

I wonder if it's a name-resolution issue caused by the external `myNetwork` network and the Traefik config. I'm writing this from memory so it might be wrong, but try `docker exec -it {app_container_name} ping db` and see whether the app container can resolve the `db` hostname properly.

After the restart, the app doesn't stay up long enough to try this, so I'm going to try it without the networks section.

root@tigger:~/docker-compose/vandam# docker exec -it vandam_app_1 ping db

PING db (172.18.0.9): 56 data bytes

64 bytes from 172.18.0.9: seq=0 ttl=64 time=0.093 ms

64 bytes from 172.18.0.9: seq=1 ttl=64 time=0.130 ms

64 bytes from 172.18.0.9: seq=2 ttl=64 time=0.124 ms

64 bytes from 172.18.0.9: seq=3 ttl=64 time=0.104 ms

64 bytes from 172.18.0.9: seq=4 ttl=64 time=0.116 ms

--- db ping statistics ---

5 packets transmitted, 5 packets received, 0% packet loss

round-trip min/avg/max = 0.093/0.113/0.130 ms

It does connect, but it still sometimes gives errors like the ones described in the first post.
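A successful `ping` shows that `db` resolves and the host answers ICMP, but not that Postgres is listening on port 5432, which is what the `Connection refused` errors are about. The Ruby sketch below (hypothetical helpers `resolve_all` and `probe`, standard library only) lists every address `db` resolves to and tries a TCP connection to each one. If `db` resolves to more than one address on the shared `myNetwork` network, another container may also be answering to that name, which would fit errors that come and go; treat that as a hypothesis to check, not a confirmed cause.

```ruby
require "socket"

# Hypothetical helper: every IP address a hostname resolves to. On a shared
# Docker network, more than one address for "db" would mean more than one
# container is answering to that name.
def resolve_all(host, port = 5432)
  Addrinfo.getaddrinfo(host, port, nil, :STREAM).map(&:ip_address).uniq
end

# Hypothetical helper: attempt one TCP connection and classify the result.
# Unlike ping (ICMP), this shows whether anything listens on the port.
def probe(ip, port = 5432, timeout: 3)
  Socket.tcp(ip, port, connect_timeout: timeout).close
  :open
rescue Errno::ECONNREFUSED
  :refused    # host reachable, but nothing listening on that port
rescue Errno::ETIMEDOUT
  :timeout    # no answer within `timeout` seconds
rescue SocketError
  :unresolved # the name did not resolve
rescue SystemCallError
  :error      # other OS-level failures, e.g. host unreachable
end
```

To run it in the app container, append `resolve_all("db").each { |ip| puts "#{ip}: #{probe(ip)}" }` to the file and pipe it into `docker exec -i vandam_app_1 ruby`. A single address that reports `open` would point away from name resolution.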

Very weird. I'll keep thinking. I hope if anyone else is experiencing this, they'll add something here too.

I'm seeing a similar issue.

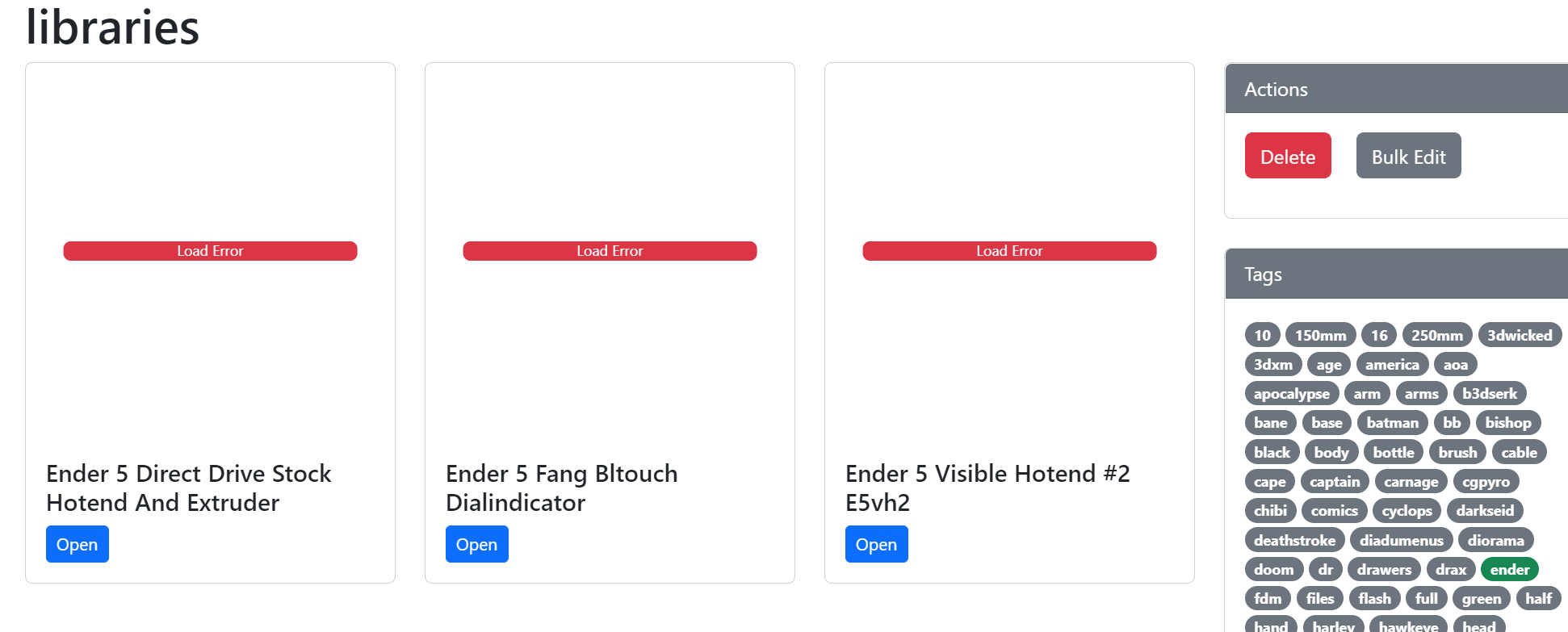

This is what the page looks like.

What happens if you go into the details for a single model? Same thing?

Yes. Could it be the folder structure? My subfolders have varying depths; some have models/files in further subfolders.

That should be OK; mine are the same. Anything useful in the logs?

Not that I can see :(

@legomannetje, did you get anywhere with this? Did it start working at all?

I hadn't looked at it again, since I couldn't fix the issue. I've just pulled the latest image, and it seems to work now!

Fantastic news, thanks for letting me know! @nabeelmoeen, if your issue is still happening, can you open another ticket, please? I think it has a different cause.

@legomannetje, we've fixed a few Docker things, including some networking issues, so it might have been related to that. 👍🏻