PNDMScheduler has a considerable time cost when running StableDiffusionPipeline in diffusers 0.4.1

Describe the bug

Environment

GPU: A10, CUDA 11.6, cuDNN 8.4.0, Torch 1.12.1, diffusers 0.4.1

Phenomenon

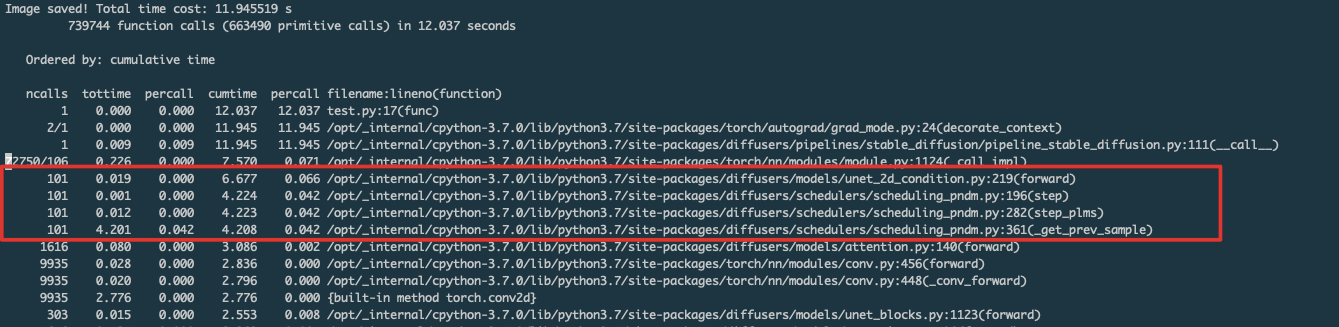

When I ran StableDiffusionPipeline with fp16 precision, I found that the time cost of PNDMScheduler increased substantially after I upgraded diffusers to 0.4.1: it takes about 4.2 seconds, while the UNet takes 6.6 seconds. In diffusers 0.3.0 the time cost of PNDMScheduler was almost negligible. I wonder what changed in the diffusers upgrade.
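When a profile attributes seconds to one component such as `PNDMScheduler`, it can help to time that component directly. Below is a minimal, framework-agnostic sketch: it wraps a callable (for example the scheduler's `step` method or the UNet's `forward`, which is an assumption about where to look) and accumulates wall-clock time per name. CUDA kernels are launched asynchronously, so a CPU-side timer on GPU can charge one component for work queued by another; the optional `sync` hook is where you would pass `torch.cuda.synchronize`. `ComponentTimer` is a hypothetical helper, not part of diffusers.

```python
import time
from collections import defaultdict
from functools import wraps


class ComponentTimer:
    """Accumulate wall-clock time spent inside wrapped callables, keyed by name."""

    def __init__(self, sync=None):
        # Optional zero-argument callable run around each timed call,
        # e.g. torch.cuda.synchronize, so asynchronous GPU work is waited for.
        self.sync = sync or (lambda: None)
        self.totals = defaultdict(float)
        self.calls = defaultdict(int)

    def wrap(self, name, fn):
        @wraps(fn)
        def timed(*args, **kwargs):
            self.sync()  # drain work queued before this call so it is not charged here
            start = time.perf_counter()
            try:
                return fn(*args, **kwargs)
            finally:
                self.sync()  # wait for work launched by this call
                self.totals[name] += time.perf_counter() - start
                self.calls[name] += 1

        return timed

    def report(self):
        # Print components from most to least total time.
        for name, total in sorted(self.totals.items(), key=lambda kv: -kv[1]):
            print(f"{name}: {total:.3f} s over {self.calls[name]} calls")


# Hypothetical usage with a loaded pipeline (not run here):
# timer = ComponentTimer(sync=torch.cuda.synchronize)
# pipe.unet.forward = timer.wrap("unet", pipe.unet.forward)
# pipe.scheduler.step = timer.wrap("scheduler.step", pipe.scheduler.step)
# image = pipe(prompt, num_inference_steps=100).images[0]
# timer.report()
```

Synchronizing around every call slows the run down somewhat, but it makes the per-component totals reflect the work each component actually launched.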

Profile

Code

```python
from diffusers import StableDiffusionPipeline
import time

import torch

pipe = StableDiffusionPipeline.from_pretrained(
    "CompVis/stable-diffusion-v1-4",
    revision="fp16",
    torch_dtype=torch.float16,
    use_auth_token=True,
)
pipe = pipe.to("cuda")

prompt = "a photo of an astronaut riding a horse on mars"

start = time.time()
image = pipe(prompt, num_inference_steps=100).images[0]
time_cost = time.time() - start

image.save("astronaut_rides_horse.png")
print(f"Image saved! Total time cost: {time_cost:2f} s")
```


System Info

- `diffusers` version: 0.4.1
- Platform: Linux-4.14.0_1-0-0-43-x86_64-with-centos-7.9.2009-Core
- Python version: 3.7.0
- PyTorch version (GPU?): 1.12.1+cu116 (True)
- Huggingface_hub version: 0.10.0
- Transformers version: 4.22.2
- Using GPU in script?:
- Using distributed or parallel set-up in script?:

Hey @joey12300,

I cannot really confirm this. I'm running the following script:

```python
#!/usr/bin/env python3
from diffusers import StableDiffusionPipeline
import diffusers
import time

import torch

pipe = StableDiffusionPipeline.from_pretrained(
    "CompVis/stable-diffusion-v1-4",
    revision="fp16",
    torch_dtype=torch.float16,
)
pipe = pipe.to("cuda")

prompt = "a photo of an astronaut riding a horse on mars"

start = time.time()
if diffusers.__version__ == "0.3.0":
    with torch.autocast("cuda"):
        image = pipe(prompt, num_inference_steps=100).images[0]
else:
    image = pipe(prompt, num_inference_steps=100).images[0]
time_cost = time.time() - start

image.save("astronaut_rides_horse.png")
print(f"Image saved! Total time cost: {time_cost:2f} s")
```
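The script picks autocast by comparing `diffusers.__version__` to the exact string "0.3.0". If you want the same switch to cover other releases, one sketch is to parse the version into a tuple of integers and return either autocast or a no-op context. Treating 0.4.0 as the cut-off is an assumption (the thread only compares 0.3.0 and 0.4.1), and `parse_version` and `fp16_context` are hypothetical helpers that only handle plain `MAJOR.MINOR.PATCH` strings.

```python
import contextlib


def parse_version(version: str) -> tuple:
    """Parse a plain 'MAJOR.MINOR.PATCH' string into a tuple of ints.

    Assumption: no suffixes such as '0.4.0.dev0' or '1.12.1+cu116';
    a full parser like packaging.version would be needed for those.
    """
    return tuple(int(part) for part in version.split("."))


def fp16_context(diffusers_version: str, make_autocast):
    """Return an autocast context for releases before 0.4.0 (assumed cut-off), else a no-op.

    `make_autocast` is a zero-argument callable such as
    `lambda: torch.autocast("cuda")`, so this helper does not import torch itself.
    """
    if parse_version(diffusers_version) < (0, 4, 0):
        return make_autocast()
    return contextlib.nullcontext()


# Hypothetical usage inside the script above (not run here):
# with fp16_context(diffusers.__version__, lambda: torch.autocast("cuda")):
#     image = pipe(prompt, num_inference_steps=100).images[0]
```

Comparing integer tuples avoids the string-ordering pitfall where "0.10.0" sorts before "0.4.1".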

Note that in version 0.3.0 it was not yet possible to run fp16 without autocast. I'm getting the following result for 0.3.0:

```
Image saved! Total time cost: 10.437387 s
```

and for 0.4.1:

```
Image saved! Total time cost: 8.903677 s
```

This shows that 0.4.1 is noticeably faster end to end, by about 1.5 s (roughly 15%). I'm using an A100 GPU.
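Both scripts time a single call, so each number may include one-time costs (for example lazy library initialization and memory-allocator warm-up) as well as run-to-run noise. A steadier comparison, sketched below, runs a few untimed warm-up calls and then reports the median of several timed runs. `benchmark` and its parameters are hypothetical; on GPU, pass `torch.cuda.synchronize` as `sync` so each sample waits for asynchronous kernels to finish.

```python
import statistics
import time


def benchmark(fn, warmup=1, repeats=5, sync=None):
    """Call fn() `warmup` times untimed, then time `repeats` calls.

    Returns (median_seconds, list_of_samples).
    """
    if repeats < 1:
        raise ValueError("repeats must be at least 1")
    sync = sync or (lambda: None)
    for _ in range(warmup):
        fn()
    sync()  # make sure warm-up work is finished before timing starts
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        sync()  # wait for any asynchronous device work launched by fn
        samples.append(time.perf_counter() - start)
    return statistics.median(samples), samples


# Hypothetical usage with the pipeline from the scripts above (not run here):
# median, samples = benchmark(
#     lambda: pipe(prompt, num_inference_steps=100).images[0],
#     warmup=1, repeats=5, sync=torch.cuda.synchronize,
# )
# print(f"median {median:.2f} s over {len(samples)} runs")
```

The median is used instead of the mean because it is less sensitive to an occasional slow run.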

This issue has been automatically marked as stale because it has not had recent activity. If you think this still needs to be addressed please comment on this thread.

Please note that issues that do not follow the contributing guidelines are likely to be ignored.