Multi-Node Training No progress on Azure

System Info

accelerate_Version: 0.12.0,

OS: Ubuntu18.04,

python version:3.8

torch version: 1.11.0

accelerate config: Root node(0) - {

"compute_environment": "LOCAL_MACHINE",

"deepspeed_config": {},

"distributed_type": "MULTI_GPU",

"downcast_bf16": false,

"fsdp_config": {},

"machine_rank": 0,

"main_process_ip": "20.169.144.69",

"main_process_port": 46585,

"main_training_function": "main",

"mixed_precision": "no",

"num_machines": 2,

"num_processes": 4,

"rdzv_backend": "static",

"use_cpu": false

}

Node 1:{

"compute_environment": "LOCAL_MACHINE",

"deepspeed_config": {},

"distributed_type": "MULTI_GPU",

"downcast_bf16": false,

"fsdp_config": {},

"machine_rank": 1,

"main_process_ip": "20.169.144.69",

"main_process_port": 51731,

"main_training_function": "main",

"mixed_precision": "no",

"num_machines": 2,

"num_processes": 4,

"rdzv_backend": "static",

"use_cpu": false

}

Information

- [ ] The official example scripts

- [X] My own modified scripts

Tasks

- [ ] One of the scripts in the examples/ folder of Accelerate or an officially supported `no_trainer` script in the `examples` folder of the `transformers` repo (such as `run_no_trainer_glue.py`)

- [ ] My own task or dataset (give details below)

Reproduction

Use two azure VMs for multi-node training

Expected behavior

Both machines were expected to start training and either communicate with each other to sync or raise an error if that failed, but training did not proceed on either node. I tried using NCCL_DEBUG=INFO to check for network issues but did not get any output. I'm not sure what I could be missing, as I followed the suggestions in previously closed multi-node issues such as https://github.com/huggingface/accelerate/issues/609 and https://github.com/huggingface/accelerate/issues/412

Hello @vishalghor, please use the same port on both nodes and try again with the latest accelerate main branch. Let us know if you still have this issue.
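The mismatch is visible in the two configs above: node 0 has `main_process_port` 46585 while node 1 has 51731. A minimal sketch of a consistency check follows, assuming each node's config has already been loaded into a plain dict. The helper name `check_node_configs` and the choice of which keys must match are illustrative assumptions, not part of Accelerate.

```python
# Keys assumed to need identical values on every node (an illustrative choice).
SHARED_KEYS = ("main_process_ip", "main_process_port", "num_machines", "num_processes")


def check_node_configs(configs):
    """Return a list of human-readable problems found across per-node config dicts."""
    problems = []
    for key in SHARED_KEYS:
        values = {cfg.get(key) for cfg in configs}
        if len(values) > 1:
            problems.append(f"{key} differs across nodes: {sorted(values, key=str)}")
    # Each node is expected to carry a distinct rank in 0..N-1; a missing rank shows up as -1.
    ranks = sorted(cfg.get("machine_rank", -1) for cfg in configs)
    if ranks != list(range(len(configs))):
        problems.append(f"machine_rank values should be 0..{len(configs) - 1}, got {ranks}")
    return problems


# Values copied from the two configs in the original report.
node0 = {"machine_rank": 0, "main_process_ip": "20.169.144.69",
         "main_process_port": 46585, "num_machines": 2, "num_processes": 4}
node1 = {**node0, "machine_rank": 1, "main_process_port": 51731}

for problem in check_node_configs([node0, node1]):
    print(problem)
```

Running the check on both dicts before launching would flag the port difference without waiting for a hang.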

Hi @pacman100, thank you. I tried your suggestion with the latest accelerate version (0.13.0) and set main_process_port = 'xxxx' to the same value on both nodes, but the training still doesn't move ahead. Is there a way to get more information on why it is hanging?

@pacman100 for debugging I also tried with the example scripts and I see the same thing. The training doesn't start and it exits without any error message after some time.

Hello @vishalghor , can you share the updated accelerate config? Also, prepend NCCL_DEBUG=INFO to the launch command to get logs on the NCCL issues you might be facing.
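Prepending the variable on the shell sets it only for that one command. The sketch below does the equivalent from Python, assuming the training script is called `script.py` (a placeholder) and that `accelerate` is on the PATH. `build_launch` and `launch` are hypothetical helpers, not Accelerate APIs.

```python
import os
import subprocess


def build_launch(script, extra_env=None):
    """Return (command, environment) for launching `script` with NCCL logging enabled."""
    env = dict(os.environ)
    env["NCCL_DEBUG"] = "INFO"  # note the spelling: NCCL, not NCC
    env.update(extra_env or {})
    return ["accelerate", "launch", script], env


def launch(script, extra_env=None):
    """Run the launch command; only meaningful where accelerate and the script exist."""
    cmd, env = build_launch(script, extra_env)
    return subprocess.run(cmd, env=env, check=True)


cmd, env = build_launch("script.py")
print("command:", " ".join(cmd), "| NCCL_DEBUG =", env["NCCL_DEBUG"])
```

One caveat, stated as an assumption rather than a diagnosis: if the processes hang before NCCL is initialized (for example while waiting at the initial rendezvous), no NCCL log lines would be expected, so an empty log does not by itself rule out a network problem.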

Hi @pacman100 ,

Below are the configs after setting the same port number:

Master Node(0):{

"compute_environment": "LOCAL_MACHINE",

"deepspeed_config": {},

"distributed_type": "MULTI_GPU",

"downcast_bf16": false,

"fsdp_config": {},

"machine_rank": 0,

"main_process_ip": "40.121.95.65",

"main_process_port": 8892,

"main_training_function": "main",

"mixed_precision": "no",

"num_machines": 2,

"num_processes": 4,

"rdzv_backend": "static",

"use_cpu": false

}

Slave Node(1):{

"compute_environment": "LOCAL_MACHINE",

"deepspeed_config": {},

"distributed_type": "MULTI_GPU",

"downcast_bf16": false,

"fsdp_config": {},

"machine_rank": 1,

"main_process_ip": "40.121.95.65",

"main_process_port": 8892,

"main_training_function": "main",

"mixed_precision": "no",

"num_machines": 2,

"num_processes": 4,

"rdzv_backend": "static",

"use_cpu": false

}

These were the configs when I executed the cv_example.py or nlp_example.py from accelerate repo. I used the NCCL_DEBUG flag but there were no logs or prints from NCCL.

Hello @vishalghor, I think you are not using accelerate from the main branch, because your config doesn't have the same_network param. Can you install accelerate from source with `pip install git+https://github.com/huggingface/accelerate` and try it out with same_network set to no, as shown below:

- `Accelerate` version: 0.13.0.dev0

- Platform: Linux-5.4.0-122-generic-x86_64-with-glibc2.29

- Python version: 3.8.10

- Numpy version: 1.23.2

- PyTorch version (GPU?): 1.12.1+cu116 (True)

- `Accelerate` default config:

- compute_environment: LOCAL_MACHINE

- distributed_type: MULTI_GPU

- mixed_precision: no

- use_cpu: False

- num_processes: 4

- machine_rank: 0

- num_machines: 2

- main_process_ip: 192.168.1.29

- main_process_port: 29500

- rdzv_backend: static

- same_network: False

- main_training_function: main

- deepspeed_config: {}

- fsdp_config: {}

- downcast_bf16: no

Hi @pacman100, thank you for pointing that out. I did install the latest accelerate; here is the output of pip show accelerate:

(venv_training) azureuser@lbue-mlspr-vm-prefect:~/accelerate/examples$ pip show accelerate

Name: accelerate

Version: 0.13.0.dev0

Summary: Accelerate

Home-page: https://github.com/huggingface/accelerate

Author: The HuggingFace team

Author-email: [email protected]

License: Apache

Location: /home/azureuser/venv_training/lib/python3.8/site-packages

Requires: numpy, packaging, psutil, pyyaml, rich, torch

Required-by: upscalers-pytorch
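`pip show` reports what is installed in the environment that `pip` itself belongs to, which is not necessarily the one the `accelerate` command runs from. A small sketch for checking this from inside Python, using only the standard library; `describe_install` is a hypothetical helper name:

```python
import sys
from importlib import metadata


def describe_install(package):
    """Report the interpreter in use and the version of `package` it can see (None if absent)."""
    try:
        version = metadata.version(package)
    except metadata.PackageNotFoundError:
        version = None
    return {"python": sys.executable, "prefix": sys.prefix, "version": version}


print(describe_install("accelerate"))
```

Running this with the same interpreter that backs the `accelerate` entry point shows whether that interpreter sees the expected version and virtualenv prefix.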

I think the same_network flag could be the reason, as it is True by default. But while running accelerate config I didn't get the option to select the same_network field. I tried manually adding the parameter to the config, but it threw the error below:

Traceback (most recent call last):

/home/azureuser/venv_training/bin/accelerate:8 in <module>

        5 from accelerate.commands.accelerate_cli import main
        6 if __name__ == '__main__':
        7     sys.argv[0] = re.sub(r'(-script\.pyw|\.exe)?$', '', sys.argv[0])
      ❱ 8     sys.exit(main())
        9

/home/azureuser/venv_training/lib/python3.8/site-packages/accelerate/commands/accelerate_cli.py:43 in main

       40         exit(1)
       41
       42     # Run
     ❱ 43     args.func(args)
       44
       45
       46 if __name__ == "__main__":

/home/azureuser/venv_training/lib/python3.8/site-packages/accelerate/commands/launch.py:762 in launch_command

      759     warned = []
      760     # Get the default from the config file.
      761     if args.config_file is not None or os.path.isfile(default_config_file) and not args.
    ❱ 762         defaults = load_config_from_file(args.config_file)
      763         if (
      764             not args.multi_gpu
      765             and not args.tpu

/home/azureuser/venv_training/lib/python3.8/site-packages/accelerate/commands/config/config_args.py:64 in load_config_from_file

       61                 config_class = ClusterConfig
       62             else:
       63                 config_class = SageMakerConfig
     ❱ 64             return config_class.from_yaml_file(yaml_file=config_file)
       65
       66
       67 @dataclass

/home/azureuser/venv_training/lib/python3.8/site-packages/accelerate/commands/config/config_args.py:117 in from_yaml_file

      114         if "use_cpu" not in config_dict:
      115             config_dict["use_cpu"] = False
      116
    ❱ 117         return cls(**config_dict)
      118
      119     def to_yaml_file(self, yaml_file):
      120         with open(yaml_file, "w", encoding="utf-8") as f:

TypeError: __init__() got an unexpected keyword argument 'same_network'

I'm not sure what the cause could be. From the code, the prompt for same_network should appear right after the prompt for the port number.
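The last frame of the traceback is `cls(**config_dict)`, so every key in the YAML file is passed as a keyword argument to the config dataclass, and a class that does not declare the field rejects it. A minimal sketch of that mechanism follows. `OldClusterConfig` is a hypothetical stand-in for a config class that predates `same_network`, not the real Accelerate class, and `unknown_keys` is an illustrative helper.

```python
from dataclasses import dataclass, fields


@dataclass
class OldClusterConfig:
    """Hypothetical stand-in for a config class that has no `same_network` field."""
    main_process_ip: str
    main_process_port: int
    machine_rank: int = 0


def unknown_keys(config_cls, config_dict):
    """Return the keys in `config_dict` that `config_cls` does not declare as fields."""
    known = {f.name for f in fields(config_cls)}
    return sorted(set(config_dict) - known)


config_dict = {"main_process_ip": "40.121.95.65", "main_process_port": 8892,
               "machine_rank": 0, "same_network": False}

# Lists the keys the class would reject.
print(unknown_keys(OldClusterConfig, config_dict))

# Passing the dict directly reproduces the same kind of TypeError as in the traceback.
try:
    OldClusterConfig(**config_dict)
except TypeError as err:
    print("TypeError:", err)
```

Seeing this error with a hand-edited config is therefore consistent with the launcher importing an older copy of the package than the one expected.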

Hello @pacman100, I fixed the version and same_network param issue; it was due to my virtualenv. 😅 But setting same_network didn't help with running the example scripts in accelerate. Below are the configs:

Node 0:

compute_environment: LOCAL_MACHINE

deepspeed_config: {}

distributed_type: MULTI_GPU

downcast_bf16: 'no'

fsdp_config: {}

machine_rank: 0

main_process_ip: 20.163.205.163

main_process_port: 4252

main_training_function: main

mixed_precision: 'no'

num_machines: 2

num_processes: 4

rdzv_backend: static

same_network: false

use_cpu: false

Node 1:

compute_environment: LOCAL_MACHINE

deepspeed_config: {}

distributed_type: MULTI_GPU

downcast_bf16: 'no'

fsdp_config: {}

machine_rank: 1

main_process_ip: 20.163.205.163

main_process_port: 4252

main_training_function: main

mixed_precision: 'no'

num_machines: 2

num_processes: 4

rdzv_backend: static

same_network: false

use_cpu: false

I'm using the NCCL_DEBUG flag, but it doesn't print any message that would help identify the root issue.

Hello @vishalghor, maybe go through the discussions on issue #609 and #412 and see if that helps. Are you able to ssh from one node to another?
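A successful SSH login shows that port 22 is reachable; it does not by itself show that `main_process_port` is. A minimal sketch of a TCP reachability check using only the standard library, intended to be run on the worker node while the main node is already waiting at launch (something must be listening for the connection to succeed). `can_connect` is a hypothetical helper name.

```python
import socket


def can_connect(host, port, timeout=5.0):
    """Return True if a TCP connection to (host, port) succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False


# Example, using main_process_ip and main_process_port from the node's config:
# can_connect("20.163.205.163", 4252)
```

A `False` result while the main node is listening would point toward a firewall or routing rule between the machines rather than toward Accelerate itself, though that is only one possible explanation.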

Hello @pacman100, thank you for the information. I'm able to ssh from one VM to another. I added the IP address of the VM to connect to in /etc/hosts as below:

127.0.0.1 localhost

20.x.x.x azure_3

# The following lines are desirable for IPv6 capable hosts

::1 ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

ff02::3 ip6-allhosts


I'm not sure if this is the correct way, or whether I should modify another file or create a hostfile. But if I run ssh azure_3, I'm able to connect to the other VM without any issue. To avoid auth checking, I disabled it in the /etc/ssh/ssh_config file.

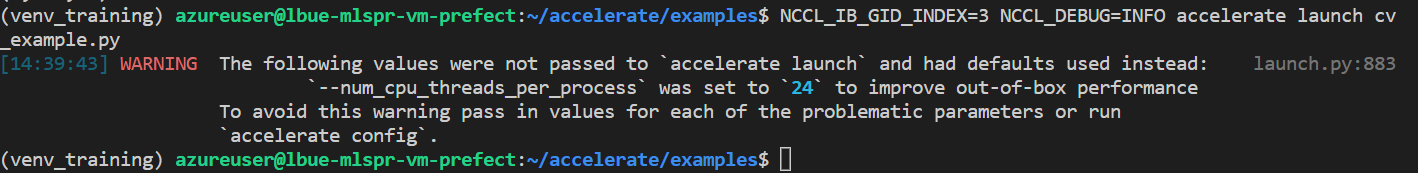

As mentioned in #609, I tried `NCCL_IB_GID_INDEX=3 NCCL_DEBUG=INFO accelerate launch script.py`, but it didn't print any message. Below is a snapshot of what happens after I execute the command.

Thanks for the clear feedback @vishalghor, I'll be looking into it this week

Thanks @muellerzr. Looking forward to your feedback on it. :)

Labeling this as "feature request" for now, since after some 1:1 debugging it seems to be related to the Azure platform, and we don't have access to Azure machines with GPUs (yet) to test this out. But we'll get them soon.