[question] Use of Dopamine with Tabular/Structured Data

Hello there,

First and foremost, thank you for open-sourcing these agents! I would like to use them in a setting where the inputs are structured/tabular data. Which of the following do you think would be the best (and/or quickest) approach?

- Fork the project to allow a custom network template and custom inputs (convolutional layers may not be needed as the first layers).

- Put all our structured data into matrix form so that the conv layers can process it (this doesn't seem right to me, as applying the same kernel across heterogeneous inputs seems odd).

Lastly, is there already something in the project that handles tabular/structured data, or do you plan to add it in a future release?

Thank you in advance for your answers.

Nicolas.

In my fork I made changes to accept flat inputs: https://github.com/pathway/dopamine/commit/e0e9d469b57ccee8db3edcc991393d11201ec823

Then you can just override `_network_template` in an agent subclass.

Thank you for your quick answer @pathway. To make 'observe' work, I also had to remove dimensions in the `_record_observation` method and comment out the `self._q_values_dict = {` section in the `_select_action_deterministic` method. Is that what I was supposed to do?

Furthermore, I tried to apply a 'flat' DQN agent to a very simple game (2 random inputs, 2 outputs, a frame stack of 1). A reward is given only if the agent chooses the action index of the highest input. It doesn't seem to converge at all, even when I tune the hyperparameters. Here is the code snippet:

```python
import numpy as np
import tensorflow as tf
from dopamine.agents.dqn.dqn_agent import DQNAgent

slim = tf.contrib.slim


class DQN_Flat(DQNAgent):

    def _network_template(self, state):
        # Fully connected network instead of the default conv stack.
        net = tf.cast(state, tf.float32)
        net = slim.flatten(net)
        net = slim.fully_connected(net, 512)
        q_values = slim.fully_connected(net, self.num_actions, activation_fn=None)
        return self._get_network_type()(q_values)


if __name__ == "__main__":
    with tf.Session() as sess:
        agent = DQN_Flat(sess, 2)
        sess.run(tf.global_variables_initializer())
        score_history = 0
        for i in range(10000000000):
            # One-step episode: two random inputs, reward for picking the larger.
            obs1 = np.random.random()
            obs2 = np.random.random()
            a1 = agent.begin_episode([obs1, obs2])
            r = 1 if (a1 == 0 and obs1 > obs2) or (a1 == 1 and obs1 < obs2) else 0
            score_history += r
            agent.end_episode(r)
            # Report the average reward over the last 1000 episodes.
            if i % 1000 == 0:
                print(score_history / 1000)
                score_history = 0
```

The code above does not do any training; it just runs and gets random actions.

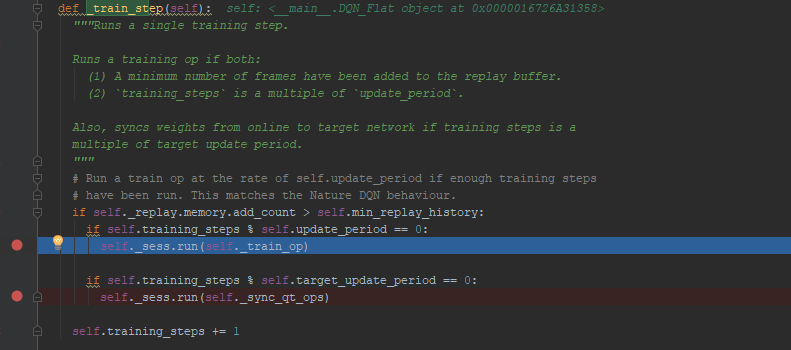

Look at https://github.com/google/dopamine/blob/master/dopamine/agents/dqn/dqn_agent.py : `_train_step()` is called from within `step()`, and you don't use `step()`.

Look at the Atari runner for more details.
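For reference, here is a rough sketch of the call sequence a runner-style loop makes on an agent: `begin_episode`, then `step` until the episode terminates, then `end_episode`. It is not the actual runner code. `CountingAgent`, `make_counter_env` and `run_one_episode` are hypothetical names made up for illustration, and the agent is a stub that only counts calls.

```python
class CountingAgent:
    """Hypothetical stand-in agent: it only counts which methods get called."""

    def __init__(self):
        self.calls = {"begin_episode": 0, "step": 0, "end_episode": 0}

    def begin_episode(self, observation):
        self.calls["begin_episode"] += 1
        return 0  # always pick action 0

    def step(self, reward, observation):
        self.calls["step"] += 1
        return 0

    def end_episode(self, reward):
        self.calls["end_episode"] += 1


def make_counter_env(length):
    """Hypothetical toy environment whose episodes last `length` steps."""
    state = {"t": 0}

    def reset():
        state["t"] = 0
        return [0.0]

    def step(action):
        state["t"] += 1
        is_terminal = state["t"] >= length
        return [float(state["t"])], 1.0, is_terminal

    return reset, step


def run_one_episode(agent, env_reset, env_step, max_steps=1000):
    """Drive one episode: begin_episode, step until terminal, end_episode."""
    action = agent.begin_episode(env_reset())
    total_reward, reward = 0.0, 0.0
    for _ in range(max_steps):
        observation, reward, is_terminal = env_step(action)
        total_reward += reward
        if is_terminal:
            break
        action = agent.step(reward, observation)
    agent.end_episode(reward)
    return total_reward


if __name__ == "__main__":
    for length in (1, 3):
        agent = CountingAgent()
        reset, step = make_counter_env(length)
        run_one_episode(agent, reset, step)
        print(length, agent.calls)
```

Note that with one-step episodes this loop never reaches `step()`, so for a game like the one above, whether any training happens depends on what the agent does inside `begin_episode` and `end_episode`.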

Also, if you package your game into a proper env, you can more easily compare different approaches to solving it.
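A minimal sketch of what such an env could look like for the "pick the highest input" game, assuming the classic Gym-style interface (`reset()` returns an observation, `step(action)` returns `(observation, reward, done, info)`). `HighestInputEnv` and `average_reward` are hypothetical names, and the sketch uses only the standard library rather than Gym itself. Two trivial baselines give reference points to compare a learned agent against.

```python
import random


class HighestInputEnv:
    """Hypothetical one-step env: reward 1 for choosing the index of the largest input."""

    def __init__(self, num_inputs=2, seed=None):
        self.num_inputs = num_inputs
        self._rng = random.Random(seed)
        self._obs = None

    def reset(self):
        # Draw a fresh vector of uniform random inputs in [0, 1).
        self._obs = [self._rng.random() for _ in range(self.num_inputs)]
        return list(self._obs)

    def step(self, action):
        if self._obs is None:
            raise RuntimeError("call reset() before step()")
        best = max(range(self.num_inputs), key=self._obs.__getitem__)
        reward = 1.0 if action == best else 0.0
        observation, self._obs = self._obs, None
        # Every episode ends after a single action.
        return observation, reward, True, {}


def average_reward(policy, env, episodes):
    """Average reward of `policy` (observation -> action) over `episodes` episodes."""
    total = 0.0
    for _ in range(episodes):
        observation = env.reset()
        _, reward, _, _ = env.step(policy(observation))
        total += reward
    return total / episodes


if __name__ == "__main__":
    env = HighestInputEnv(seed=0)
    rng = random.Random(1)
    oracle = lambda obs: max(range(len(obs)), key=obs.__getitem__)
    uniform = lambda obs: rng.randrange(len(obs))
    print("oracle:", average_reward(oracle, env, 1000))
    print("random:", average_reward(uniform, env, 1000))
```

An agent that has learned the task should land close to the oracle score, while one that is still acting randomly should stay near the random baseline.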

`_train_step` seems to be called in `begin_episode` too, and since `eval_mode` is False by default, it should train anyway in my code above, don't you think?

Do you have any idea why it can't converge on this simpler version of a multi-armed bandit? Maybe my hyperparameters aren't set correctly?

I see what you mean! Sorry, I'm not sure of the answer. But you could try running CartPole through your example to make sure the setup makes sense.

Note that DQN usually has a very long warm-up before training is allowed to begin:

https://github.com/google/dopamine/blob/master/dopamine/agents/dqn/dqn_agent.py#L73

> min_replay_history: int, number of transitions that should be experienced before the agent begins training its value function.
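To get a feel for how long that warm-up is for the one-step game above, here is a simplified model of the gating, not the agent's real code. It assumes each episode calls the training check once (in `begin_episode`) and stores one transition (in `end_episode`), and that an update runs only once more than `min_replay_history` transitions are stored and the step counter is a multiple of `update_period`. The defaults of 20000 and 4 are what I recall from `dqn_agent.py`, so please check them against the linked file. `count_train_updates` is a hypothetical helper.

```python
def count_train_updates(num_episodes, min_replay_history=20000, update_period=4):
    """Count gradient updates under a simplified model of the warm-up gating.

    Assumptions (not taken verbatim from Dopamine): one training check and one
    stored transition per episode, and no padding transitions (stack size 1).
    """
    add_count = 0        # transitions stored in the replay buffer so far
    training_steps = 0   # number of training checks performed so far
    updates = 0
    for _ in range(num_episodes):
        # Training check, as done at the start of each episode.
        if add_count > min_replay_history and training_steps % update_period == 0:
            updates += 1
        training_steps += 1
        # The episode's single transition is stored when the episode ends.
        add_count += 1
    return updates


if __name__ == "__main__":
    for episodes in (1000, 20000, 30000):
        print(episodes, "episodes ->", count_train_updates(episodes), "updates")
    # A much shorter warm-up, chosen here purely for illustration.
    print(count_train_updates(1000, min_replay_history=100), "updates with warm-up of 100")
```

Under these assumptions, the first 20000 episodes produce no updates at all, so the printed score would be expected to sit near 0.5 for a while. If the constructor in your version accepts it, passing a smaller `min_replay_history` to the agent may be worth trying for such a small problem.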