[ALBERT] How to deal with `Model diverged with loss = NaN` when training from scratch?

hi,

I tried to train albert base model from scratch on 64 v100 gpus. Part of my hyper parameters are :

init_lr=2.75e-5 (i.e. 0.00173/64)

train_batch_size=48

train_steps=32millions, so 500K for each gpu

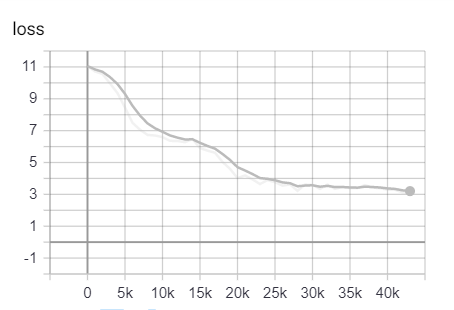

I used the LAMP optimizer. My training was crashed at around 43K due to Model diverged with loss = NaN. Does anyone know how to address this ? On v100, I cann't set very large train batch size

your representation is so confusing. train_batch_size=48 means batch size per gpu? or global batch size. if you use global batch size 48 your learning rate should be scaled (48/4096)^0.5 times not 1/64. and warm up step should be remain same. train step should be scaled (4096/48) times. or if you use local batch size 48 and global batch size 48*64=3062, your learning rate should be scaled (3062/4096)^0.5 times, warm up step remain same and train step should be scaled (4096/3092) times and what is lamp optimizer? not lamb?

your representation is so confusing. train_batch_size=48 means batch size per gpu? or global batch size. if you use global batch size 48 your learning rate should be scaled (48/4096)^0.5 times not 1/64. and warm up step should be remain same. train step should be scaled (4096/48) times. or if you use local batch size 48 and global batch size 48*64=3062, your learning rate should be scaled (3062/4096)^0.5 times, warm up step remain same and train step should be scaled (4096/3092) times and what is lamp optimizer? not lamb?

Sorry for my incomplete info gave previously. The train batch size is 48 per gpu. The optimizer I used is LAMB.

your representation is so confusing. train_batch_size=48 means batch size per gpu? or global batch size. if you use global batch size 48 your learning rate should be scaled (48/4096)^0.5 times not 1/64. and warm up step should be remain same. train step should be scaled (4096/48) times. or if you use local batch size 48 and global batch size 48*64=3062, your learning rate should be scaled (3062/4096)^0.5 times, warm up step remain same and train step should be scaled (4096/3092) times and what is lamp optimizer? not lamb?

Why the learning rate should be scaled to (3062/4096)^0.5 times if train_batch_size=48 is for each gpu?

see LARGE BATCH OPTIMIZATION FOR DEEP LEARNING: TRAINING BERT IN 76 MINUTES(https://arxiv.org/abs/1904.00962) also you'd better specify warm up step

see LARGE BATCH OPTIMIZATION FOR DEEP LEARNING: TRAINING BERT IN 76 MINUTES(https://arxiv.org/abs/1904.00962) also you'd better specify warm up step

I did another round ALBERT BASE model training. I used Horovod to train on 64 GPUs, train_batch_size=32 per GPU, global train batch size=2048, train_steps=250K. According to TRAINING BERT IN 76 MINUTES, I used the square root learning rate scaling, so the initial learning rate is 0.00173/(2^0.5), in which 0.00173 is the learning rate for train_batch_size=4096. As the warm-up steps/proportion, I set 3125/0.0125, 6250/0.025, 25K/0.1. The optimizer I used is LAMP.

But unfortunately all my training were crashed by Model diverged with loss = NaN . Any suggestions about this? Is it possible to replicate ALBERT model training from scratch ?

BTW, I didn't use google's run_pretrain.py, but the code I used is almost same, we just added some training acceleration techniques, e.g. horovod, amp and xla.

Thanks.

- in paper they do not use constant warm proportion. instead they use constant warm up step. it means with batch size 2048 you have to set warm up step 3125.

- in many code with horovod, learning rate is automatically scaled horovod_size times. it means that your learning rate might be scaled 64 times. so you have to remove

learning rate = learning*horovod sizepart - in horovod you have to split tfrecord to many files(at least your horovod size). have you done this?