Wider student loss not changing

Hi Eren!

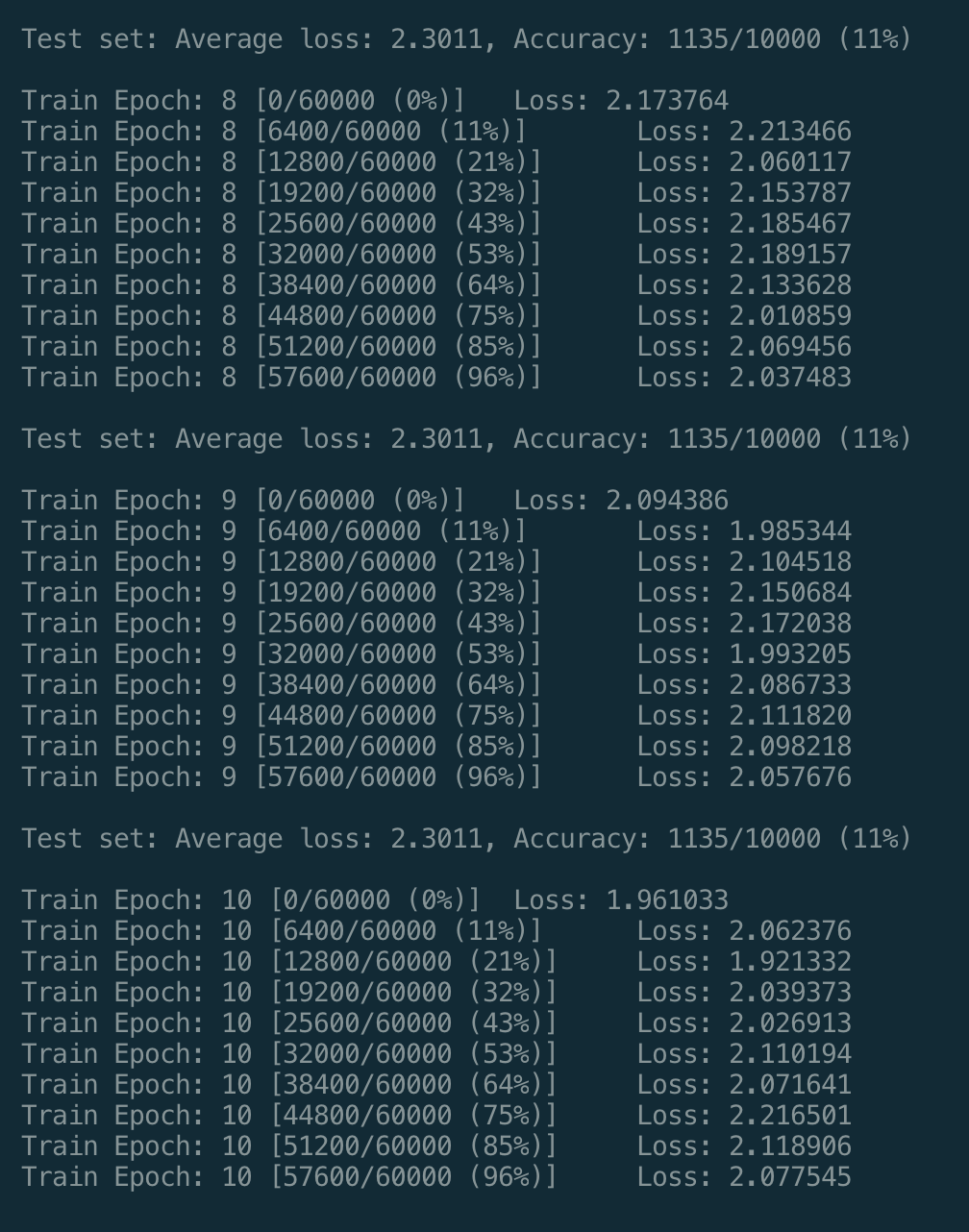

I tried running your `train_mnist.py` example and found that the loss isn't decreasing during training for the wider student net.

P.S. Initially the code didn't run on my computer because I was testing it on my Mac, which has no GPU, so I modified the code slightly so that it doesn't use CUDA.
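The exact modifications aren't shown here. A common way to make PyTorch code run with or without a GPU is to pick the device once and move the model and each batch to it, instead of calling `.cuda()` directly. This is a minimal sketch assuming PyTorch 0.4 or later (where `torch.device` was introduced). The `pick_device` helper and the `nn.Linear` model are hypothetical stand-ins, not code from `train_mnist.py`.

```python
import torch
import torch.nn as nn


def pick_device() -> torch.device:
    # Hypothetical helper: use CUDA when available, otherwise fall back to CPU
    # (e.g. on a Mac without an NVIDIA GPU).
    return torch.device("cuda" if torch.cuda.is_available() else "cpu")


device = pick_device()

# Hypothetical stand-in for the student network; the real model is defined in train_mnist.py.
model = nn.Linear(784, 10).to(device)

# Move each batch to the same device as the model instead of calling .cuda() on it.
x = torch.randn(64, 784).to(device)
logits = model(x)
```

With this pattern the same script runs unchanged on a CUDA machine and on a CPU-only laptop.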

It has been a while since I last checked the code, but it's likely you're using a newer version of PyTorch, and that might have introduced some changes in behavior.

Yeah, I figured as much. I also noticed that the results computed on GPU and CPU are different. It ran perfectly (or at least met my expectations) on my Ubuntu machine with PyTorch 0.4/CUDA 9.2 installed, but failed with this bug when running on the CPU on my Mac.

Thanks for your prompt reply tho! Appreciated!