Make AI Trained Models modal translatable

What: Add messages and common localization strings to the JavaScript modal used for choosing an ML model.

Why: This will make the AI Trained Models modal translatable moving forward.

Comment:

There was no substantial existing boilerplate to build on for testing the localization, so I have done my best. The delete-failure test mocks the request, but the model-polling test does not; it just sets the state. It is unclear which approach is best for testing localization, but I wanted to avoid asserting on rigid implementation details. Those should also be tested, but in separate tests, which do not currently exist.

Links

- Jira ticket: FND-2072

Testing story

The AI Trained Models modal is accessible by creating an applab project, clicking the "Settings Cog", and selecting "Manage AI Models". It is unclear how to create an AI Model to populate this list from the developer build.

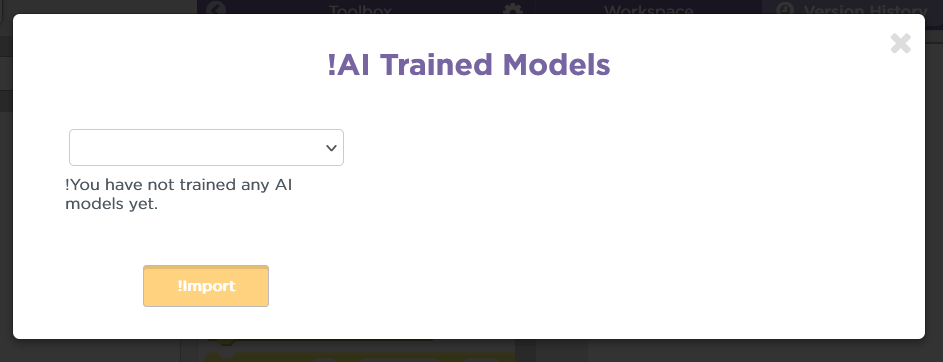

Locally tested translations by modifying the added English strings to place an exclamation point in front, which shows that the displayed text really comes from the locale file. The header and a message for when you have no models are localized:

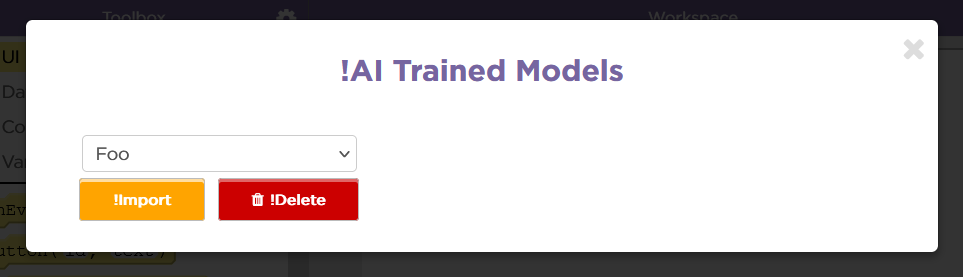
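The exclamation-point check can be sketched roughly as follows. This is a minimal illustration, not the code from this PR: the locale keys, the strings, and the helpers `markStrings` and `findUnlocalized` are all hypothetical.

```javascript
// Hypothetical locale table; the keys and most strings are illustrative only.
const enUS = {
  aiTrainedModels: 'AI Trained Models',
  noModels: 'You have no trained models.',
};

// Prefix every string with a marker so localized text is easy to spot.
function markStrings(locale, marker = '!') {
  return Object.fromEntries(
    Object.entries(locale).map(([key, text]) => [key, marker + text])
  );
}

// Return rendered strings that lack the marker, i.e. text that likely
// bypassed the locale lookup (or was not marked in the locale file).
function findUnlocalized(renderedStrings, marker = '!') {
  return renderedStrings.filter(text => !text.startsWith(marker));
}

const marked = markStrings(enUS);
// Simulate a render in which one label did not go through the locale table.
const rendered = [marked.aiTrainedModels, marked.noModels, 'Some hardcoded label'];
console.log(findUnlocalized(rendered)); // logs the one unmarked string
```

A string without the marker is not necessarily unlocalized; it may just be missing the marker in the locale file, so each hit needs a manual look.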

It is difficult to add AI models in a development build (I do not know how), so I forced the JavaScript component to fake a few in order to test the localization of the import/delete buttons.

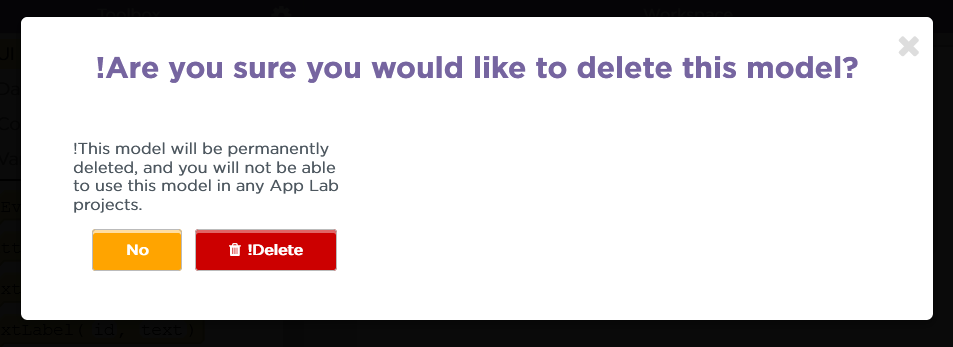

Pressing delete presents a confirm modal on top of the other one. The header and message are localized. ("No" is also localized, but I missed adding the "!" in the locale file.)

The "Deleting" pending text is localized with ellipsis:

When the delete fails (I modified the ml_models controller to always return a 200 with a failure code), a delete message is localized:

Going back to the normal view and pressing "Import", the pending text shown while importing (with ellipsis) is localized:

The localization is tested further with automated JavaScript tests via karma.

For the delete message upon failure, the response with the failure code is faked via a fake ajax service from sinon.
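The idea behind the faked failure response can be sketched without sinon by hand-rolling the fake. This is an illustration under stated assumptions, not the test in this PR: `deleteModel`, the `i18n` table, the URL, and the response shape are all hypothetical, and the real test uses sinon rather than the fake below.

```javascript
// Hypothetical localized strings; the real keys live in the locale file.
const i18n = {
  deleteFailed: () => '!Could not delete the model.',
};

// Hypothetical delete helper. `ajax` is injected so a test can replace it.
function deleteModel(id, ajax, onDone) {
  ajax({method: 'DELETE', url: `/hypothetical/models/${id}`}, response => {
    // The server answers 200 even on failure and reports it in the body.
    const failed =
      response.status === 200 && response.body.status === 'failure';
    onDone(failed ? i18n.deleteFailed() : null);
  });
}

// Hand-rolled fake: always responds 200 with a failure code and
// records each request so the test can inspect it.
function makeFailingAjax() {
  const requests = [];
  const fake = (request, callback) => {
    requests.push(request);
    callback({status: 200, body: {status: 'failure'}});
  };
  fake.requests = requests;
  return fake;
}

let shownMessage = null;
const fakeAjax = makeFailingAjax();
deleteModel('abc123', fakeAjax, message => {
  shownMessage = message;
});
console.log(shownMessage);
```

Because the fake calls back synchronously, the assertion on the displayed message needs no waiting or timers.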

Faking having models versus having none is done by setting the state of the models list to some fake model info rather than faking the response, which is somewhat hard to stub.
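Setting the state directly, instead of stubbing the response, might look like the sketch below. `ModelListView` is a hypothetical stand-in for the modal, and the strings and model fields are made up for illustration.

```javascript
// Hypothetical marked strings, as they might appear in a test locale.
const viewStrings = {
  noModels: '!You have no trained models.',
  importLabel: '!Import',
  deleteLabel: '!Delete',
};

// Hypothetical stand-in for the modal; the real component is not shown here.
class ModelListView {
  constructor(strings) {
    this.strings = strings;
    this.state = {models: []};
  }

  // Merge a partial state object, similar in spirit to a component setState.
  setState(partial) {
    this.state = {...this.state, ...partial};
  }

  // Return the lines of text the view would display.
  render() {
    if (this.state.models.length === 0) {
      return [this.strings.noModels];
    }
    return this.state.models.map(
      model =>
        `${model.name}: ${this.strings.importLabel} | ${this.strings.deleteLabel}`
    );
  }
}

const view = new ModelListView(viewStrings);
const emptyOutput = view.render();

// Skip the network entirely: put fake model info straight into the state.
view.setState({models: [{id: '1', name: 'Fake model A'}]});
const populatedOutput = view.render();
console.log(emptyOutput, populatedOutput);
```

The trade-off is that this exercises only the rendering path; the request and polling logic stay untested until separate tests cover them.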

PR Checklist:

- [ ] Tests provide adequate coverage

- [ ] Privacy and Security impacts have been assessed

- [ ] Code is well-commented

- [ ] New features are translatable or updates will not break translations

- [ ] Relevant documentation has been added or updated

- [ ] User impact is well-understood and desirable

- [ ] Pull Request is labeled appropriately

- [ ] Follow-up work items (including potential tech debt) are tracked and linked