Optimize PrepareRequest and req.Context lookups

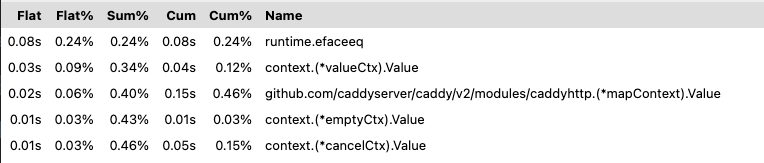

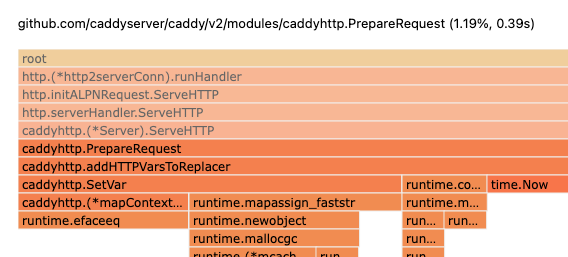

Upon analyzing a profile of Caddy during benchmarking (with h2load), I found out that a large chunk of CPU time is eaten up by PrepareRequest, specifically the multiple context.WithValue being used there - up to 3% of CPU time (during the 30 second benchmark+profile). Additionally, lots of CPU time was being used up by req.Context().Value in various places, such as matching.

Edit: creating a map with no hint does the same thing, so I have reverted this since it's useless

Firstly, I've added a capacity argument to the two make(map[string]interface{}) located there - for VarsCtxKey and routeGroupCtxKey. By default Caddy adds only 2 vars to the context map of vars (VarsCtxKey), and by checking out the code it seemed like only 1-2 should be rarely added, which is why using 4 here makes sense (also using <=8 allows Go to use runtime.makemap_small which is significantly faster than runtime.makemap). As for routeGroupCtxKey - I can't imagine using more than 4 route groups, especially since they can't be set in a Caddyfile except implicitly using try_files.

Secondly, I have changed the various context keys to their own types with int32 as the base type. This is what gave the largest boost to performance:

- since every key is a different type,

context.Valuefails quicker - as soon as it realizes that the type is wrong (before it would see that the type iscaddy.CtxKeyand compare strings) - since the key is an int it's comparison is instant, compared to string keys

- using

int32basically allows the compiler to use only 4 bytes instead of 8 forint, which is better for 32-bit systems This all matters pretty much due to howcontext.WithValueis implemented - it returns a simple wrapper storing 1 key/value pair, which is why using string keys results in constant meaningless string comparisons on everycontext.Valuecall.

I've additionally tried creating a custom "fastContext" which acted like a map for multiple values (so something like a ContextWithValues() function), however there was no performance gain (tried using both slices and map for storage), which makes sense since in the newer versions of Go the default context.Value is iterative instead of recursive.

These 2 optimizations cumulatively sped up PrepareRequest by 2 times on average (though I've seen a boost of up to 4 times). Additionally, they sped up the context.Value calls by 2 times on average as well. In terms of requests this gave an average boost of extra 100-150 requests per second in my benchmarks (on a 2cpu 3gb ram VPS).

I've tested a default map size of 8 instead of 4 and it does not impact performance in any way (makes sense, since that's what the Go runtime.makemap_small source says), so I've bumped them up to that just to allow for more room without a resize. Now resizes should definitely be a rare thing for Vars and route Groups.

Sorry! It actually doesn't matter if a map with no hint, or hint <=8 is created, since in both cases runtime.makemap_small is used, so I removed the hint

I've also searched through Github to see if some plugins rely on these constants being strings and couldn't find any that do, please correct me if I'm wrong though.

I have removed the request copy using originalRequest() since it was redundant. Notice, that it was used to copy some parts of the original request so that they can be later used in the error handlers as well as other places, however, we already use request.WithContext to make another shallow copy which copies those and even more values (!!) which is why we can simply set OriginalRequestCtxKey to the received request and it will not have those values overridden. This removes the call to originalRequest as well as a costly object allocation, now PrepareRequest is 3x faster on average

NB: I've also reordered context.WithValue calls so that the values which are used more often, such as the replacer, are faster to find using when using context.Value

@renbou, I love you. (Professionally...) These are great little improvements.

After just a quick glance so far, I'm on board for these changes. My only concern is, are you sure that we don't need to use originalRequest() to copy the request? It's very important that it remain unchanged even if middleware handlers mutate any part of the original request (the one we are copying to preserve it).

Just curious, do you have profiles or benchmark results to post? I believe you of course, but being able to see the performance improvements visually would make for a very compelling tweet. 😃 If you don't, and if it would be a lot of work to gather those again, then don't worry about it.

PS. Can I invite you to our developers Slack? We'd love to have you, as we really appreciate your recent contributions. Just let me know which email address to send the invite to.

Thank you! :) originalRequest() does this:

func originalRequest(req *http.Request, urlCopy *url.URL) http.Request {

cloneURL(req.URL, urlCopy)

return http.Request{

Method: req.Method,

RemoteAddr: req.RemoteAddr,

RequestURI: req.RequestURI,

URL: urlCopy,

}

}

So it simply returns an http.Request with some copied over string fields and a shallow copy of the URL.

request.WithContext does this:

func (r *Request) WithContext(ctx context.Context) *Request {

if ctx == nil {

panic("nil context")

}

r2 := new(Request)

*r2 = *r

r2.ctx = ctx

r2.URL = cloneURL(r.URL) // legacy behavior; TODO: try to remove. Issue 23544

return r2

}

The *r2 = *r basically means that we copy over all of the basic-type fields (such as those string fields), and we copy over the URL as well. And since PrepareRequest does r = r.WithContext(ctx), we simply lose the reference to the original request - it is stored in OriginalRequestCtxKey instead, which the handlers should not change. PrepareRequest then returns the pointer to the new request, and also overwrites the original pointer r = PrepareRequest(r, repl, w, s), so there's no way the original request will change. One thing that may change, though, are the headers, but originalRequest didn't prevent that as well. However, I've already tested out another optimization which will prevent us from losing the original headers as well, but it's more related to Server.ServeHTTP which is why I want to implement it after this PR.

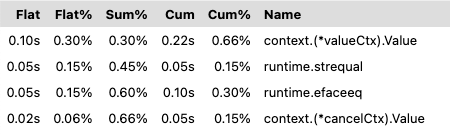

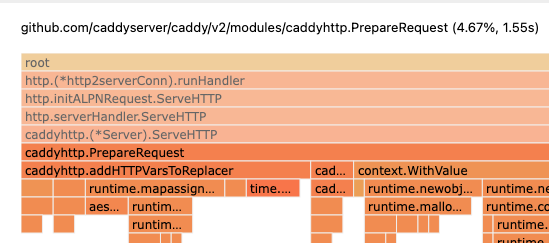

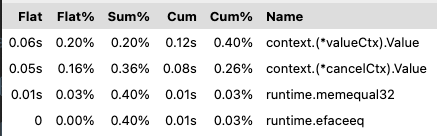

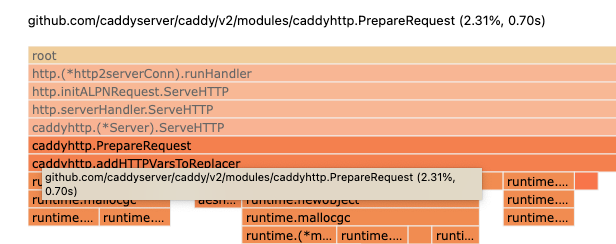

Here's a profile without the optimization:

profile-original.zip

And with the optimization:

profile-optimization.zip

Here the performance of working with the context values + PrepareRequest differs over 2 times, however more complex examples where the context is accessed more often it should be even more visible.

I realized that what gave the consistent 3x boost was actually the temporary optimization I did to addHTTPVarsToReplacer - I made VarsCtxKey into a struct with 2 value fields (uuid and start_time) and a map for other variables, but I haven't finished working on that, so I reverted it in the last commit (you can check out what I tried doing in the commit before the last one). I think I'll still implement it later, since it's also on the hotpath and is a nice optimization in itself :)

Slack would be awesome! You can invite me using [email protected]

@mholt Personally I'd delay the tweet since I've tested out other optimizations which don't change the API and which can be gradually implemented and then tweeted about ;)

@renbou this is awesome - ~have you thought of adding some benchmarks too?~ Edit: I see Matt already asked above 😅

As a related aside, it might be useful to hook up https://bencher.orijtech.com to keep track of these @mholt 😉

I've made the allocation of the requestID structure lazy, since it seems like it's supposed to be used through the replacer anyways (using "http.request.uuid"). I couldn't find where "uuid" var would be used directly so this should be fine, right?

(I'm watching this, just to let you know! I'll probably be able to give more attention to this on Monday or Tuesday when I get back into the office. Thanks for your work on this.)

The latest commit is experimental - it adds a new mapContext which stores a "map" in the form of a slice, which gives us faster lookup and allocation time since it's much smaller than the smallest map. It also allows setting context values lazily if they are initialized using make() or new() (default value, basically), so the routeGroupCtxKey map can be initialized only if groups are actually used, which seems rare to me (?).

Made a simple PrepareRequest benchmark which doesn't show results as great as the probably more realistic http2 benchmark, here are the results:

- Without latest experimental commit:

benchmark old ns/op new ns/op delta

BenchmarkPrepareRequest-8 1681 1289 -23.32%

benchmark old allocs new allocs delta

BenchmarkPrepareRequest-8 23 18 -21.74%

benchmark old bytes new bytes delta

BenchmarkPrepareRequest-8 2056 1608 -21.79%

- With latest experimental commit:

benchmark old ns/op new ns/op delta

BenchmarkPrepareRequest-8 1681 1097 -34.74%

benchmark old allocs new allocs delta

BenchmarkPrepareRequest-8 23 14 -39.13%

benchmark old bytes new bytes delta

BenchmarkPrepareRequest-8 2056 1496 -27.24%

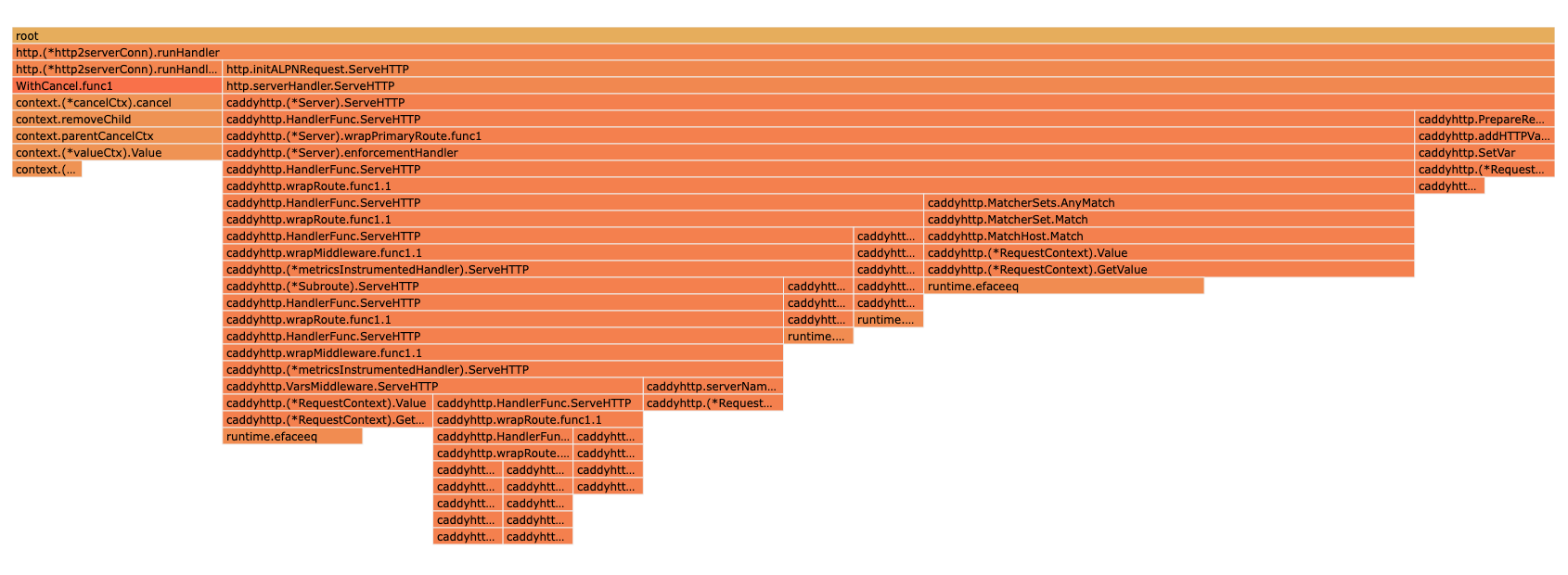

Interesting results from the profile during h2load benchmark with the latest commit - nearly 2x faster again

This is also with an added route group, which proves that everything works.

I've polished up the experimental fast context map and added short-circuit possibilities via caddyhttp.RequestContextValue and caddyhttp.RequestContextSetValue. Basically, they take the context from http.Request, lookup our fast map (renamed to RequestContext) and then work directly with it. For caddyhttp.RequestContextValue if a key is not found in the RequestContext map, it will not be looked up further in the parent context, which allows us to save lots of time on lookups of keys which might not be in the context, such as ErrorCtxKey and routeGroupCtxKey - before most of the time looking them up was spent digging through underlying context.valueCtx, context.cancelCtx, etc. Now pretty much all of the lookups happening in Server.ServeHTTP are handled by this fast map:

This just became even more awesome. Since now the new RequestContextstructure is used, which is defined by Caddy, I was able to add a sync.Pool so now close to none allocations other than during r.WithContext happen in PrepareRequest!

Benchmark of concurrent 20 workers running PrepareRequest 5 times.

Optimized PrepareRequest without/with pool:

benchmark old ns/op new ns/op delta

BenchmarkConcurrentPrepareRequest20-8 149091 125600 -15.76%

benchmark old allocs new allocs delta

BenchmarkConcurrentPrepareRequest20-8 1437 1030 -28.32%

benchmark old bytes new bytes delta

BenchmarkConcurrentPrepareRequest20-8 151519 100089 -33.94%

Non-optimized PrepareRequest and optimized PrepareRequest with pool:

benchmark old ns/op new ns/op delta

BenchmarkConcurrentPrepareRequest20-8 219029 125600 -42.66%

benchmark old allocs new allocs delta

BenchmarkConcurrentPrepareRequest20-8 2291 1030 -55.04%

benchmark old bytes new bytes delta

BenchmarkConcurrentPrepareRequest20-8 204148 100089 -50.97%

Very cool! Will read more in-depth soon. But for now I noticed the tests are failing:

=== RUN TestStaticResponseHandler

--- FAIL: TestStaticResponseHandler (0.00s)

panic: interface conversion: interface {} is nil, not *caddyhttp.RequestContext [recovered]

panic: interface conversion: interface {} is nil, not *caddyhttp.RequestContext

goroutine 28 [running]:

testing.tRunner.func1.2({0x19d6000, 0xc000424720})

/opt/hostedtoolcache/go/1.17.7/x64/src/testing/testing.go:1209 +0x36c

testing.tRunner.func1()

/opt/hostedtoolcache/go/1.17.7/x64/src/testing/testing.go:1212 +0x3b6

panic({0x19d6000, 0xc000424720})

/opt/hostedtoolcache/go/1.17.7/x64/src/runtime/panic.go:1047 +0x266

github.com/caddyserver/caddy/v2/modules/caddyhttp.RequestContextValue(0xc000602700, {0x195c740, 0x1def928})

/home/runner/work/caddy/caddy/modules/caddyhttp/requestcontext.go:58 +0x10d

github.com/caddyserver/caddy/v2/modules/caddyhttp.StaticResponse.ServeHTTP({{0xc00042a0bd, 0x3}, 0xc0004246f0, {0x1b6dfc0, 0x4}, 0x1, 0x0}, {0x1e1efb0, 0xc0003a8e80}, 0xc000602700, ...)

/home/runner/work/caddy/caddy/modules/caddyhttp/staticresp.go:142 +0x551

github.com/caddyserver/caddy/v2/modules/caddyhttp.TestStaticResponseHandler(0xc0003f5860)

/home/runner/work/caddy/caddy/modules/caddyhttp/staticresp_test.go:41 +0x370

testing.tRunner(0xc0003f5860, 0x1bdc940)

/opt/hostedtoolcache/go/1.17.7/x64/src/testing/testing.go:1259 +0x230

created by testing.(*T).Run

/opt/hostedtoolcache/go/1.17.7/x64/src/testing/testing.go:1306 +0x727

FAIL github.com/caddyserver/caddy/v2/modules/caddyhttp 0.168s

? github.com/caddyserver/caddy/v2/modules/caddyhttp/caddyauth [no test files]

Ah, makes sense. The tests don't add the full context to the request, instead only adding the replacer. Since PrepareRequest is exported, it seems only natural that it should be used if calling Caddy handlers directly instead of using the server, right? I'll make sure the tests use PrepareRequest.

Edit: tests are passing now

@mholt What are the chances of getting this merged this week? Does anything else have to be done on my part? This doesn't change the API other than OriginalRequestCtxKey having a *http.Request as value instead of http.Request (and PrepareRequest returns an additional *RequestContext, but I didn't find any code in third-party modules using it).

There's a conflict now on map_test.go if you could fix that

Is this ok? Doesn't look very nice, I don't rebase PRs often so I'm not sure if this is how it's supposed to look, lol

Hmm, there does seem to be some extra cruft in this PR diff now. Not sure what caused that exactly.

I'll review this as soon as I get a chance. It started as a somewhat small change, but now it's got quite a lot of changes -- including a lot of exported surface area -- so it's going to take significantly longer to review.

To speed up the process, I usually recommend/prefer several smaller PRs, one at a time (easier to manage that way, rather than having to submit several PRs all at the same time which rely on the previous one, and updating one after the other). Best to iterate on one small change at a time. So if you want to do that, it might be faster. Or you can leave this PR open, it will just take longer. Especially in this part of the code which is quite central and critical, so I will have to scrutinize it more.

For some reason the rebase added all of the commits in this repo over the past few days to this PR. I'll open up a new one with the changes I actually made

Opening a new PR would lose all the discussion and context of this one though.

Should be good now. @mholt even though a lot of files seem changed, if you look through the changes nearly all of them just add RequestContextValue to allow short-circuiting the context value lookup and PrepareRequest in the tests so that the request context is prepared properly (preparing the request using multiple context.Value in every test definitely isn't the way to do it)

The key is that when you rebase, you have to force push, otherwise git might try to create a merge commit with master.

After some more testing and research (found this article https://darkcoding.net/software/go-slice-search-vs-map-lookup/) it turned out that using a map[interface{}]interface{} here should actually be faster for the lookups even without storing extra values (using a map on the default 4-6 values is faster in most cases than the slice), so I replaced the slice-map with a proper one.

A simple benchmark of PrepareRequest says that this is a bit slower, though the speed-up we gain during lookups will definitely be more significant, especially with complex configs.

I love O(1) lookups

I don't get it... if this is so much faster, why wasn't context implemented this way? What are we sacrificing? Immutability (for concurrency)?

Will need more time to finish reviewing this...

I don't get it... if this is so much faster, why wasn't context implemented this way? What are we sacrificing? Immutability (for concurrency)?

Well, we aren't sacrificing anything with this optimization, but in general context cannot be implemented this way since it has no way of knowing what ctx will be passed to context.WithValue (since it's an interface), which is why all it can do is add one value which will be looked up in linear time in the future... Contexts with cancels and deadlines are implemented "efficiently" since they just add a new goroutine which just blocks, using near zero resources. But a value context can't be implemented efficiently, i.e. using a map, since (like in this case) the map will have to be found first, again by using ctx.Value, which can be costly when we don't know what the context will consist of. Here we know that the request context will always be our custom RequestContext, which is why the lookup is fast.