Gradio interface "full_precision" returns a RuntimeError on txt2img

Whenever I try to run with full precision (which I need because of the green-output bug with fp16 on the GTX 1660), I get a RuntimeError. The output is as follows:

Traceback (most recent call last):
  File "X:\miniconda3\envs\ldm\lib\site-packages\gradio\routes.py", line 248, in run_predict
    output = await app.blocks.process_api(
  File "X:\miniconda3\envs\ldm\lib\site-packages\gradio\blocks.py", line 643, in process_api
    predictions, duration = await self.call_function(fn_index, processed_input)
  File "X:\miniconda3\envs\ldm\lib\site-packages\gradio\blocks.py", line 556, in call_function
    prediction = await block_fn.fn(*processed_input)
  File "X:\miniconda3\envs\ldm\lib\site-packages\gradio\interface.py", line 655, in submit_func
    prediction = await self.run_prediction(args)
  File "X:\miniconda3\envs\ldm\lib\site-packages\gradio\interface.py", line 684, in run_prediction
    prediction = await anyio.to_thread.run_sync(
  File "X:\miniconda3\envs\ldm\lib\site-packages\anyio\to_thread.py", line 31, in run_sync
    return await get_asynclib().run_sync_in_worker_thread(
  File "X:\miniconda3\envs\ldm\lib\site-packages\anyio\_backends\_asyncio.py", line 937, in run_sync_in_worker_thread
    return await future
  File "X:\miniconda3\envs\ldm\lib\site-packages\anyio\_backends\_asyncio.py", line 867, in run
    result = context.run(func, *args)
  File ".\optimizedSD\txt2img_gradio.py", line 118, in generate
    uc = modelCS.get_learned_conditioning(batch_size * [""])
  File "c:\stable-diffusion\optimizedSD\ddpm.py", line 297, in get_learned_conditioning
    c = self.cond_stage_model.encode(c)
  File "c:\stable-diffusion\ldm\modules\encoders\modules.py", line 162, in encode
    return self(text)
  File "X:\miniconda3\envs\ldm\lib\site-packages\torch\nn\modules\module.py", line 1110, in _call_impl
    return forward_call(*input, **kwargs)
  File "c:\stable-diffusion\ldm\modules\encoders\modules.py", line 156, in forward
    outputs = self.transformer(input_ids=tokens)
  File "X:\miniconda3\envs\ldm\lib\site-packages\torch\nn\modules\module.py", line 1110, in _call_impl
    return forward_call(*input, **kwargs)
  File "X:\miniconda3\envs\ldm\lib\site-packages\transformers\models\clip\modeling_clip.py", line 722, in forward
    return self.text_model(
  File "X:\miniconda3\envs\ldm\lib\site-packages\torch\nn\modules\module.py", line 1110, in _call_impl
    return forward_call(*input, **kwargs)
  File "X:\miniconda3\envs\ldm\lib\site-packages\transformers\models\clip\modeling_clip.py", line 643, in forward
    encoder_outputs = self.encoder(
  File "X:\miniconda3\envs\ldm\lib\site-packages\torch\nn\modules\module.py", line 1110, in _call_impl
    return forward_call(*input, **kwargs)
  File "X:\miniconda3\envs\ldm\lib\site-packages\transformers\models\clip\modeling_clip.py", line 574, in forward
    layer_outputs = encoder_layer(
  File "X:\miniconda3\envs\ldm\lib\site-packages\torch\nn\modules\module.py", line 1110, in _call_impl
    return forward_call(*input, **kwargs)
  File "X:\miniconda3\envs\ldm\lib\site-packages\transformers\models\clip\modeling_clip.py", line 317, in forward
    hidden_states, attn_weights = self.self_attn(
  File "X:\miniconda3\envs\ldm\lib\site-packages\torch\nn\modules\module.py", line 1110, in _call_impl
    return forward_call(*input, **kwargs)
  File "X:\miniconda3\envs\ldm\lib\site-packages\transformers\models\clip\modeling_clip.py", line 257, in forward
    attn_output = torch.bmm(attn_probs, value_states)
RuntimeError: expected scalar type Half but found Float

I think I found a solution... PR incoming :) EDIT: It only happened once for some reason. Will report back if I see it again.

Hi, I cannot reproduce this issue. Does img2img_gradio.py work fine for you?

I have the same problem, and I also checked the full_precision checkbox for the same reason.

Traceback:

stable-diffusion-sd-1 | Traceback (most recent call last):
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/gradio/routes.py", line 248, in run_predict
stable-diffusion-sd-1 |     output = await app.blocks.process_api(
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/gradio/blocks.py", line 643, in process_api
stable-diffusion-sd-1 |     predictions, duration = await self.call_function(fn_index, processed_input)
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/gradio/blocks.py", line 556, in call_function
stable-diffusion-sd-1 |     prediction = await block_fn.fn(*processed_input)
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/gradio/interface.py", line 655, in submit_func
stable-diffusion-sd-1 |     prediction = await self.run_prediction(args)
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/gradio/interface.py", line 684, in run_prediction
stable-diffusion-sd-1 |     prediction = await anyio.to_thread.run_sync(
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/anyio/to_thread.py", line 31, in run_sync
stable-diffusion-sd-1 |     return await get_asynclib().run_sync_in_worker_thread(
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/anyio/_backends/_asyncio.py", line 937, in run_sync_in_worker_thread
stable-diffusion-sd-1 |     return await future
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/anyio/_backends/_asyncio.py", line 867, in run
stable-diffusion-sd-1 |     result = context.run(func, *args)
stable-diffusion-sd-1 |   File "optimizedSD/txt2img_gradio.py", line 146, in generate
stable-diffusion-sd-1 |     uc = modelCS.get_learned_conditioning(batch_size * [""])
stable-diffusion-sd-1 |   File "/root/stable-diffusion/optimizedSD/ddpm.py", line 296, in get_learned_conditioning
stable-diffusion-sd-1 |     c = self.cond_stage_model.encode(c)
stable-diffusion-sd-1 |   File "/root/stable-diffusion/ldm/modules/encoders/modules.py", line 162, in encode
stable-diffusion-sd-1 |     return self(text)
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/torch/nn/modules/module.py", line 1110, in _call_impl
stable-diffusion-sd-1 |     return forward_call(*input, **kwargs)
stable-diffusion-sd-1 |   File "/root/stable-diffusion/ldm/modules/encoders/modules.py", line 156, in forward
stable-diffusion-sd-1 |     outputs = self.transformer(input_ids=tokens)
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/torch/nn/modules/module.py", line 1110, in _call_impl
stable-diffusion-sd-1 |     return forward_call(*input, **kwargs)
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/transformers/models/clip/modeling_clip.py", line 722, in forward
stable-diffusion-sd-1 |     return self.text_model(
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/torch/nn/modules/module.py", line 1110, in _call_impl
stable-diffusion-sd-1 |     return forward_call(*input, **kwargs)
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/transformers/models/clip/modeling_clip.py", line 643, in forward
stable-diffusion-sd-1 |     encoder_outputs = self.encoder(
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/torch/nn/modules/module.py", line 1110, in _call_impl
stable-diffusion-sd-1 |     return forward_call(*input, **kwargs)
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/transformers/models/clip/modeling_clip.py", line 574, in forward
stable-diffusion-sd-1 |     layer_outputs = encoder_layer(
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/torch/nn/modules/module.py", line 1110, in _call_impl
stable-diffusion-sd-1 |     return forward_call(*input, **kwargs)
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/transformers/models/clip/modeling_clip.py", line 317, in forward
stable-diffusion-sd-1 |     hidden_states, attn_weights = self.self_attn(
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/torch/nn/modules/module.py", line 1110, in _call_impl
stable-diffusion-sd-1 |     return forward_call(*input, **kwargs)
stable-diffusion-sd-1 |   File "/root/miniconda/envs/ldm/lib/python3.8/site-packages/transformers/models/clip/modeling_clip.py", line 257, in forward
stable-diffusion-sd-1 |     attn_output = torch.bmm(attn_probs, value_states)
stable-diffusion-sd-1 | RuntimeError: expected scalar type Half but found Float

I am running with the following docker-compose.yml (had to make some changes to get it to work):

version: "3.9"
services:
  sd:
    build:
      context: .
      network: host
    network_mode: "host"
    ports:
      - "7860:7860"
    volumes:
      - ./sd-data:/data
      - ./sd-output:/output
      - sd-cache:/root/.cache
    deploy:
      resources:
        reservations:
          devices:
            - capabilities: [gpu]
volumes:
  sd-cache:

Also had this issue with my 1650.

Restarting the script seemed to fix the problem. Might it just have been an issue with the first run?

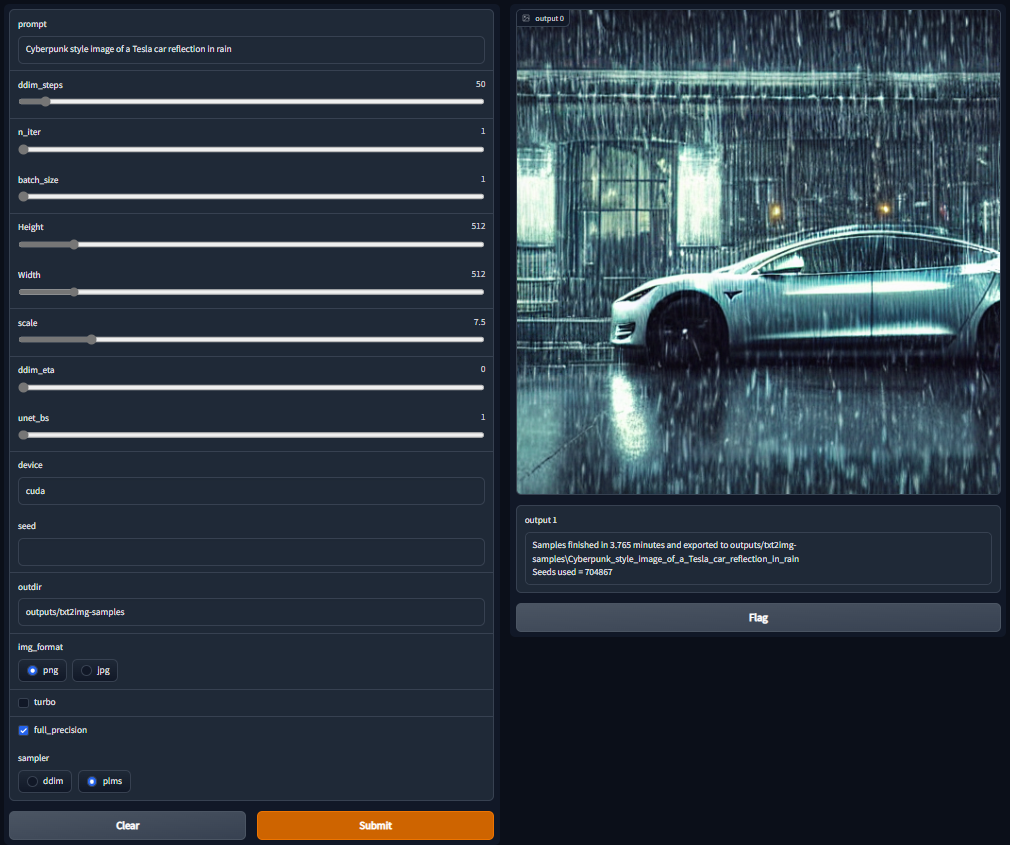

These were my settings:

I just had this happen to me. I was able to reproduce it. (Gradio txt2img)

It only happens when you generate a picture in half precision at least once and then try again with full precision.

All you need to do is restart the script and use full precision from the beginning.
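For anyone wondering why the order of runs would matter, here is a toy sketch in plain Python (no torch needed) of what I suspect is going on. It assumes the script loads the model once, casts it to half in place when half precision is requested, and never casts it back. I have not confirmed that txt2img_gradio.py actually does this. `ToyModel`, `generate_sticky` and `generate_reset` are made-up names for illustration only.

```python
from dataclasses import dataclass


@dataclass
class ToyModel:
    """Hypothetical stand-in for a model that is loaded once at start-up."""
    dtype: str = "float32"

    def half(self):
        # Mimics an in-place cast: the change persists after the call returns.
        self.dtype = "float16"
        return self

    def float(self):
        self.dtype = "float32"
        return self

    def forward(self, input_dtype):
        # Mimics a matmul that refuses to mix dtypes.
        if input_dtype != self.dtype:
            raise RuntimeError(
                f"dtype mismatch: weights {self.dtype}, input {input_dtype}"
            )
        return self.dtype


def generate_sticky(model, full_precision):
    # Assumed buggy pattern: cast down on request, never cast back up.
    if not full_precision:
        model.half()
    input_dtype = "float32" if full_precision else "float16"
    return model.forward(input_dtype)


def generate_reset(model, full_precision):
    # Possible fix: set the precision explicitly on every call.
    if full_precision:
        model.float()
    else:
        model.half()
    input_dtype = "float32" if full_precision else "float16"
    return model.forward(input_dtype)


def reproduce():
    """Run half precision first, then full precision, on the same model."""
    model = ToyModel()
    generate_sticky(model, full_precision=False)
    try:
        generate_sticky(model, full_precision=True)
    except RuntimeError as err:
        return str(err)
    return None
```

If that assumption holds, it would explain why restarting helps: a fresh process starts with the weights in full precision again. A real fix would presumably cast the models back to float whenever full precision is requested, instead of relying on a restart.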