[luci-interpreter] Benchmark modes from tflite-micro

This is continuation of #5080, but with more specific goals.

Goal

To measure luci-interpreter performance and memory consumption for models delivered with tflite-micro: https://github.com/tensorflow/tflite-micro/tree/main/tensorflow/lite/micro/benchmarks

Currently it contains two models:

- keyword_scrambled

- person_detect

Notes

We are in the process of review and merge of build infrastructure, PAL and memory managers in luci-interpreter:

- Memory managers activity #7572

- Build infrastructure and PAL #7288

To achieve best results I propose to use memory managers and MCU related stuff.

+cc @chunseoklee

@binarman @SlavikMIPT Is it possible to investigate similar ( https://github.com/Samsung/ONE/issues/5080#issuecomment-743022914) performance/memory comparison with the latest tf-micro as of now ?

@chunseoklee Yes, we can do it. The only thing you should know is that this ( model measured in #5080) is a "toy" example, so it is not actually very descriptive...

"toy" example

means sine generator model on tf-micro site, right ?

means sine generator model on tf-micro site, right ?

Yes, this is about sin(x) model. It is a normal tflite model (you can cut it from examples, save as tflite file, etc.), but it is too simple compared to real model.

Keyword model has some issues: it does not have some fields and does not verify.

In order to extract it from char array I've used some hacks on tensorflow code:

code

diff --git a/tensorflow/lite/micro/benchmarks/keyword_scrambled_model_data.cc b/tensorflow/lite/micro/benchmarks/keyword_scrambled_model_data.cc

index 56fed4e..97ca9a8 100644

--- a/tensorflow/lite/micro/benchmarks/keyword_scrambled_model_data.cc

+++ b/tensorflow/lite/micro/benchmarks/keyword_scrambled_model_data.cc

@@ -13,7 +13,8 @@ See the License for the specific language governing permissions and

limitations under the License.

==============================================================================*/

-#include "tensorflow/lite/micro/benchmarks/keyword_scrambled_model_data.h"

+//#include "tensorflow/lite/micro/benchmarks/keyword_scrambled_model_data.h"

+

// Keep model aligned to 8 bytes to guarantee aligned 64-bit accesses.

alignas(8) const unsigned char g_keyword_scrambled_model_data[] = {

@@ -2901,3 +2902,21 @@ alignas(8) const unsigned char g_keyword_scrambled_model_data[] = {

0x1b, 0x00, 0x00, 0x00};

const unsigned int g_keyword_scrambled_model_data_length = 34576;

+

+#include <fstream>

+#include "tensorflow/lite/schema/schema_generated.h"

+

+int main()

+{

+ auto model = tflite::GetModel(g_keyword_scrambled_model_data);

+ flatbuffers::FlatBufferBuilder fbb;

+ auto offset = tflite::Model::Pack(fbb, model->UnPack());

+ tflite::FinishModelBuffer(fbb, offset);

+ uint8_t *buf = fbb.GetBufferPointer();

+ int size = fbb.GetSize();

+

+ std::ofstream ofs("model.tflite", std::ostream::out | std::ostream::binary);

+ for (int i = 0; i < size; ++i)

+ ofs << buf[i];

+}

+

So I've got the model: model.zip

It has some features we do not support currently:

- signed int8 fullyconnected layer

- SVDF layer

- Quantize layers (that is used for requantization)

- Softmax with mixed precision

I plan to resolve quantization and softmax problem with just cutting them out from the model, support SVDF and int8 FC.

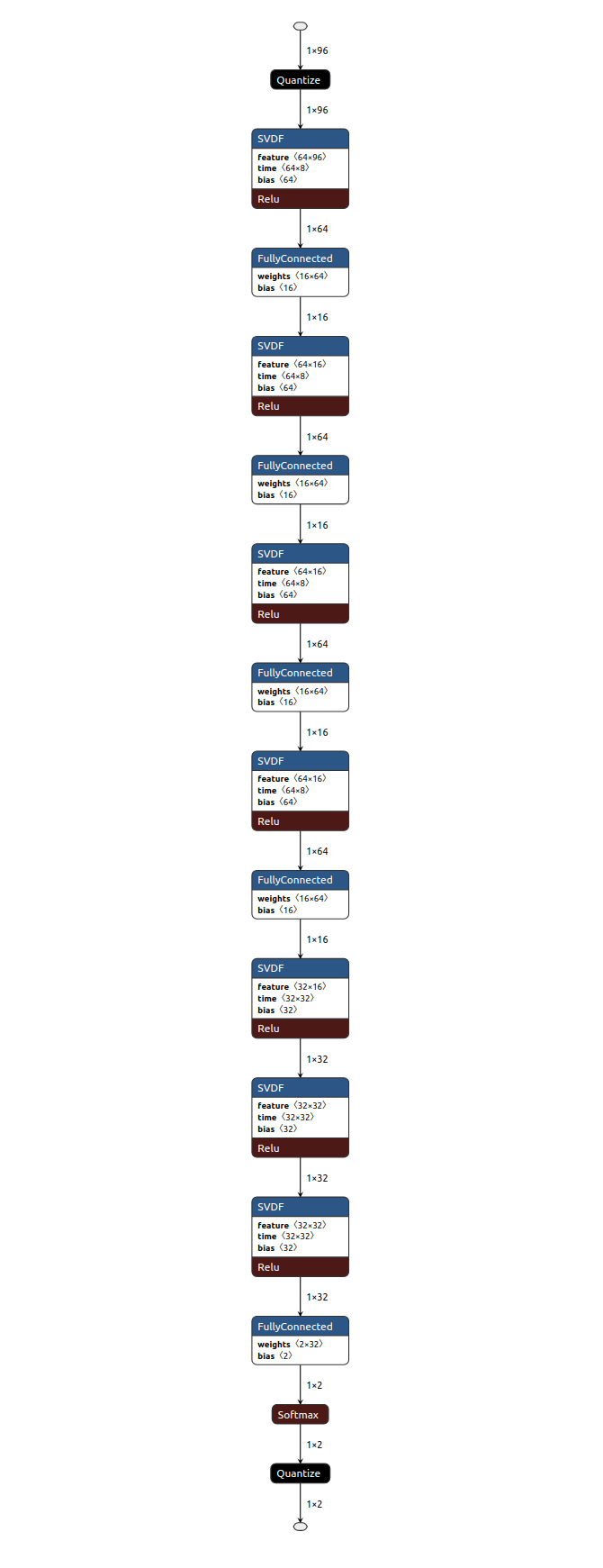

Model looks like this:

cut model: model_1-12.tflite.zip cut model with softmax: model_1-13.tflite.zip

Another issue I noticed is SVDF operator takes additional tensor as input and modifies it in-place. This is neither constant nor input tensor, looks like it is internal state of operation, this is kind of breaks pure functional model of our computations, need to think how to handle this...

Also tensorflow documentation states that this is experimental operation, so I assume it could change.

I have run three NN tflite models: The first one is simple model from tflite-micro hello_word example (sin(x) model), the second one is keyword model (mentioned and attached above) from tflite-micro benchmarks, and the third is person detection model also from tflite-micro benchmarks (attached below). person_detect.tflite.zip

I have run on stm32F413ZH microcontroller with 320 KB of SRAM in a single thread of MbedOS.

The memory costs were measured using tflite::RecordingMicroInterpreter (https://github.com/tensorflow/tflite-micro/blob/main/tensorflow/lite/micro/docs/memory_management.md)

Results:

- sin(x) model

Finished in 128us

[RecordingMicroAllocator] Arena allocation total 676 bytes

[RecordingMicroAllocator] Arena allocation head 40 bytes

[RecordingMicroAllocator] Arena allocation tail 636 bytes

[RecordingMicroAllocator] 'TfLiteEvalTensor data' used 120 bytes with alignment overhead (requested 120 bytes for 10 allocations)

[RecordingMicroAllocator] 'Persistent TfLiteTensor data' used 64 bytes with alignment overhead (requested 64 bytes for 2 tensors)

[RecordingMicroAllocator] 'Persistent TfLiteTensor quantization data' used 40 bytes with alignment overhead (requested 40 bytes for 4 allocations)

[RecordingMicroAllocator] 'Persistent buffer data' used 128 bytes with alignment overhead (requested 104 bytes for 5 allocations)

[RecordingMicroAllocator] 'NodeAndRegistration struct' used 96 bytes with alignment overhead (requested 96 bytes for 3 NodeAndRegistration structs)

- keyword model

Finished in 5382us

[RecordingMicroAllocator] Arena allocation total 13020 bytes

[RecordingMicroAllocator] Arena allocation head 680 bytes

[RecordingMicroAllocator] Arena allocation tail 12340 bytes

[RecordingMicroAllocator] 'TfLiteEvalTensor data' used 648 bytes with alignment overhead (requested 648 bytes for 54 allocations)

[RecordingMicroAllocator] 'Persistent TfLiteTensor data' used 64 bytes with alignment overhead (requested 64 bytes for 2 tensors)

[RecordingMicroAllocator] 'Persistent TfLiteTensor quantization data' used 40 bytes with alignment overhead (requested 40 bytes for 4 allocations)

[RecordingMicroAllocator] 'Persistent buffer data' used 548 bytes with alignment overhead (requested 512 bytes for 17 allocations)

[RecordingMicroAllocator] 'TfLiteTensor variable buffer data' used 10248 bytes with alignment overhead (requested 10240 bytes for 7 allocations)

[RecordingMicroAllocator] 'NodeAndRegistration struct' used 480 bytes with alignment overhead (requested 480 bytes for 15 NodeAndRegistration structs)

- person detection model

Finished in 12416052us

[RecordingMicroAllocator] Arena allocation total 82276 bytes

[RecordingMicroAllocator] Arena allocation head 55304 bytes

[RecordingMicroAllocator] Arena allocation tail 26972 bytes

[RecordingMicroAllocator] 'TfLiteEvalTensor data' used 1068 bytes with alignment overhead (requested 1068 bytes for 89 allocations)

[RecordingMicroAllocator] 'Persistent TfLiteTensor data' used 64 bytes with alignment overhead (requested 64 bytes for 2 tensors)

[RecordingMicroAllocator] 'Persistent TfLiteTensor quantization data' used 40 bytes with alignment overhead (requested 40 bytes for 4 allocations)

[RecordingMicroAllocator] 'Persistent buffer data' used 23824 bytes with alignment overhead (requested 23456 bytes for 88 allocations)

[RecordingMicroAllocator] 'NodeAndRegistration struct' used 992 bytes with alignment overhead (requested 992 bytes for 31 NodeAndRegistration structs)

@BalyshevArtem Do you have benchmark result with luci-interpreter? (The result above is the one with tf-micro?)

@chunseoklee Sorry for the delayed status =_=

@SlavikMIPT is benchmarking luci interpreter. We faced some issues with both target models:

- keyword model uses experimental SVDF operation that is not easy to support right now

- person detection model uses too much RAM, so we have some issues fitting it in hardware we have in SRR (we actually have one board with large enough external RAM, but it requires extra efforts to utilize it)

@chunseoklee

On stm32f767 216MHz with external SDRAM

person detection:

tflite micro: Finished in 6747161us

luci: Finished in 4766142us

I had to make some modifications to make the interpreter use external memory

On stm32f767 216MHz with external SDRAM person detection: tflite micro: Finished in 6747161us luci: Finished in 4766142us

This is a bit interesting. We're still better even with tf-micro's new allocator.

@SlavikMIPT Do you have comparison with sin and kerword model ?

@chunseoklee Here is data for sin(x): https://github.com/Samsung/ONE/issues/5080#issuecomment-743022914

@SlavikMIPT Could you measure it again with new tflite?

Just to be sure data is fresh =)

@SlavikMIPT

tflite micro: Finished in 6747161us luci: Finished in 4766142us

This is really interesting results, thank you!

@chunseoklee for sin(x) it's almost the same ~50us for tflite micro on internal SRAM, for keyword - the model is broken - so I was not able to convert it to circle

@chunseoklee I tried to represent https://github.com/Samsung/ONE/issues/7598#issuecomment-916753214 results for person_detection model with board NUCLEO_F413ZH and CMSIS_NN kernels and got result much more better.

With CMSIS_NN:

invokation time: 386595us

Without CMSIS_NN:

invokation time: 13005446us

Sorry for typo in log msg! =) Anybody can represent my measurements with my local branch using README.md.

The results for STM32F767ZI 216MHz, 512kB on-chip SRAM and 128Mbit SDRAM 187MHz on FSMC

tflite micro with CMSIS_NN on external SDRAM:

invokation time: 393753us

luci-micro with CMSIS_NN on external SDRAM:

invokation time: 313689us

At this moment I can't fit luci in 512kB on-chip SRAM to compare, but working on it

I have run some models on tflite-micro/luci-micro with cmsis-nn from https://github.com/ARM-software/ML-zoo and my own phrase recognition model (MFCC based) on STM32H743ZI Cortex-M7 MCU with 2MBytes of Flash memory, 1MB RAM, 480 MHz CPU(SystemCoreClock is 216MHz for all tests):

Model | tflite-micro | luci-micro |

-------------------------------------------------------

ad_small_int8 | 182721us | 2628189us

-------------------------------------------------------

kws_micronet_s | 97284us | 1771989us

-------------------------------------------------------

speech_recognition | 3409us | 13723us

-------------------------------------------------------

It seems that reference kernels have been used for some operations in luci-micro.

I also ran the benchmarks with model placed in RAM not in FLASH on tflite-micro - inference time has increased on ~10%

Used models and models that can be potentially used on MCU are attached modelzoo.zip

I also ran the benchmarks with model placed in RAM not in FLASH on tflite-micro - inference time has increased on ~10%

This is extremely unexpected results for me =O

480 MHz CPU(SystemCoreClock is 216MHz for all tests)

Does System core clock affect performance? If I understood documentation right, this clock is used for debugging, tracing or measuring time.

Model | tflite-micro | luci-micro | ------------------------------------------------------- ad_small_int8 | 182721us | 2628189us ------------------------------------------------------- kws_micronet_s | 97284us | 1771989us ------------------------------------------------------- speech_recognition | 3409us | 13723us -------------------------------------------------------It seems that reference kernels have been used for some operations in luci-micro.

Сould it be that one of the reasons for such a difference in speed between tflite-micro and luci-micro is that the first allocates memory statically for all tensors, and in the case of the second dynamically?

Or am I wrong, and tflite-micro in your experiments also uses dynamic allocation type?

Model | tflite-micro | luci-micro | ------------------------------------------------------- ad_small_int8 | 182721us | 2628189us ------------------------------------------------------- kws_micronet_s | 97284us | 1771989us ------------------------------------------------------- speech_recognition | 3409us | 13723us -------------------------------------------------------It seems that reference kernels have been used for some operations in luci-micro.

Сould it be that one of the reasons for such a difference in speed between

tflite-microandluci-microis that the first allocates memory statically for all tensors, and in the case of the second dynamically? Or am I wrong, andtflite-microin your experiments also uses dynamic allocation type?

I have done some benchmarks and tracing - the problem (as I said above) is calling reference kernels instead optimized for following operations: Conv2D DepthwiseConv2D AveragePool2D Reshape I will make a pull request with tracing utility compatible with arm soon - so you can debug this

I made a mistake - in the last test I did not notice that reference kernels were used (PAL has been changed after rebase )

I made a comparison on the kws_micronet_s again with correct PAL:

tflite-micro

arm_convolve_s8 31936us

arm_depthwise_conv_wrapper_s8 3558us

arm_convolve_1x1_s8_fast 9059us

arm_depthwise_conv_wrapper_s8 4604us

arm_convolve_1x1_s8_fast 7608us

arm_depthwise_conv_wrapper_s8 3471us

arm_convolve_1x1_s8_fast 6799us

arm_depthwise_conv_wrapper_s8 3468us

arm_convolve_1x1_s8_fast 6800us

arm_depthwise_conv_wrapper_s8 3465us

arm_convolve_1x1_s8_fast 15851us

arm_depthwise_conv_wrapper_s8 614us

arm_convolve_1x1_s8_fast 71us

luci-micro

arm_convolve_s8 323831us

arm_depthwise_conv_wrapper_s8 15876us

arm_convolve_1x1_s8_fast 258151us

arm_depthwise_conv_wrapper_s8 20417us

arm_convolve_1x1_s8_fast 256459us

arm_depthwise_conv_wrapper_s8 15298us

arm_convolve_1x1_s8_fast 193568us

arm_depthwise_conv_wrapper_s8 15298us

arm_convolve_1x1_s8_fast 193585us

arm_depthwise_conv_wrapper_s8 15288us

arm_convolve_1x1_s8_fast 451642us

arm_avgpool_s8 4294us

arm_convolve_1x1_s8_fast 723us

The execution time of the same functions from the CMSIS-NN library is different - so I think the problem is different definitions used during compilation

I have compiled with correct flags, so now results are:

Model | tflite-micro | luci-micro |

-------------------------------------------------------

kws_micronet_s | 97284us | 92275us

-------------------------------------------------------

speech_recognition | 3409us | 2701us

-------------------------------------------------------

@SlavikMIPT @binarman @BalyshevArtem Could you please help to measure the result like https://github.com/Samsung/ONE/issues/7598#issuecomment-1042640533 for new arrived internal models ?

We tried to do it by ourselves, but we did not succeed to compile it like https://github.com/Samsung/ONE/issues/8754

@chunseoklee Can we use python notebooks with the internal models which @lemmaa provided with me yesterday or you already have converted to tf-micro models? If so, could share them in some internal repo?

@chunseoklee Can we use python notebooks with the internal models which @lemmaa provided with me yesterday or you already have converted to tf-micro models? If so, could share them in some internal repo?

Okay, I will after @lemmaa 's approval.

Note that I have converted as follows after model.fit :

# Convert the model.

converter = tf.lite.TFLiteConverter.from_keras_model(model)

tflite_model = converter.convert()

# Save the model.

with open('model.tflite', 'wb') as f:

f.write(tflite_model)

If so, could share them in some internal repo?

@lucenticus , PTAL RS7-RuntimeNTools/model_zoo_da for tflite models converted by @chunseoklee . :)

@lucenticus Note that the model by https://github.com/Samsung/ONE/issues/7598#issuecomment-1082816468 might have non -static input shape.