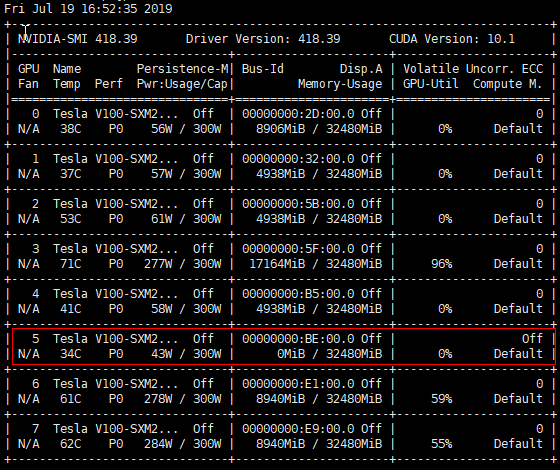

The GPU is not registered when ECC is off

The template below is mostly useful for bug reports and support questions. Feel free to remove anything which doesn't apply to you and add more information where it makes sense.

1. Issue or feature description

The GPU is not registered when ECC is off.

2. Steps to reproduce the issue

The 5th card's ECC is off for an unknown reason.

At this point, the Allocatable count for nvidia.com/gpu on this node in k8s is 7, while Capacity is 8:

    Capacity:
      cpu: 72
      ephemeral-storage: 1146196168Ki
      hugepages-1Gi: 0
      hugepages-2Mi: 0
      memory: 525490100Ki
      nvidia.com/gpu: 8
      pods: 110
      rdma/hca: 1k
    Allocatable:
      cpu: 71500m
      ephemeral-storage: 1050965677560
      hugepages-1Gi: 0
      hugepages-2Mi: 0
      memory: 508028852Ki
      nvidia.com/gpu: 7
      pods: 110
      rdma/hca: 1k
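For anyone trying to reproduce this, the gap between Capacity and Allocatable can also be read programmatically. The following is only a rough sketch, assuming `kubectl` is configured for the cluster. The helper names `gpu_gap` and `fetch_node` are made up for illustration and are not part of the device plugin.

```python
import json
import subprocess

GPU_RESOURCE = "nvidia.com/gpu"


def gpu_gap(node: dict, resource: str = GPU_RESOURCE) -> int:
    """Return capacity minus allocatable for an integer-valued resource.

    `node` is the parsed JSON of a Kubernetes Node object.
    Missing fields are treated as 0.
    """
    status = node.get("status", {})
    capacity = int(status.get("capacity", {}).get(resource, "0"))
    allocatable = int(status.get("allocatable", {}).get(resource, "0"))
    return capacity - allocatable


def fetch_node(name: str) -> dict:
    """Fetch a Node object via kubectl (assumes kubectl is on PATH)."""
    out = subprocess.run(
        ["kubectl", "get", "node", name, "-o", "json"],
        check=True, capture_output=True, text=True,
    ).stdout
    return json.loads(out)
```

Calling `gpu_gap(fetch_node("<node-name>"))` on the node described above would be expected to return 1, given the values shown (8 and 7).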

I tested with the simple demo below. With ECC off, the GPU card is still usable.

    >>> import os
    >>> os.environ["CUDA_VISIBLE_DEVICES"] = "5"
    >>> import tensorflow as tf
    >>> tf.test.gpu_device_name()

What are the considerations for not registering this card when the ECC is off?
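To confirm which cards report ECC as not enabled, something like the sketch below could be used. It assumes `nvidia-smi` is on PATH and that the driver supports the `ecc.mode.current` query field. The function names are hypothetical, and the parser only expects `index, mode` lines as produced by `--format=csv,noheader`.

```python
import subprocess

# nvidia-smi query for per-GPU ECC mode, one "index, mode" line per GPU.
QUERY = [
    "nvidia-smi",
    "--query-gpu=index,ecc.mode.current",
    "--format=csv,noheader",
]


def parse_ecc(csv_text: str) -> dict:
    """Map GPU index -> ECC mode string from 'index, mode' CSV lines."""
    modes = {}
    for line in csv_text.splitlines():
        if not line.strip():
            continue  # skip blank lines
        index, mode = (field.strip() for field in line.split(",", 1))
        modes[int(index)] = mode
    return modes


def gpus_ecc_not_enabled(modes: dict) -> list:
    """Return sorted GPU indices whose ECC mode is anything but 'Enabled'."""
    return sorted(i for i, m in modes.items() if m != "Enabled")


def query_ecc() -> dict:
    """Run nvidia-smi on the host and parse its output."""
    out = subprocess.run(QUERY, check=True, capture_output=True, text=True).stdout
    return parse_ecc(out)
```

Running `gpus_ecc_not_enabled(query_ecc())` on the host would list the indices to compare against the GPU missing from Allocatable. Cards that do not support ECC at all also land in that list, since their mode is not `Enabled`.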

3. Information to attach (optional if deemed irrelevant)

Common error checking:

- [ ] The output of `nvidia-smi -a` on your host
- [ ] Your docker configuration file (e.g: `/etc/docker/daemon.json`)
- [ ] The k8s-device-plugin container logs
- [ ] The kubelet logs on the node (e.g: `sudo journalctl -r -u kubelet`)

Additional information that might help better understand your environment and reproduce the bug:

- [ ] Docker version from `docker version`
- [ ] Docker command, image and tag used
- [ ] Kernel version from `uname -a`
- [ ] Any relevant kernel output lines from `dmesg`
- [ ] NVIDIA packages version from `dpkg -l '*nvidia*'` or `rpm -qa '*nvidia*'`
- [ ] NVIDIA container library version from `nvidia-container-cli -V`
- [ ] NVIDIA container library logs (see troubleshooting)

@tingweiwu Sorry for the late response. Are you still facing this issue with the latest driver?

This issue is stale because it has been open 90 days with no activity. This issue will be closed in 30 days unless new comments are made or the stale label is removed.

This issue was automatically closed due to inactivity.