Rendering bugs with Radeon HD 3650 (RV635) on Linux with Mesa

Given that we recommend an OpenGL 3.2 GPU (and the game may work with OpenGL 2.1), I may plug some older GPUs into my computer to run some tests.

I just did it with a Radeon HD 3650 (RV635) on Linux with Mesa 20.0.8 and glxinfo tells me OpenGL 3.3 is supported.

Don't expect a stellar experience (expect a 30~40 fps range) but it works, and except for a warning telling me SSAO is not used because GL_ARB_texture_gather is not available, all features seem to work as far as I've seen (even relief mapping works, but you have to disable it for performance reasons).

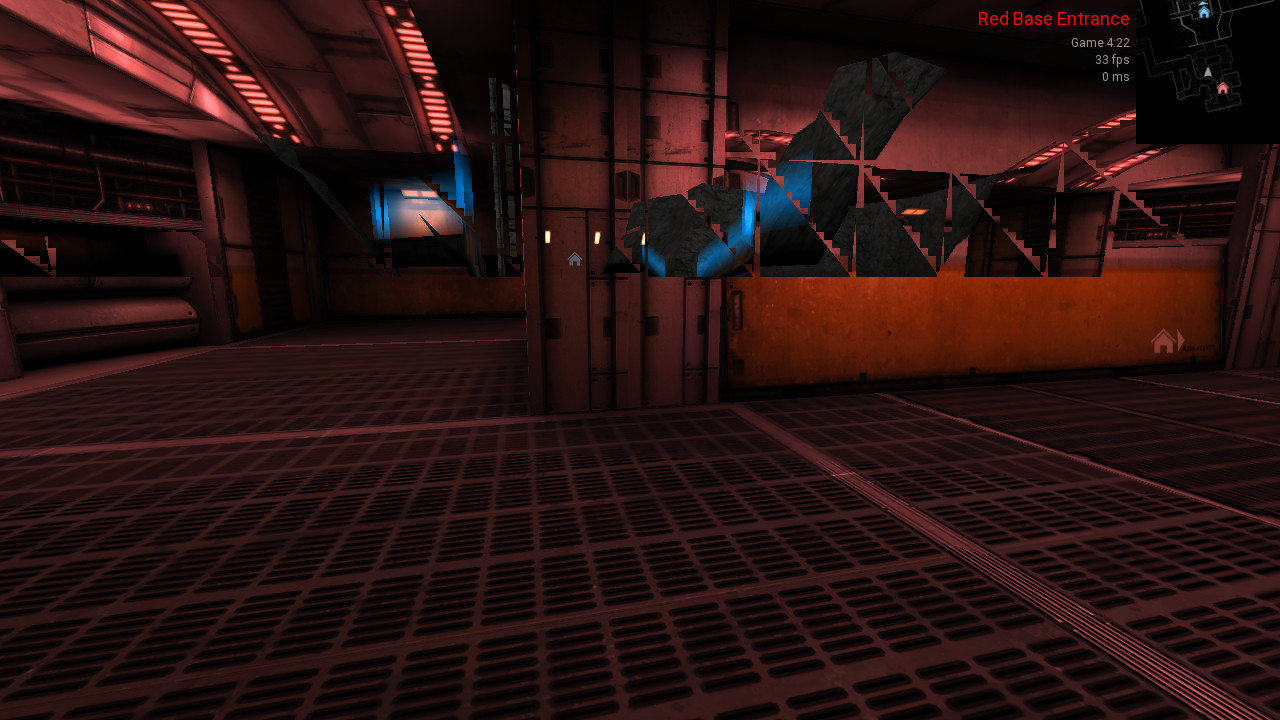

The bug is that some far surfaces that should be hidden by close geometry are rendered on top of the close geometry hiding them. I can get that bug in the plat23 or yocto maps, for example. On the chasm map, when I am outside, the skybox is rendered above everything.

It may also be a driver bug, I don't know.

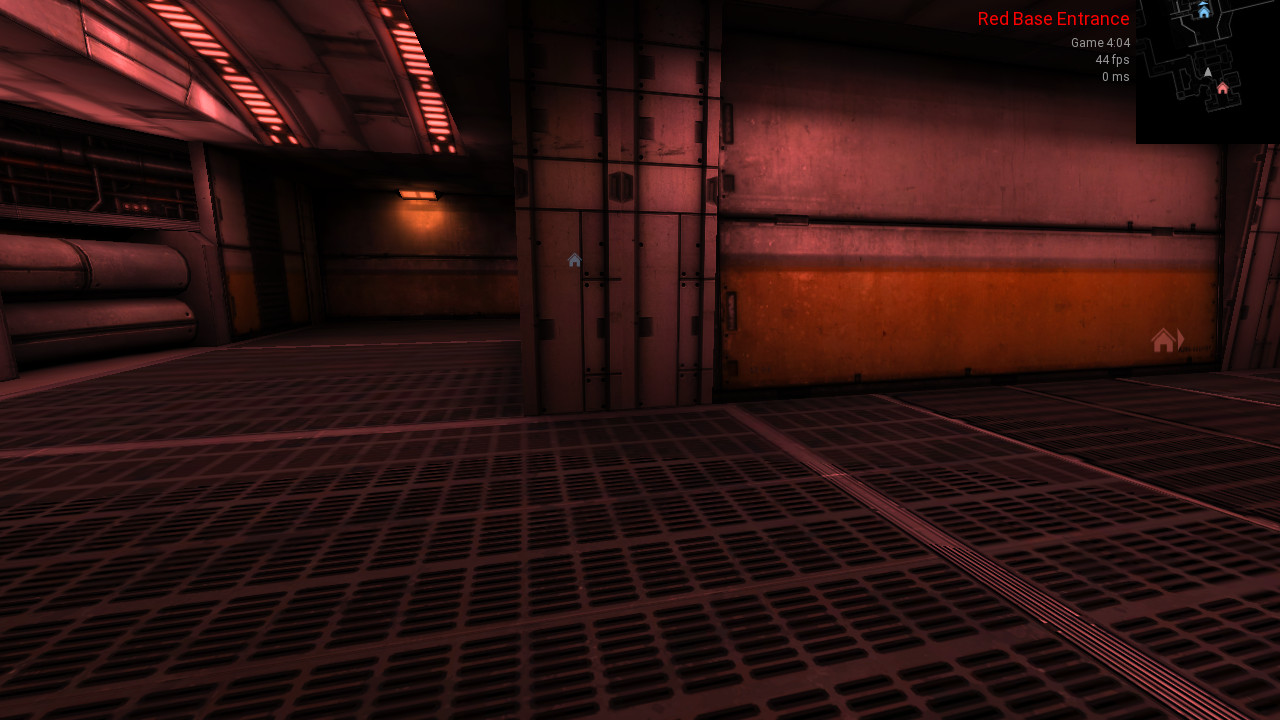

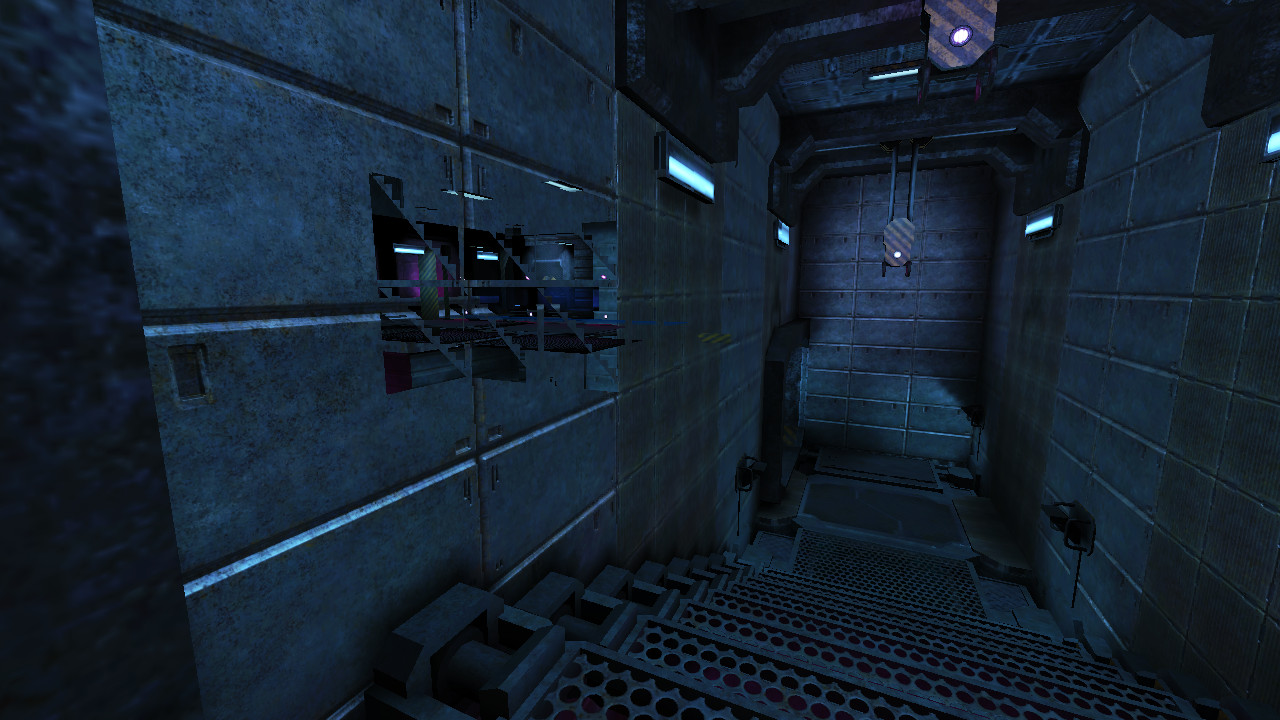

When outside is not rendered:

When outside is rendered (walked a bit to the left):

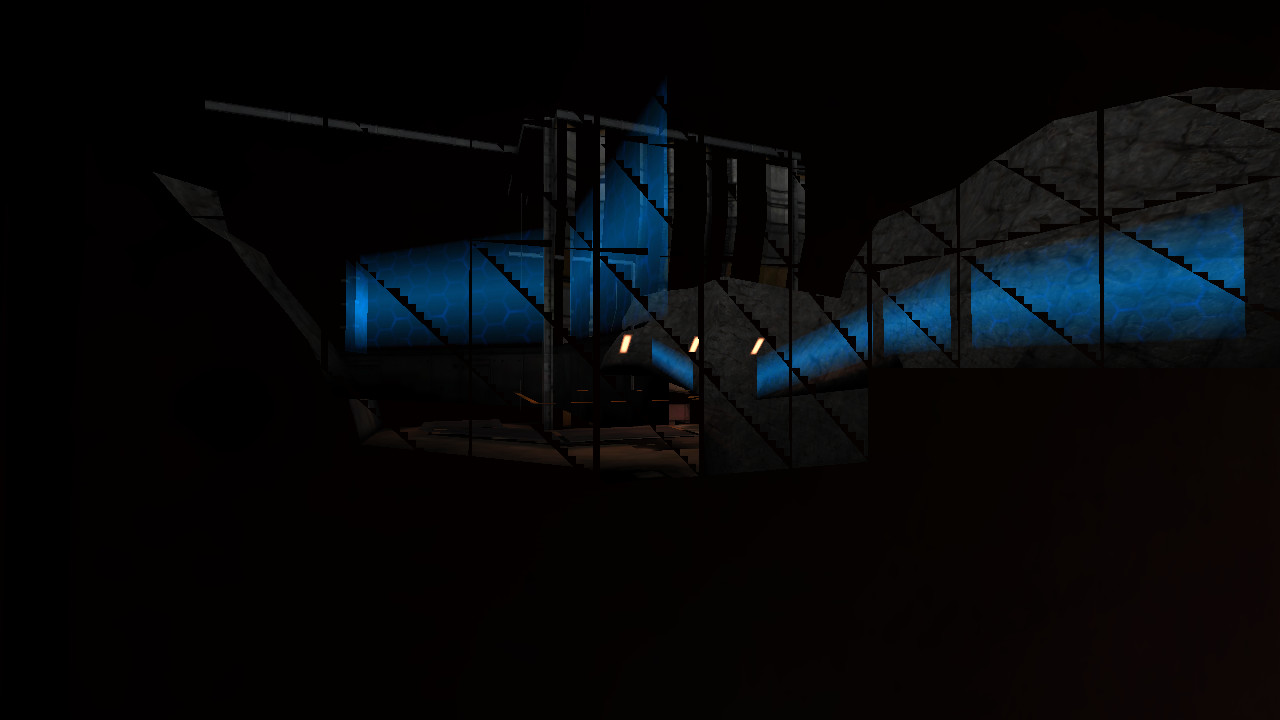

I'm close to a wall entirely hiding my viewport but I still see through:

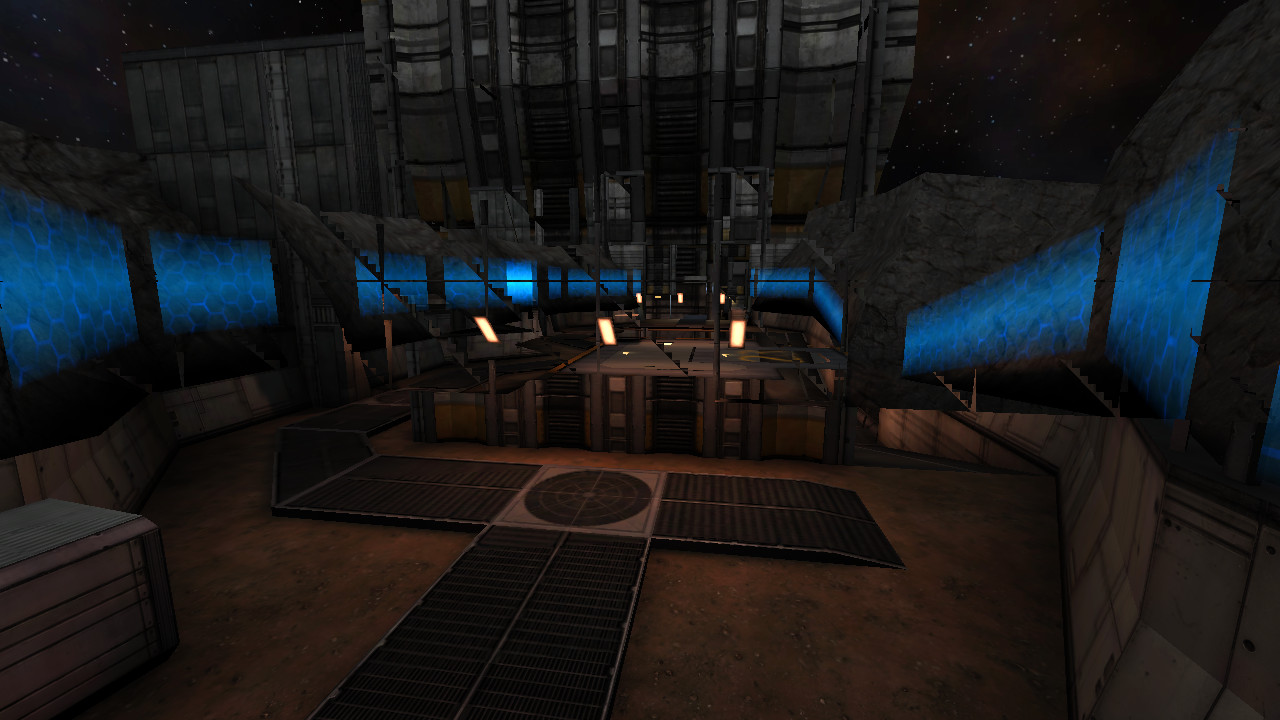

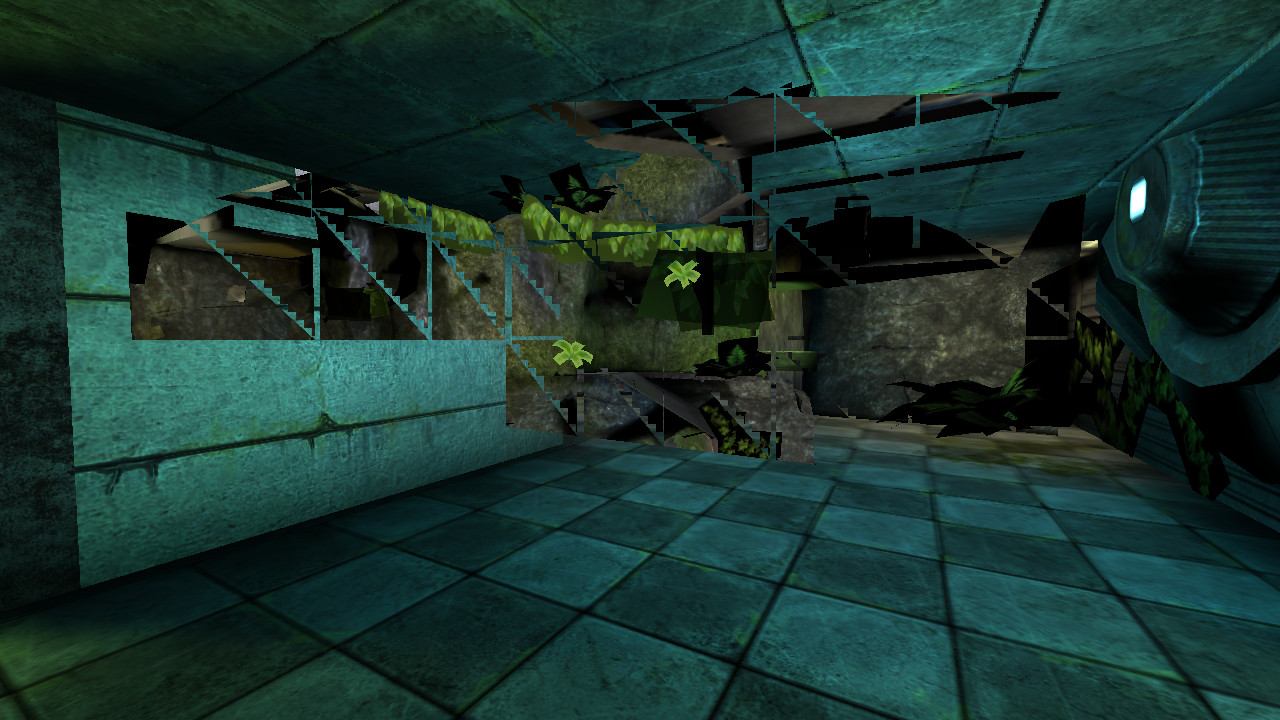

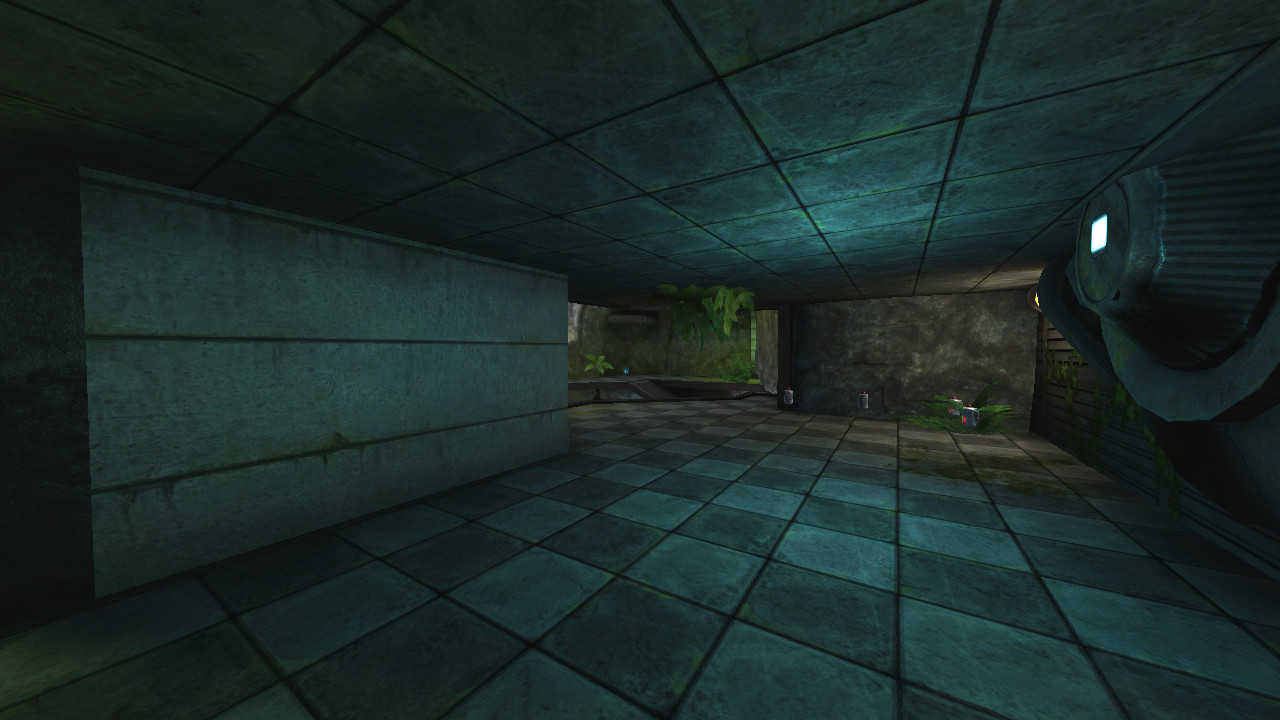

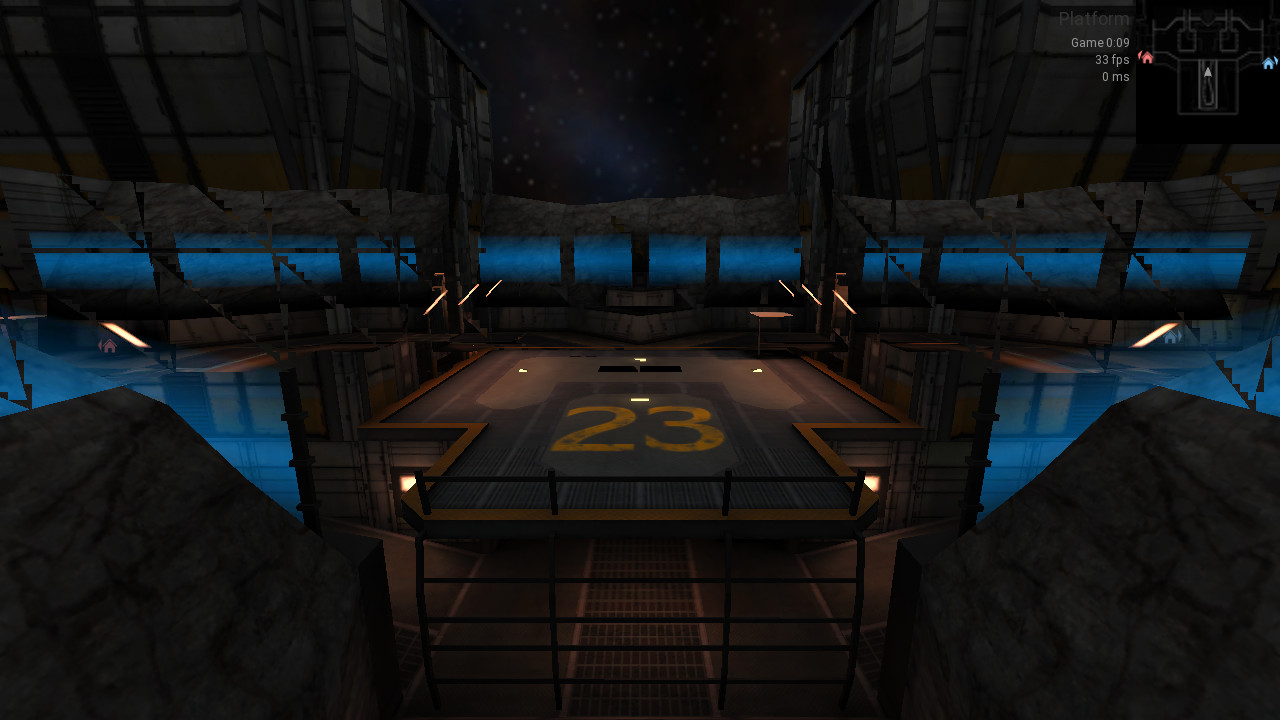

The plat23 map really makes this bug very obvious:

You can also get the bug in yocto:

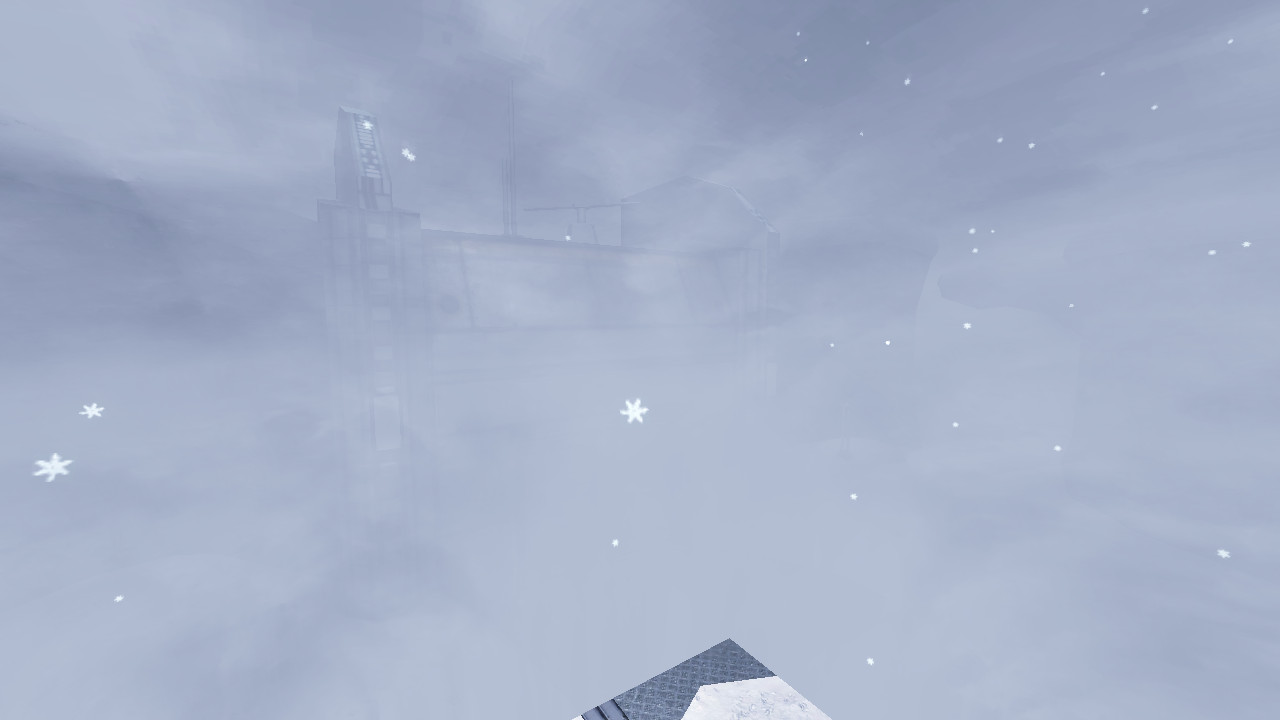

A bug also affects the skybox in chasm; it may be the same bug or another one, I don't know:

It may be a driver issue, but in any case we know it's possible not to trigger it in a game: I reproduce the bug when loading the Xonotic Erbium map in Dæmon, but I do not reproduce it when loading the same map in the DarkPlaces engine:

Dæmon:

DarkPlaces:

Edit: note that Xonotic's engine is an OpenGL 2 renderer while Dæmon is an OpenGL 3 renderer. The features used may differ.

I forgot to say, but I reproduce the bug on both the 0.51 release and the upcoming 0.52 branch, so it's unrelated to the recent renderer revamp.

That really looks like a driver bug.

When enabling triangle display (r_showtris 1), the lines repaint the background texture with the foreground texture:

I tried with four TeraScale 1 GPUs, and two of them reproduce the issue:

- Sapphire Radeon HD 2600 PRO | RV630

- HP Radeon HD 3650 | RV635 PRO (the one I reported first)

But the other two do not reproduce the issue:

- HIS Radeon HD 4670 IceQ | RV730 XT

- Sapphire Radeon HD 4890 Vapor-X | RV790 XT

This is a screenshot with a Radeon HD 2600 PRO:

So, at this point it looks to be a driver bug.

The bug was reported on the Mesa side: gitlab.freedesktop.org:mesa/mesa#3290

David Airlie suggested running the game with the R600_DEBUG=nohyperz environment variable. I tried on both the AGP Radeon HD 2600 PRO (RV630) and the PCIe Radeon HD 3650 (RV635 PRO): disabling this driver feature fixes the bug on both, and I see no impact on performance when playing Unvanquished.

We may implement a workaround for this by setting that environment variable with setenv.
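A minimal sketch of what setting the variable could look like, assuming a POSIX system. The helper name `EnableNoHyperZ` is hypothetical, and appending to an existing value with a comma assumes that Mesa accepts a comma-separated list of debug flags. It only has an effect if it runs before the driver reads the variable.

```cpp
#include <stdlib.h> // POSIX getenv/setenv
#include <string>

// Hypothetical helper: adds "nohyperz" to R600_DEBUG while keeping any
// flags the user already set. Returns true on success.
bool EnableNoHyperZ()
{
    const char* current = getenv("R600_DEBUG");
    std::string value = current ? current : "";

    // Nothing to do if the flag was already requested.
    if (value.find("nohyperz") != std::string::npos) {
        return true;
    }

    // Assumption: flags are comma-separated.
    if (!value.empty()) {
        value += ",";
    }
    value += "nohyperz";

    // The last argument (1) asks setenv to overwrite an existing value.
    return setenv("R600_DEBUG", value.c_str(), 1) == 0;
}
```

Preserving the existing value avoids silently dropping other debug flags a user may have set on purpose.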

Affected GPUs are expected to be:

- Radeon HD 2000 Series (TeraScale 1) https://en.wikipedia.org/wiki/Radeon_HD_2000_series
  - Radeon HD 2400: AMD RV610
  - Radeon HD 2600: AMD RV630 (also Radeon HD 2600 X2)
  - Radeon HD 2900: AMD R600

- Radeon HD 3000 Series (TeraScale 1) https://en.wikipedia.org/wiki/Radeon_HD_3000_series
  - Radeon HD 3800: AMD RV670 (also Radeon HD 3870 X2)
  - Radeon HD R690/3830: ??? (probably AMD RV635)
  - Radeon HD 3600: AMD RV635 (also Radeon HD 3650)
  - Radeon HD 3400: AMD RV620

The AMD RV610 string and others are the ones expected to be found in GL_RENDERER.
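As a rough sketch, detecting those chips from GL_RENDERER could look like the following. The function name `IsHyperZAffectedRenderer` is hypothetical, and the exact layout of the renderer string (chip name followed by a space or the end of the string) is an assumption, not something taken from Mesa's documentation.

```cpp
#include <array>
#include <cstddef>
#include <string>

// Hypothetical helper: returns true if the GL_RENDERER string names one of
// the chips listed above as expected to be affected.
bool IsHyperZAffectedRenderer(const std::string& renderer)
{
    static const std::array<const char*, 6> affected = {
        "AMD R600", "AMD RV610", "AMD RV620",
        "AMD RV630", "AMD RV635", "AMD RV670",
    };

    for (const char* name : affected) {
        const std::string chip = name;
        const std::size_t pos = renderer.find(chip);

        if (pos == std::string::npos) {
            continue;
        }

        // Assumption: the chip name is followed by a space or ends the
        // string, so that a longer name sharing the same prefix is rejected.
        const std::size_t end = pos + chip.size();
        if (end == renderer.size() || renderer[end] == ' ') {
            return true;
        }
    }

    return false;
}
```

With this sketch, a string starting with "AMD RV635" matches, while "AMD RV730" and "AMD RV790" do not.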

What I'm afraid of is that we may have to set the variable before LibGL is loaded, and thus before knowing whether the GPU requires it. But the R600_DEBUG variable may also be honored by devices other than the TeraScale 1 HD 2000/HD 3000 ones, and disabling the feature for all cards supporting that environment variable may reduce performance on the ones whose HyperZ feature is not broken.

For example, it is known that the TeraScale 1 RV730 XT (HD 4670) and RV790 XT (HD 4890) are not affected; those likely support the R600_DEBUG=nohyperz environment variable but don't need it.

Edit: It is also expected that TeraScale 2 and 3 support the R600_DEBUG=nohyperz environment variable although they don't need it.

In Mesa the R600_DEBUG=nohyperz environment variable is read by r600_common_screen_init() in src/gallium/drivers/r600/r600_pipe_common.c after building the GL_RENDERER string, so by the time we know the card is a buggy one, it's too late to set the environment variable to enable the workaround…

Maybe we can create a temporary GL context just to detect the GPU, and then destroy it?

This would be annoying because our GL context creation code iterates over various versions until one works. I was thinking more of recreating the window (and thus the context) when the bad GPU is detected.

So just do a check after the normal context creation, and then set the env variable and recreate context if needed? That should work I think.
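The idea can be sketched as follows. This is only an illustration of the control flow: `ContextHooks` and `CreateContextWithWorkaround` are hypothetical names, and the callbacks stand in for the engine's real window and context code, which is not shown here.

```cpp
#include <functional>
#include <string>

// Hypothetical hooks standing in for the engine's real code.
struct ContextHooks {
    // Creates the window and GL context, returns the GL_RENDERER string.
    std::function<std::string()> createContext;
    // Destroys the window and GL context.
    std::function<void()> destroyContext;
    // Tells whether the detected renderer needs the workaround.
    std::function<bool(const std::string&)> needsWorkaround;
    // Applies the workaround (for example by setting R600_DEBUG=nohyperz).
    std::function<bool()> applyWorkaround;
};

// Creates the context normally, then recreates it once if the detected GPU
// needs the workaround. Returns how many times a context was created.
int CreateContextWithWorkaround(const ContextHooks& hooks)
{
    const std::string renderer = hooks.createContext();

    if (!hooks.needsWorkaround(renderer)) {
        return 1;
    }

    // If the workaround cannot be applied, keep the existing context.
    if (!hooks.applyWorkaround()) {
        return 1;
    }

    // The driver only reads the variable at initialization, so the context
    // has to be recreated for the new value to be taken into account.
    hooks.destroyContext();
    hooks.createContext();
    return 2;
}
```

Unaffected GPUs go through a single context creation, so only the buggy ones pay the cost of the restart.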

I implemented a fix in:

- https://github.com/DaemonEngine/Daemon/pull/1224

With workaround.noHyperZ.mesaRv600 off:

With workaround.noHyperZ.mesaRv600 on:

The fix requires restarting SDL after GL_RENDERER has been read, so a mechanism to restart SDL under some conditions was implemented.

It was tested on Radeon HD 3650 (RV635) on Mesa 24.0.9.

I noticed that enabling r_fastsky also works around the bug, I don't know why.